Contextualizing the Impact of Geopolitical Tensions on Airline Economics

The recent geopolitical tensions stemming from the ongoing conflict in Iran have had profound implications for several sectors, particularly aviation. Chinese airlines have experienced disproportionate financial strain compared with their global counterparts because of a confluence of factors. The surge in jet fuel prices, a direct result of military actions initiated by the U.S. and Israel, coupled with competitive pressure from China’s expanding high-speed rail network, has created a challenging operating environment for these carriers. Unlike many international airlines that use hedging strategies to mitigate fuel price volatility, Chinese airlines remain largely unhedged, which leaves them more exposed to escalating fuel costs. The result has been significant market share losses and a projected combined net loss for major Chinese carriers in 2026.

Main Goal: Mitigating Financial Vulnerabilities in the Aviation Sector

The primary objective for Chinese airlines is to develop strategies that reduce their exposure to fuel price volatility and competitive market pressures. Achieving this requires comprehensive risk management, including increased fuel hedging and innovative pricing strategies. With these measures in place, airlines can better insulate themselves from external shocks and maintain operational viability.

Advantages of Implementing Strategic Changes

Reduced Exposure to Fuel Price Fluctuations: By hedging fuel, airlines can stabilize their fuel costs, enabling more predictable financial forecasting and budgeting. This approach has been used successfully by airlines such as Singapore Airlines, which reported substantial gains from fuel hedging.

Enhanced Pricing Power: Dynamic pricing can help airlines manage fare increases without excessively burdening consumers. This is particularly important in a price-sensitive domestic market where demand is easily eroded.

Increased Competitive Edge: As the rail network expands, airlines that differentiate themselves through superior customer service and innovative offerings may retain market share against high-speed rail alternatives.

Financial Resilience: State-owned enterprises may leverage government backing to raise equity and support their balance sheets, reducing bankruptcy risk amid financial turmoil.

Caveats and Limitations

These strategies have limits. Oil prices are inherently volatile, so even well-hedged airlines can face financial distress under extreme market swings. In addition, the competitive landscape keeps evolving, and aggressive fare pricing can lead to ‘demand destruction’ if airlines over-rely on fuel surcharges.

Future Implications of AI in the Aviation Industry

Artificial intelligence (AI) will significantly shape the future of the aviation industry. Predictive analytics can improve operational efficiency by better forecasting fuel needs and optimizing fuel purchasing. AI can also improve customer service through personalized experiences and streamlined booking, which supports revenue. As AI continues to evolve, its application in financial modeling and risk assessment will help airlines make informed decisions that reduce financial vulnerabilities, leading to a more resilient aviation sector.

Disclaimer

The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly.
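As a rough illustration of the fuel hedging point in the airline summary above, the sketch below blends a locked-in price with the spot price. It assumes a simple fixed-price hedge on a fraction of fuel volume; the function name, the prices, and the hedge ratios are all hypothetical and are not taken from any airline’s actual hedging program.

```python
def effective_fuel_price(spot_price, hedge_price, hedge_ratio):
    """Blended price per unit of fuel when `hedge_ratio` of the volume
    is locked in at `hedge_price` and the rest is bought at `spot_price`.

    Assumes a plain fixed-price hedge; real programs use swaps, collars,
    and options with premiums that this sketch ignores.
    """
    if not 0.0 <= hedge_ratio <= 1.0:
        raise ValueError("hedge_ratio must be between 0 and 1")
    return hedge_ratio * hedge_price + (1.0 - hedge_ratio) * spot_price


# Hypothetical numbers, for illustration only.
HEDGE_PRICE = 90.0

for spot in (60.0, 90.0, 120.0, 150.0):
    unhedged = effective_fuel_price(spot, HEDGE_PRICE, 0.0)
    half_hedged = effective_fuel_price(spot, HEDGE_PRICE, 0.5)
    print(f"spot={spot:6.1f}  unhedged={unhedged:6.1f}  50% hedged={half_hedged:6.1f}")
```

Under these assumptions the half-hedged carrier sees a narrower range of fuel prices than the unhedged one. The same formula also shows the downside noted in the caveats: when the spot price falls below the locked-in price, the hedged carrier pays more than the market.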

Contextual Overview

Following the SEC Tournament quarterfinal between the Florida Gators and the Alabama Crimson Tide, head coach Kevin O’Sullivan and third baseman Ethan Surowiec discussed their team’s performance and strategic approach. The Gators won 13-3, a result that improves their standing in the SEC and reflects their upward trajectory as one of the league’s most formidable teams. O’Sullivan emphasized the performance of the pitching staff, particularly Liam Peterson, while Surowiec highlighted the offensive strategies behind the team’s recent successes.

Main Goals and Achievements

The primary objective articulated in the original discussion is to maintain a competitive edge while navigating the complexities of postseason play. The Gators aim to consolidate their strengths as they advance, which puts the emphasis on strategic pitching and adaptive offensive techniques. Achieving this involves leveraging the players’ skills and building confidence, especially in light of previous victories against rivals such as Georgia. A cohesive game plan and attention to player health are crucial for sustaining performance throughout the tournament.

Advantages of the Current Strategy

Enhanced Pitching Depth: O’Sullivan remarked on the return of key bullpen arms, which strengthens the pitching staff. This depth allows for more strategic matchups against opponents and improves the odds in high-pressure situations.

Player Confidence: Surowiec’s comments on offensive strategy reveal a focus on timely hitting and situational awareness, which builds confidence among players. This psychological edge can significantly affect performance under pressure.

Adaptability: The ability to adjust the offensive approach to game circumstances, such as using the back side of the field as Surowiec indicated, reflects a flexible approach that can counter opponents’ defensive tactics.

Health Management: Prioritizing player health is essential during tournaments, as O’Sullivan’s emphasis on the recovery of key players shows. Healthy players are more likely to perform at their peak, which is critical in high-stakes games.

Limitations and Caveats

These advantages have limits. Tournament play is unpredictable, so strategic advantages can be undermined by unexpected performances from opponents or injuries to key players. And while confidence is vital to success, overconfidence can lead to complacency and hurt performance.

Future Implications of AI Developments in Sports Analytics

As artificial intelligence continues to evolve within sports analytics, its implications for teams like the Florida Gators are significant. AI can deepen performance analysis by providing insights into player statistics, health metrics, and game strategies. For example, AI-driven analysis can identify patterns in opponent behavior, allowing teams to tailor their game plans more effectively. Predictive analytics could also forecast player performance trends, helping coaches make more informed decisions about lineups and strategies.

In conclusion, the integration of AI in sports analytics will likely transform how teams prepare for and compete in tournaments. With these tools, teams can optimize their performance, mitigate risks associated with player health, and sharpen their overall competitive edge.

Context of the Announcement

The field of computer vision has advanced remarkably, in part through technologies such as the Ultralytics YOLO (You Only Look Once) framework. This platform, developed by Glenn Jocher, operates at an impressive scale, executing approximately 2.5 billion model inferences daily across sectors including robotics, healthcare, and manufacturing. The announcement of Jocher’s participation in the upcoming OSCCA (Open Source Computer Vision Conference) event in Los Angeles on May 4th reflects the ongoing evolution of vision AI.

Main Goal of the Presentation

The primary objective of Glenn Jocher’s presentation at OSCCA is to show how YOLO can make advanced vision AI accessible to a broader audience. By sharing insights into the development and deployment of YOLO, Jocher aims to give attendees the knowledge they need to use these technologies effectively. This is particularly relevant for vision scientists and practitioners who want to understand the practical applications of object detection AI in real-world scenarios.

Advantages of Attending OSCCA

Exclusive Access to Expertise: Attendees will benefit from Jocher’s pre-recorded talk, which will not be available to the general public. This gives participants insights directly from a leading expert in the field.

Networking Opportunities: The event brings together vision scientists, developers, and industry leaders, fostering collaborations that can lead to advances in research and application.

Comprehensive Knowledge Sharing: In addition to Jocher, OSCCA features presentations from other prominent figures in computer vision, such as Gary Bradski and Doug Fidaleo, providing a well-rounded view of current trends and innovations.

Exposure to Cutting-Edge Technologies: The associated Display Week Exhibition Hall showcases the latest advances in AI and imaging technologies, offering attendees firsthand experience with the tools shaping the field.

Caveats and Limitations

Participants should also consider potential limitations. Because the program is concentrated, the depth of each topic may be constrained, limiting the opportunity to explore specific interests in detail. The event is also held only in Los Angeles, which may pose accessibility challenges for some interested parties.

Future Implications of AI Developments

The continued evolution of AI technologies, particularly in computer vision, will significantly influence the landscape for vision scientists. As tools like YOLO become more sophisticated and widely adopted, demand will increase for skilled professionals who can navigate and implement them. The integration of AI into various sectors will also likely lead to new applications, prompting a reevaluation of existing methodologies and practices in vision science.

Conclusion

In summary, Glenn Jocher’s talk at OSCCA is a notable opportunity for attendees interested in the intersection of AI and computer vision. The insights shared during the session, along with the networking opportunities and exposure to emerging technologies, are valuable for both current practitioners and aspiring vision scientists. Engaging with this content builds individual expertise and contributes to the broader discourse on the future of AI in vision applications.

Contextual Overview

In contemporary cloud computing environments, performance is not merely an attribute of individual resources; it depends on how compute, storage, and networking work together. The Azure Infrastructure as a Service (IaaS) platform takes a system-level approach that helps organizations achieve consistent and scalable performance across demanding workloads, including artificial intelligence (AI), cloud-native applications, and critical business systems. This architecture matters to data engineers who must ensure that their solutions are robust, efficient, and adaptable to evolving demands.

Main Goal and Achievement

The central objective of Azure IaaS is to enhance performance by integrating resources in a way that minimizes bottlenecks and optimizes throughput across all system dimensions. Achieving this requires a shift from traditional resource allocation strategies to a more holistic, system-oriented approach. It involves using Azure’s capabilities to automate performance tuning, so that data engineers can focus on application design and functionality rather than on manually adjusting each layer.

Advantages of the System-Level Approach

Holistic Performance Management: Azure IaaS enables performance assessment across multiple dimensions, including latency, throughput, scalability, and consistency, allowing for a comprehensive evaluation of workload efficiency.

Dynamic Resource Scalability: The platform supports elastic scaling, which is particularly beneficial for cloud-native applications with fluctuating demand, so that resources are provisioned efficiently in real time.

Enhanced AI Workload Support: The system is designed to optimize performance for AI tasks, keeping data movement and processing synchronized to meet the high demands of machine learning training and inference.

Reduced Operational Overhead: By integrating compute, storage, and networking capabilities, Azure IaaS simplifies infrastructure management, allowing data engineers to prioritize innovation over maintenance.

Predictable Performance for Business-Critical Systems: The architecture is intended to deliver consistent performance under varying loads, which is vital for transactional systems and enterprise applications that require reliability and low latency.

Considerations and Limitations

The system-level approach may not eliminate all performance challenges. The dynamic nature of cloud workloads can still lead to unpredictable bottlenecks, particularly if workloads are not properly balanced across the system. Organizations must keep monitoring performance metrics and be prepared to adapt their strategies as workloads evolve.

Future Implications of AI on Big Data Engineering

The convergence of AI and big data engineering is poised to reshape cloud performance optimization. As AI applications become more sophisticated, the demand for seamless data processing and rapid computation will intensify. Azure IaaS is well positioned to accommodate these shifts through its system-level architecture, which can adapt to the complexities introduced by AI. The integration of AI-driven tools within Azure will also likely enhance predictive analytics, enabling proactive performance tuning and resource allocation and helping data engineers achieve their goals more efficiently.

Contextual Overview of AI-Powered Marketing

In the rapidly evolving landscape of marketing technology, artificial intelligence (AI) has emerged as a transformative force. A notable instance is the recent partnership between Digital Turbine and Google Cloud, which aims to enhance AI-driven optimization and recommendation capabilities across Digital Turbine’s mobile platform. The collaboration reflects a broader industry trend toward using real-time mobile signals to improve targeting, engagement, and overall performance for advertisers and publishers.

As mobile ecosystems grow more complex, the ability to harness real-time data is crucial for delivering high-impact advertising. The partnership represents a significant investment in AI-powered intelligence, enabling organizations to process and act on the vast amounts of data generated within the mobile ecosystem.

Main Goal and Achievement Strategy

The primary goal of Digital Turbine’s partnership with Google Cloud is to embed advanced AI capabilities within its platform to create a more intelligent and adaptive marketing environment. This is to be achieved by integrating the Gemini Enterprise Agent Platform with Digital Turbine’s existing technology. By building on Google Cloud’s infrastructure, Digital Turbine aims to enhance its core intelligence systems and thereby improve the efficacy of its advertising and publisher solutions.

Advantages of AI Integration in Marketing

Enhanced Targeting: AI allows for more precise targeting of advertisements, leading to improved user engagement and conversion rates. Processing real-time signals lets advertisers tailor their campaigns to current user behavior.

Real-Time Optimization: AI technologies support continuous learning and adaptation, optimizing marketing strategies in real time. This allows businesses to respond swiftly to market changes and consumer preferences.

Scalability: Google Cloud’s infrastructure enables Digital Turbine to scale its operations effectively, processing millions of mobile signals without sacrificing performance or security.

Data-Driven Insights: The combination of proprietary signals and advanced AI capabilities yields deeper insights into market trends and audience behavior, supporting data-driven decision-making among marketers.

Privacy and Security: The partnership emphasizes a secure and governed AI foundation, so that user privacy is maintained while maximizing performance in mobile advertising.

Caveats and Limitations

The integration of AI into marketing platforms also has potential limitations. The effectiveness of AI systems depends on the quality and richness of the data they process. In addition, as AI technologies evolve, challenges may arise around algorithmic bias and the ethical use of consumer data. Marketers must address these issues to maintain trust and comply with regulatory standards.

Future Implications of AI in Marketing

Continued advances in AI technology are poised to reshape the marketing landscape significantly. As organizations like Digital Turbine enhance their capabilities through strategic partnerships, we can expect a greater emphasis on real-time decision-making and adaptive marketing strategies. Future developments may include more sophisticated predictive analytics, enabling marketers to anticipate consumer behavior more accurately and tailor their offerings accordingly.

In conclusion, the integration of AI into marketing practices improves operational efficiency and supports a more responsive and personalized consumer experience. As the industry progresses, collaboration between technology leaders will likely set new standards for what is achievable in AI-powered marketing.

Contextual Background

The collaboration between Humanoid, Bosch, and Schaeffler marks a significant milestone in the evolution of humanoid robotics, particularly within Smart Manufacturing. The partnership aims to scale the production and deployment of the HMND 01 platform, which is designed to operate in industrial environments. As industries increasingly look to advanced robotics to improve productivity and efficiency, such collaborations become pivotal. A successful proof of concept (POC) completed in March 2026 paved the way for this alliance, illustrating the urgency and potential of humanoid robots in real-world applications.

Main Goal and Achievement Strategy

The primary objective of the partnership is to move from prototype validation to large-scale deployment of humanoid robots in industrial sectors including logistics and manufacturing. Each partner has a distinct role: Humanoid provides the robotic solutions, Bosch offers extensive manufacturing capabilities, and Schaeffler integrates the technology into live operations. By drawing on Bosch’s manufacturing expertise and Schaeffler’s operational framework, Humanoid aims to bring humanoid robots into the mainstream industrial landscape efficiently.

Advantages of the Partnership

Enhanced Production Capabilities: By partnering with Bosch, Humanoid benefits from robust manufacturing infrastructure, enabling rapid scaling of production to meet market demand.

Innovative Design for Excellence (DfX): The commitment to DfX principles means the robots are designed for manufacturability, reliability, and cost-effectiveness, which improves long-term economic viability.

Proven Technology: The successful POC demonstrated the technical readiness of the HMND 01 robots, confirming their ability to handle diverse tasks in dynamic environments.

Strategic Supply Chain Integration: Schaeffler’s role as a preferred supplier supports a consistent flow of high-quality components essential for producing humanoid robots, minimizing delays and production costs.

Autonomy and Flexibility: Humanoid robots are designed to operate in human-centric spaces without significant modifications to existing infrastructure, which makes deployment flexible.

Future Implications of AI in Humanoid Robotics

The integration of artificial intelligence (AI) in humanoid robotics is expected to affect the industry in several ways. As AI technologies evolve, the autonomy of humanoid robots is anticipated to increase, with targets aiming for a 99.5% success rate in autonomous operations. This would improve efficiency and reduce the need for human oversight in routine tasks. AI will also play a critical role in adaptive learning, enabling robots to refine their operational capabilities through experience. A shift toward a simulation-first approach to training will further reduce reliance on extensive real-world data, streamlining deployment. As AI continues to advance, humanoid robots will be able to take on more complex and dexterous tasks, positioning them as essential assets in modern manufacturing environments.
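To give a feel for what the 99.5% autonomous success target in the humanoid robotics summary would mean for human oversight, here is a small back-of-the-envelope sketch. The workload sizes and the assumption that task outcomes are independent are illustrative choices of this example, not figures from the partnership.

```python
SUCCESS_RATE = 0.995  # target quoted in the summary


def expected_interventions(num_tasks, success_rate=SUCCESS_RATE):
    """Expected number of tasks that fail and need a human to step in."""
    return num_tasks * (1.0 - success_rate)


def chain_success_probability(num_steps, success_rate=SUCCESS_RATE):
    """Probability that `num_steps` consecutive tasks all succeed,
    assuming each task succeeds independently (a simplifying assumption)."""
    return success_rate ** num_steps


# Hypothetical workload sizes, for illustration only.
for tasks in (100, 1_000, 10_000):
    print(
        f"{tasks:>6} tasks: about {expected_interventions(tasks):.1f} interventions, "
        f"P(no failure at all) = {chain_success_probability(tasks):.4f}"
    )
```

Under these assumptions, roughly one task in 200 still needs attention, and long unattended runs with no failure at all become unlikely, which is why routine oversight shrinks but does not disappear.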

Context

The emergence of digital front doors in healthcare has transformed how health plans connect with their members, facilitating access to efficient, high-quality care. However, current systems often fall short, leading to significant financial inefficiencies. Inaccurate provider directories, generic search results, and a lack of tailored recommendations direct members toward inappropriate or higher-cost providers. This misalignment delays necessary care and contributes to the broader problem of healthcare waste, estimated at around $400 billion annually from low-value and unnecessary services. These challenges call for an immediate reevaluation of digital front door strategies, particularly given tightening margins and increasing pressure on medical loss ratios.

Main Goal and Achievement Strategies

The primary goal outlined in the original discourse is to transform the digital front door from a passive access point into an active mechanism for cost control and quality improvement in healthcare delivery. Achieving this involves integrating advanced technology, such as AI-driven navigation and provider performance analytics, into existing digital front door frameworks. With these tools, health plans can improve member navigation, optimize connections to high-value care providers, and ultimately reduce unnecessary medical expenditures. This proactive approach addresses immediate inefficiencies and positions health plans to improve overall member outcomes and satisfaction.

Advantages of Optimizing the Digital Front Door

Enhanced Member Navigation: By streamlining access to high-value providers, members can receive appropriate care more efficiently, reducing the likelihood of unnecessary treatments and associated costs.

Improved Cost Management: AI capabilities allow for better monitoring of care pathways, identification of low-value services, and realignment of resources to high-quality providers, thereby improving return on investment (ROI) by a cited 20 to 40 times.

Data-Driven Care Decisions: Performance data enables health plans to refine their networks so that members are matched with providers who deliver superior care outcomes.

Reduction of Care Delays: Addressing the “hassle map” that members encounter when seeking care, particularly in specialty services, can lead to timely interventions and better health results.

There are also potential limitations. The effectiveness of these strategies relies heavily on the accuracy of the data used for provider matching and on the ongoing evolution of AI technologies. Health plans must also make sure their digital front door solutions are user-friendly and integrate seamlessly with existing infrastructure to avoid further complications.

Future Implications

The integration of AI into healthcare systems, particularly digital front doors, has significant future implications. As AI technologies advance, predictive analytics should improve, allowing health plans to anticipate member needs and tailor care pathways accordingly. More sophisticated AI will also enable a more personalized healthcare experience in which members receive care recommendations based on historical data and individual health profiles. HealthTech professionals must stay abreast of these developments, as successful adaptation will be crucial for maintaining a competitive advantage in an increasingly digital healthcare landscape. As these tools develop, they will improve operational efficiency and support a healthcare environment that prioritizes high-value, outcome-oriented care.

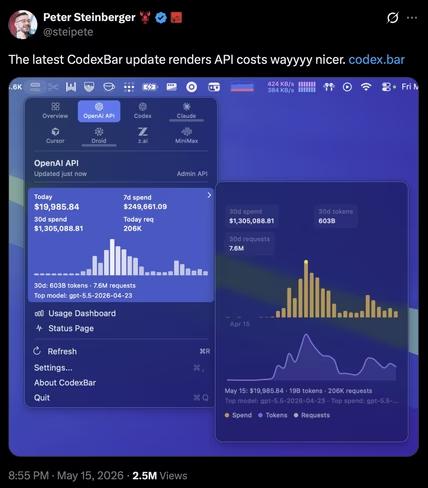

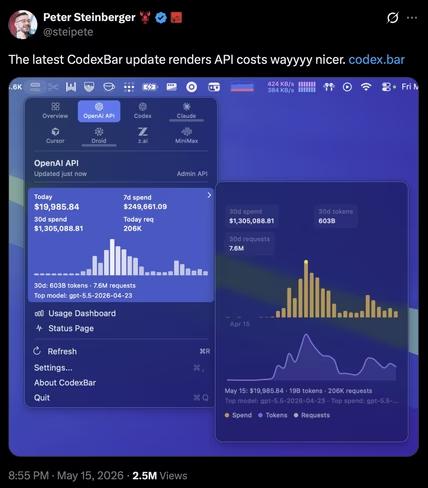

Context

The advent of artificial intelligence (AI) in software development has ushered in unprecedented changes, as exemplified by Peter Steinberger’s recent endeavors with OpenClaw. Over a span of 30 days, Steinberger, now an engineer at OpenAI, utilized 100 AI Codex instances, resulting in an expenditure of $1.3 million in API tokens. This expenditure, amounting to 603 billion tokens across 7.6 million requests, serves as a pivotal case study, shedding light on the economic implications of employing autonomous AI agents in software development without financial constraints. The data underscores not only the rapid escalation of costs associated with continuous AI operation but also provides a crucial public data point regarding the financial dynamics of large-scale autonomous coding.

Main Goal and Achievement Methodology

The principal objective of Steinberger’s initiative is to explore the capacities and economic ramifications of autonomous AI-driven software development. By leveraging an extensive array of Codex instances, Steinberger aimed to establish a benchmark for understanding the cost implications tied to the operation of AI agents in a large-scale coding environment. Achieving this goal necessitates a rigorous approach to monitoring resource consumption, understanding token economics, and optimizing AI operations to discern the balance between cost and productivity. Furthermore, the commitment to open-source principles ensures that the insights generated can benefit the broader developer community.

Advantages of AI in Software Development

Increased Efficiency: Steinberger’s autonomous development pipeline enables tasks such as reviewing pull requests and scanning for security vulnerabilities, traditionally requiring a larger engineering team, to be executed by a small group of humans overseeing multiple AI agents.

Scalability: The ability of AI agents to manage extensive workloads, as evidenced by the completion of 7.6 million requests, demonstrates the potential for scaling development processes far beyond human capabilities.

Real-time Performance Monitoring: AI agents can continuously monitor performance and flag regressions, ensuring immediate feedback and adjustments to the development process.

Cost Transparency: Steinberger’s detailed breakdown of costs, including the implications of different pricing models, provides valuable insights for organizations contemplating the integration of AI tools into their development workflows.

Caveats and Limitations

Despite the advantages, there are significant caveats to consider. The high operational costs, as demonstrated by the $1.3 million expenditure, highlight the financial risks associated with extensive AI deployment. Additionally, reliance on proprietary models may pose challenges regarding sustainability and accessibility for smaller enterprises. The necessity of a robust infrastructure to support such operations cannot be overlooked, as it may not be feasible for all organizations to replicate Steinberger’s model.

Future Implications

The implications of Steinberger’s project extend beyond immediate financial considerations and into the broader landscape of software development. As organizations increasingly adopt AI-assisted coding tools, fundamental questions regarding the economics of AI development will arise. The divergence in pricing models, where traditional subscription frameworks may not align with the demands of autonomous agents, signals a need for new pricing structures that reflect the realities of AI usage. Furthermore, as advancements in AI technology continue, the potential for reduced inference costs and enhanced efficiencies could reshape the economic landscape, making these tools more accessible to a wider array of developers and organizations.
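
To make the token economics concrete, the figures quoted in the Context section ($1.3 million, 603 billion tokens, 7.6 million requests, 30 days, 100 instances) can be reduced to unit costs with simple division. The sketch below is illustrative only: the function name `unit_costs` and the dictionary keys are hypothetical, the results are blended averages that ignore any difference between input, cached, and output token rates, and the per-instance figure assumes the 100 instances were used evenly, which the summary does not state.

```python
# Figures as quoted in the summary; everything derived below is plain division.
TOTAL_COST_USD = 1_300_000          # reported API spend over the period
TOTAL_TOKENS = 603_000_000_000      # reported token count
TOTAL_REQUESTS = 7_600_000          # reported request count
DAYS = 30                           # reported duration
INSTANCES = 100                     # reported number of Codex instances


def unit_costs(cost_usd, tokens, requests, days, instances):
    """Return blended average unit costs (hypothetical helper, not from the source)."""
    return {
        "usd_per_million_tokens": cost_usd / tokens * 1_000_000,
        "usd_per_request": cost_usd / requests,
        "tokens_per_request": tokens / requests,
        "usd_per_day": cost_usd / days,
        # Assumes even usage across instances, which is an assumption.
        "usd_per_instance_per_day": cost_usd / days / instances,
    }


costs = unit_costs(TOTAL_COST_USD, TOTAL_TOKENS, TOTAL_REQUESTS, DAYS, INSTANCES)

for name, value in costs.items():
    print(f"{name}: {value:,.2f}")
```

Under these assumptions the blended rate works out to roughly $2.16 per million tokens, about $0.17 and about 79,000 tokens per request, and a little over $43,000 per day. The large average request size is consistent with agents repeatedly sending long contexts, though the summary does not break the token count down further.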

Contextual Background

The cultivation of heirloom tomatoes presents unique opportunities for farmers seeking to enhance their crop diversity and market appeal. Heirloom tomatoes, characterized by their open-pollinated nature and historical significance, offer a plethora of varieties ranging from slicers and beefsteaks to cherries. These varieties are not only cherished for their taste but also for their potential to adapt to different agricultural practices and climates. For AgriTech innovators, understanding the nuances of these heirloom varieties can lead to better breeding and cultivation practices, ultimately supporting sustainable agricultural methods.

Main Goals of Heirloom Tomato Cultivation

The primary goal of growing heirloom tomatoes is to preserve their genetic diversity while maximizing their yield and flavor potential. Achieving this involves selecting varieties that are well-suited to local climates and growing conditions. Farmers are encouraged to leverage their knowledge of seasonal lengths and climate tendencies to determine which varieties will thrive in their specific environments. This approach not only increases the likelihood of successful harvests but also enables breeders to develop unique varieties that meet market demands.

Advantages of Heirloom Tomato Cultivation

Diverse Flavor Profiles: Heirloom tomatoes are renowned for their rich flavors and unique textures, which can significantly enhance culinary applications. Varieties such as the Cherokee Purple and San Marzano are sought after for their exceptional taste, attracting customers who seek quality produce.

Genetic Diversity: The open-pollinated nature of heirloom tomatoes facilitates genetic diversity, which is essential for resilience against pests and diseases. This genetic variability allows growers to cultivate varieties that can withstand local environmental challenges.

Market Differentiation: Growing heirloom tomatoes can provide a competitive edge in the marketplace. Unique varieties can attract niche markets, thereby enhancing profitability and customer loyalty.

Breeding Opportunities: The adaptability of heirloom varieties offers breeding potential for farmers interested in developing new cultivars tailored to specific needs or preferences. This can lead to increased sustainability within farming practices.

Caveats and Limitations

While the benefits of heirloom tomato cultivation are substantial, there are limitations to consider. Heirloom varieties often have lower yields compared to hybrid counterparts, which can pose challenges for large-scale commercial farming. Additionally, these tomatoes may require specific growing conditions and increased management practices to thrive, necessitating a more hands-on approach from farmers.

Future Implications of AI in Heirloom Tomato Cultivation

As AgriTech continues to evolve, the integration of artificial intelligence (AI) into the agricultural sector promises to revolutionize heirloom tomato cultivation. AI technologies can enhance data collection regarding growth patterns, soil health, and climate impacts, allowing for more precise cultivation strategies. Predictive analytics can assist farmers in making informed decisions about which heirloom varieties to plant based on real-time environmental data. Furthermore, AI-driven genetic analysis could facilitate more effective breeding programs, leading to the development of heirloom varieties that are better adapted to changing climate conditions.

Conclusion

The cultivation of heirloom tomatoes stands as a testament to the intersection of tradition and innovation within agriculture. By understanding the advantages and limitations of these varieties, AgriTech innovators can play a pivotal role in preserving genetic diversity while enhancing crop resilience and market appeal.
The future of heirloom tomato cultivation looks promising, especially with the anticipated advancements in AI, which will further refine cultivation practices and contribute to sustainable agricultural development.

Contextual Overview

The integration of artificial intelligence (AI) into marketing has emerged as a transformative approach, enhancing the efficiency and effectiveness of marketing campaigns. The contemporary marketing landscape increasingly relies on AI technologies to facilitate data-driven strategies, allowing marketers to execute more personalized and impactful campaigns. According to industry surveys, a significant percentage of marketers consider AI software as a critical component of their overall data strategy. This post examines the essential AI marketing tools that can empower marketers to leverage AI capabilities, streamline their processes, and maximize their campaign effectiveness.

Main Goal and Achievements

The primary objective of employing AI marketing tools is to optimize marketing strategies by automating processes, personalizing content, and improving audience engagement. Marketers can achieve this through various AI-driven platforms that analyze consumer behavior, generate content, and enhance decision-making processes. By selecting and implementing the right tools, marketers can significantly reduce the time spent on mundane tasks, allowing them to focus on strategic initiatives that drive growth.

Advantages of AI Marketing Tools

1. **Enhanced Personalization**: AI tools enable marketers to tailor their messages and campaigns based on individual consumer behavior and preferences. For instance, tools like Personalize utilize real-time data analytics to identify the interests of contacts, allowing for targeted marketing efforts that resonate more effectively with the audience.

2. **Increased Efficiency**: AI tools such as Jasper Ai streamline content creation processes. By automating writing tasks, marketers can produce high-quality content in a fraction of the time it would traditionally take, thus enhancing productivity and reducing operational costs.

3. **Data-Driven Insights**: AI marketing platforms provide valuable insights through advanced analytics. Tools like Seventh Sense analyze consumer behavior patterns to optimize email marketing campaigns by determining the best times to send communications, ultimately improving open rates and engagement.

4. **Improved SEO Strategy**: Platforms like HubSpot SEO employ machine learning algorithms to refine content strategies, helping marketers identify and rank for relevant topics that drive organic traffic. This capability is vital for businesses aiming to enhance their online visibility in a competitive landscape.

5. **Scalability and Adaptability**: AI tools, such as Albert AI, offer scalable solutions that adapt to changing market conditions and consumer preferences. This adaptability is crucial in a rapidly evolving digital landscape, allowing brands to remain relevant and competitive.

6. **Cost-Effectiveness**: Many AI marketing tools are designed to provide enterprise-grade technology at a fraction of the cost, making advanced marketing capabilities accessible to small and medium-sized businesses. This democratization of technology enables a broader range of companies to engage in sophisticated marketing strategies.

Caveats and Limitations

While the advantages of AI marketing tools are substantial, there are important considerations to bear in mind. The accuracy of AI-driven insights is contingent upon the quality of data input; poor data can lead to misguided decisions. Additionally, the reliance on automated tools may result in a lack of human touch in marketing communications, potentially alienating certain audiences. It is essential for marketers to balance automation with personalized customer interaction to maintain authenticity in their brand messaging.

Future Implications of AI in Marketing

As AI technology continues to advance, its influence on marketing strategies will expand even further.
Emerging trends such as predictive analytics, natural language processing, and machine learning will empower marketers to anticipate consumer needs and preferences with unprecedented accuracy. Future developments may include more sophisticated AI tools that offer deeper insights into customer journeys, enabling businesses to craft even more personalized experiences. Moreover, as ethical considerations surrounding AI usage grow, there will be an increasing emphasis on transparency and data privacy. Marketers will need to navigate these challenges while leveraging AI capabilities to maintain consumer trust and loyalty.

In conclusion, the integration of AI marketing tools represents a pivotal shift in the marketing landscape, empowering practitioners to enhance their strategies through automation, personalization, and data-driven insights. By embracing these technologies, marketers can position themselves for sustained success in an increasingly competitive environment.