Introduction
Large language models (LLMs) have revolutionized the field of Natural Language Processing (NLP) by offering sophisticated conversational abilities and generating human-like text. However, their propensity for producing verbose and complex responses poses significant challenges for users and developers alike. This tendency is often linked to a phenomenon known as “hallucination,” wherein the model generates content that is inaccurate or fabricated. Thus, establishing effective guardrails to mitigate verbosity and hallucinations is essential for ensuring the reliability and clarity of LLM-generated outputs. This blog post explores strategies for measuring and managing verbosity in LLM responses, focusing on the integration of the Textstat Python library and the LangChain framework.

Understanding the Goals of Verbosity Management
The primary objective of managing verbosity in LLMs is to enhance the clarity and accuracy of generated text. By controlling the complexity of language used by the model, developers can help keep responses grounded in factual information, reducing the risk of hallucinations. This can be achieved by setting a complexity threshold, measured using metrics like the Automated Readability Index (ARI), that dictates the acceptable level of verbosity in model outputs. When a generated response exceeds this threshold, a re-prompting mechanism can be employed to elicit a more concise and straightforward answer from the model.

Advantages of Implementing Verbosity Checks
Improved Readability: By applying readability metrics, such as ARI, models can produce responses that are more accessible to a wider audience, including non-experts.
Reduced Hallucination Rates: Limiting verbosity can help ground responses in factual data, thereby reducing the incidence of hallucinations that often arise from overly elaborate language.
Enhanced User Experience: Concise and clear responses improve user satisfaction, making interactions with LLMs more efficient and effective.
Scalable Solutions: The integration of libraries like Textstat and frameworks such as LangChain allows for the automation of verbosity checks, making it easier to manage LLM outputs at scale.

Caveats and Limitations
Despite the advantages of implementing verbosity checks, several limitations must be acknowledged. The effectiveness of readability metrics varies across contexts; what is deemed “readable” for one audience may not be for another. Additionally, lightweight models, such as distilgpt2, may yield less robust summarizations than more advanced models designed specifically for text summarization. Consequently, while the ARI score may decrease, the quality of generated text may not meet all user expectations.

Future Implications for AI Development
As AI continues to advance, managing verbosity and hallucinations will become even more critical. Future developments may introduce more sophisticated metrics for assessing language quality, including semantic consistency checks and enhanced inference techniques. This will not only improve the reliability of LLMs but also expand their applications across diverse fields, from educational tools to customer service solutions. Consequently, researchers and developers must prioritize robust verbosity management strategies to harness the full potential of LLMs while maintaining ethical standards and user trust.

Disclaimer
The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly.
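The threshold-and-re-prompt idea described in the verbosity post above can be sketched roughly as follows. This is a minimal, dependency-free illustration, not the original author's implementation: it computes ARI from the published formula (4.71 × characters per word + 0.5 × words per sentence − 21.43) with deliberately crude tokenization, so scores may differ from what Textstat reports. The `generate` callable, the `max_ari` threshold of 10.0, the retry count, and the rewrite prompt are all assumptions made for illustration; in practice `generate` would wrap whatever model or chain is in use.

```python
import re
from typing import Callable


def automated_readability_index(text: str) -> float:
    """Approximate ARI using simple regex-based word and sentence counts."""
    words = re.findall(r"[A-Za-z0-9]+", text)
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    if not words or not sentences:
        return 0.0
    chars = sum(len(w) for w in words)
    return 4.71 * chars / len(words) + 0.5 * len(words) / len(sentences) - 21.43


def concise_answer(
    prompt: str,
    generate: Callable[[str], str],  # hypothetical: any function mapping a prompt to text
    max_ari: float = 10.0,           # assumed threshold; tune for the target audience
    max_retries: int = 2,            # assumed cap so the loop always terminates
) -> str:
    """Generate an answer and re-prompt while its ARI exceeds the threshold."""
    answer = generate(prompt)
    for _ in range(max_retries):
        if automated_readability_index(answer) <= max_ari:
            break
        # Ask the model to simplify its own previous output.
        answer = generate(
            "Rewrite the following in short, simple sentences:\n\n" + answer
        )
    return answer
```

Capping the retries matters because a small model may never get under the threshold; in that case the last attempt is returned as-is, which matches the caveat above that a lower ARI does not guarantee a better answer.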

Context: Recent Developments in Large Language Models (LLMs)
The landscape of Large Language Models (LLMs) has changed significantly over the past six months, particularly since the November 2025 inflection point, which brought substantial advances in model capabilities, especially in coding tasks. During this period the title of “best” model has changed hands repeatedly among prominent AI providers, including OpenAI, Anthropic, and Google, reflecting a rapidly evolving competitive environment. The introduction of coding agents that leverage reinforcement learning has notably improved the practical applications of these models in software development, presenting new opportunities and challenges for data engineers and analysts.

Main Goal of Advancements in LLMs
The primary objective of these advancements in LLM technology is to enhance the efficiency and accuracy of coding processes, thereby transforming how software development is approached. By leveraging the capabilities of advanced LLMs, data engineers can automate routine coding tasks, reduce errors, and improve productivity. Achieving this involves integrating these models into existing workflows and continuously refining their training through user feedback and real-world application scenarios.

Structured List of Advantages
Enhanced Coding Accuracy: Recent models exhibit significantly improved accuracy in generating code, as evidenced by successful implementations in complex coding scenarios.
Increased Productivity: The automation of simple and repetitive coding tasks allows engineers to focus on more complex and creative aspects of software development.
Rapid Model Development: Competition among AI providers has accelerated innovation, resulting in models that are not only more powerful but also faster and more efficient.
Accessibility of Powerful Models: The emergence of models that can run on standard laptops democratizes access to advanced AI tools, allowing smaller teams to leverage powerful technology without significant investment in infrastructure.
Continuous Improvement: The iterative development process of these models means they keep evolving based on user experiences and feedback, leading to better performance over time.

However, it is essential to acknowledge certain caveats, such as the potential for models to produce incorrect outputs or the need for substantial computational resources for training and deployment. Additionally, the reliance on AI may introduce new challenges related to code quality and maintainability.

Future Implications of AI Developments in Data Analytics and Insights
The ongoing advancements in AI and LLMs are poised to have profound implications for the field of data analytics and insights. As these models become more sophisticated, they will facilitate more complex data analysis tasks, enabling data engineers to derive insights faster and with greater accuracy. The integration of AI into data workflows could lead to a paradigm shift where data engineers focus not only on data management and processing but also on strategic decision-making based on AI-driven insights. Furthermore, the growing capabilities of local models suggest that organizations will increasingly rely on in-house solutions, potentially reducing the need for cloud-based resources and enhancing data security.

In conclusion, the developments in LLMs over the past six months represent a significant leap forward for the data analytics industry. As these technologies continue to evolve, data engineers must remain adaptable, embracing new tools and methodologies to harness the full potential of AI in their work.

Contextual Overview
In the realm of cloud gaming, the convergence of technology and user experience allows players to access high-quality games without the burden of extensive hardware requirements. The recent launch of Subnautica 2 on GeForce NOW exemplifies this trend, offering a seamless transition into a captivating alien ocean environment. This integration of cloud gaming platforms signifies a transformative shift, enabling users to engage with new titles concurrently with their official release. Notably, the introduction of 11 new games this week, along with limited-time events such as the HITMAN World of Assassination rewards, further emphasizes GeForce NOW’s commitment to enhancing gaming accessibility across various devices.

Main Objective and Its Achievement
The primary objective of the current trend in cloud gaming is to democratize access to gaming experiences by eliminating the need for high-end hardware and lengthy installations. This goal can be achieved through robust cloud infrastructure, which facilitates instant access to games with high fidelity and minimal latency. By leveraging such technologies, platforms like GeForce NOW enable users to engage with their favorite titles immediately, thus enhancing user satisfaction and retention.

Advantages of Cloud Gaming Platforms
Accessibility: Cloud gaming platforms allow players to access games from multiple devices, including smartphones, tablets, and low-spec PCs. This flexibility broadens the audience for gaming titles, particularly for those without access to expensive hardware.
Instant Play: Players can dive straight into games without waiting for downloads or updates, significantly enhancing user experience and engagement rates.
Cost-Effectiveness: Users can enjoy high-end gaming experiences without the associated costs of purchasing and maintaining advanced hardware. This model encourages a more extensive user base, as seen with the diverse offerings on GeForce NOW.
Regular Updates: Cloud platforms often provide automatic game updates, ensuring that players always have access to the latest features and improvements without manual intervention.

Despite these advantages, it is crucial to acknowledge potential limitations, such as internet connectivity requirements and varying performance based on bandwidth. These factors can impact the overall gaming experience, particularly in regions with less reliable internet infrastructure.

Future Implications of AI in Cloud Gaming
As technology advances, the integration of Artificial Intelligence (AI) within cloud gaming platforms is poised to redefine user interactions and gaming experiences. Future developments may include personalized gaming environments tailored to individual preferences, enhanced matchmaking algorithms, and improved in-game AI behaviors that adapt to player styles. Such innovations are likely to foster greater immersion and engagement, further solidifying the role of cloud gaming in the broader gaming ecosystem.

Contextual Overview
The transformative landscape of legal services is largely influenced by the evolving economics of law firm talent, driven by the dual forces of flexible legal staffing and the increasing pressures exerted by artificial intelligence (AI). This shift is underscored by insights from prominent figures in the legal sector, such as Alex Su and Andy Chagui of Latitude. Their discussion highlights a future-oriented strategy that accommodates rising client expectations for speed, value, and technological integration. As law firms adapt to these changes, they increasingly rely on experienced attorneys who prefer engagement-based work over traditional full-time roles. This model not only addresses the immediate staffing needs of law firms and corporate legal departments but also represents a significant evolution in legal service delivery.

Main Goal and Achievement
The primary goal elucidated in this discourse is to adapt legal staffing models to meet the changing demands of the legal market while leveraging AI technologies effectively. Achieving this goal involves embracing flexible legal talent solutions that allow firms to respond dynamically to fluctuating workloads and client expectations. By employing experienced attorneys who are adept at navigating various legal challenges without the overhead of permanent hires, firms can optimize their operational efficiency while maintaining quality service.

Advantages of Flexible Legal Talent
1. **Enhanced Operational Flexibility**: The rise of remote work and the acceptance of distributed teams enable law firms to engage seasoned attorneys for specific projects without long-term commitments. This flexibility allows firms to scale their workforce in response to fluctuating demand.
2. **Access to Experienced Talent**: Many attorneys involved in flexible staffing possess 10 or more years of experience, requiring less supervision and enabling them to contribute to projects almost immediately. This contrasts sharply with the traditional model that often emphasizes junior associates who may require more guidance.
3. **Effective Integration of AI**: As firms adopt AI tools, the need for subject matter experts becomes paramount. Flexible legal talent can assist in training these AI systems and reviewing AI-generated outputs, thereby alleviating the burden on associates who are already pressed for billable hours.
4. **Cost-Effective Resource Allocation**: The model allows firms to manage costs effectively by utilizing experienced attorneys for non-billable tasks that would otherwise be deprioritized by associates focused on billable hours. This leads to better resource allocation and improved project management.
5. **New Career Pathways for Attorneys**: The flexible model supports attorneys seeking career paths outside of the traditional partnership track. By working on diverse projects, attorneys can gain exposure to new areas of law and develop specialized skills that enhance their marketability.

Caveats and Limitations
Despite its advantages, the flexible staffing model is not without its challenges. Law firms must ensure that the quality of work remains high, necessitating rigorous vetting processes for flexible talent. Additionally, the reliance on non-permanent staff can create continuity issues, particularly for long-term projects where institutional knowledge is crucial. Firms must strike a balance between using flexible talent and maintaining a core group of permanent attorneys to ensure stability and cohesion within teams.

Future Implications of AI in Legal Services
The integration of AI into legal workflows is poised to reshape the landscape of legal services significantly. As foundational AI models advance, they are likely to take on more complex tasks traditionally performed by junior associates. This potential shift raises critical questions regarding the future roles of attorneys in law firms, particularly concerning the necessity for entry-level positions. While AI can enhance efficiency, it may also lead to a reduced demand for certain types of legal work, necessitating a reevaluation of hiring practices and career trajectories within the profession.

In conclusion, the legal industry’s evolution towards flexible staffing models, coupled with the integration of AI, presents both opportunities and challenges. Legal professionals must remain adaptive and forward-thinking to leverage these changes effectively while ensuring that the core values of legal practice, such as trust, accountability, and quality, are upheld amidst this transformation.

Context of AI and Data Sovereignty
In contemporary discussions surrounding artificial intelligence (AI) and its integration into business frameworks, the concept of data sovereignty has gained significant traction. As Kevin Dallas, CEO of EDB, asserts, “Data is really a new currency; it’s the IP for many companies.” This sentiment encapsulates growing concerns among enterprises about safeguarding intellectual property (IP) when deploying AI-enhanced applications that rely on cloud-based large language models. The pivotal question is whether organizations are jeopardizing their IP and competitive advantage.

The emergence of AI and data sovereignty is driven by the need for companies to assert control over their data and AI systems, moving away from dependency on centralized providers. Internal data from EDB indicates that 70% of global executives recognize the imperative for a sovereign data and AI platform as a requisite for success.

The dialogue surrounding AI sovereignty has escalated into a global policy imperative. Industry leaders, such as Jensen Huang, CEO of NVIDIA, have emphasized the necessity for nations to build their own AI infrastructures, leveraging their unique linguistic and cultural assets to develop and refine AI solutions. This growing movement signals a fundamental shift in how enterprises and nations approach AI development and data management.

Main Goal and Achievement Strategies
The primary goal articulated in this discourse is to establish AI and data sovereignty, enabling organizations to reclaim control over their data and AI systems. This can be achieved through a multi-faceted approach:
1. **Investing in Localized Infrastructure**: Organizations must prioritize the development of localized AI platforms, diminishing reliance on external cloud providers.
2. **Encouraging Policy Dialogues**: Engaging in conversations at national and international forums can help shape policies that support data sovereignty.
3. **Fostering Collaboration**: Enterprises can collaborate with local governments and technology firms to create a robust ecosystem that supports AI development tailored to specific local needs.

By adopting these strategies, organizations can mitigate risks associated with data loss and maintain a competitive edge in their respective markets.

Advantages of AI and Data Sovereignty
The pursuit of AI and data sovereignty presents numerous advantages, including:
1. **Enhanced Security and Privacy**: Controlling data locally reduces the risks associated with data breaches and unauthorized access, fostering a secure environment for sensitive information.
2. **Intellectual Property Protection**: By managing their data and AI systems, organizations can safeguard their intellectual property more effectively, ensuring that proprietary data remains within their control.
3. **Cultural Relevance**: Developing localized AI solutions allows organizations to create applications that resonate more profoundly with specific cultural and linguistic contexts, enhancing user engagement and satisfaction.
4. **Strategic Independence**: Establishing sovereignty over data and AI systems empowers organizations to make independent decisions without external constraints, thus fostering innovation and agility.

However, it is crucial to acknowledge potential limitations, such as the initial costs associated with building localized infrastructures and the need for specialized talent to manage these systems.

Future Implications of AI Developments
As the landscape of AI continues to evolve, the implications for data sovereignty will become increasingly pronounced. The advent of more sophisticated AI technologies, such as autonomous systems, will necessitate robust frameworks for data governance and control. Future developments may include:
1. **Regulatory Advances**: Governments may introduce stricter regulations to ensure that organizations maintain sovereignty over their data, leading to more stringent compliance requirements.
2. **International Collaboration**: As AI becomes more integral to global economies, international partnerships may emerge to share best practices in data sovereignty, fostering a cooperative approach to AI governance.
3. **Technological Innovations**: Advances in decentralized technologies, such as blockchain, could offer new solutions for maintaining data sovereignty, enabling organizations to secure and manage their data more effectively.

In conclusion, as enterprises navigate the complexities of AI integration, the pursuit of data sovereignty will remain a critical priority, shaping the future of AI development and organizational strategy.

Contextual Overview of Recent Linux Kernel Vulnerabilities
In recent weeks, the Linux operating system has been plagued by a series of significant kernel vulnerabilities, culminating in a newly identified flaw dubbed “ssh-keysign-pwn.” This vulnerability, discovered by security researchers at Qualys, enables unauthorized users to gain access to highly sensitive files, including Secure Shell (SSH) host private keys and shadow password files. The implications of this flaw are profound, as it does not merely represent a singular security breach but rather a pattern of systemic vulnerabilities within the Linux kernel that has emerged over a short span of time. Such vulnerabilities underscore the urgent need for robust cybersecurity measures, particularly as they pertain to SSH authentication mechanisms.

Main Goal and Achievement Strategies
The primary objective highlighted in discussions surrounding the “ssh-keysign-pwn” vulnerability is the urgent necessity for system administrators and cybersecurity experts to implement timely security patches and updates. This can be achieved through immediate upgrading to Linux kernel versions that eliminate the vulnerability, specifically those released after May 14, 2026. Failure to address this flaw can expose systems to significant risks, including unauthorized access and potential data breaches. By actively monitoring operating environments for updates and deploying patches as soon as they become available, organizations can significantly mitigate their risk profile.

Advantages of Addressing Kernel Vulnerabilities
Enhanced Security Posture: Immediate patching of vulnerabilities protects sensitive data from unauthorized access and exploitation, thereby strengthening an organization’s overall security framework.
Improved System Integrity: Regular updates and patches reinforce the integrity of the operating system, ensuring that systems function as intended without the risk of exploitation by malicious actors.
Trust and Compliance: Maintaining updated security protocols helps organizations comply with regulatory requirements and fosters trust among users and stakeholders.
Proactive Threat Mitigation: By addressing vulnerabilities swiftly, organizations can prevent potential breaches before they occur, thereby reducing the overall impact of security incidents.

Future Implications of AI in Cybersecurity
The rapid advancements in artificial intelligence (AI) are poised to significantly impact the cybersecurity landscape, particularly in relation to vulnerability management in operating systems like Linux. AI-driven solutions can enhance threat detection and response capabilities, enabling organizations to identify vulnerabilities like “ssh-keysign-pwn” more swiftly and accurately. Furthermore, AI can facilitate automated patch management, ensuring that systems are updated with the latest security measures without the need for extensive manual intervention.

However, the integration of AI into cybersecurity also raises critical challenges, including the potential for adversaries to leverage AI technologies for malicious purposes. As AI continues to evolve, cybersecurity experts must remain vigilant, adapting their strategies to address emerging threats while harnessing the benefits that AI offers in terms of efficiency and effectiveness in securing digital environments.

Introduction
The ongoing legal proceedings surrounding the Musk v. Altman case have illuminated critical issues within the artificial intelligence (AI) sector, particularly concerning the ethical and operational frameworks of organizations like OpenAI. As AI technologies continue to permeate various industries, including finance and fintech, the implications of this trial extend beyond the courtroom into the practices and responsibilities of financial professionals. This blog post aims to contextualize the significance of the Musk v. Altman case, analyze its implications for the AI landscape in finance, and elucidate how financial professionals can navigate these emerging challenges.

Context and Significance of the Musk v. Altman Case
The Musk v. Altman trial underscores a pivotal moment in the evolution of AI organizations, particularly those transitioning from nonprofit to for-profit models. Elon Musk’s allegations against OpenAI, including breaches of fiduciary duty and failure to adhere to nonprofit principles, raise fundamental questions about the governance and accountability of AI entities. As the jury prepares to deliberate, the outcome will serve as a benchmark for the legal expectations placed on AI organizations, especially those involved in financial applications.

Main Goals and Achievements
The primary goal of the Musk v. Altman case is to establish a legal precedent regarding the responsibilities of AI companies in maintaining ethical practices while pursuing profitability. Achieving this goal entails determining whether OpenAI’s transition to a for-profit model constituted a breach of trust with its stakeholders, particularly its early supporters like Musk. The case, therefore, aims not only to address Musk’s claims but also to set a standard for future AI ventures, particularly in how they balance innovation with ethical obligations.

Advantages for Financial Professionals
Enhanced Accountability: The legal outcomes of the Musk v. Altman case could lead to more stringent regulations governing AI in finance, enhancing accountability and ethical standards within the industry. This development could foster greater trust among clients and investors.
Improved Compliance Strategies: Financial professionals may benefit from clearer compliance frameworks that emerge from the case, enabling them to align their practices with evolving legal standards in AI application.
Guidance on Ethical AI Use: The trial highlights the need for ethical considerations in AI deployment, guiding financial professionals on how to responsibly integrate AI technologies into their strategies and operations.
Informed Decision-Making: Understanding the implications of the trial can empower financial professionals to make informed decisions about partnerships with AI firms, ensuring alignment with ethical practices and regulatory compliance.

Caveats and Limitations
While the potential advantages are significant, it is essential to recognize the limitations inherent in the legal outcomes of the Musk v. Altman case. The advisory nature of the jury’s verdict means that ultimate decisions may still vary based on judicial interpretations. Additionally, the rapidly evolving nature of AI technologies may outpace the regulatory frameworks developed in response to this case, necessitating ongoing adaptation by financial professionals.

Future Implications of AI in Finance
The implications of ongoing AI developments in finance are profound. As AI technologies continue to advance, financial institutions will increasingly rely on AI for data analysis, risk assessment, and compliance monitoring. However, the Musk v. Altman case serves as a cautionary tale, emphasizing the need for ethical governance and transparent operational practices. Future developments may lead to more robust regulatory frameworks that ensure AI technologies are deployed responsibly, fostering innovation while safeguarding stakeholder interests.

Conclusion
The Musk v. Altman trial exemplifies a critical intersection between legal accountability and technological innovation within the AI sector. As the jury deliberates and the implications unfold, financial professionals must remain vigilant and adaptive to the evolving landscape shaped by these proceedings. By understanding the lessons from this case, they can better navigate the challenges and opportunities presented by AI technologies in finance.

Introduction

The rapid advancements in artificial intelligence (AI) and machine learning (ML) technologies are reshaping various industries, including Computer Vision and Image Processing. The recent workshop titled “Building with AI 2026” highlighted the potential of utilizing tools like Gemini CLI in conjunction with Model Context Protocol (MCP) to streamline development processes. This blog post explores the implications of these technologies for Vision Scientists, detailing the goals, advantages, and future trends in the field.

Main Goal and Achievement

The primary goal discussed in the workshop is to enhance the efficiency of deploying AI models in practical applications, specifically in creating bots and applications that can interact with users effectively. This can be achieved by leveraging Gemini CLI, which allows developers to articulate their needs in natural language, thus simplifying the deployment process. By employing MCPs, developers can access Google’s extensive resources without grappling with complex command-line interfaces, enabling a more intuitive experience.

Advantages of Using Gemini CLI and MCPs

Streamlined Development Process: The integration of Gemini CLI with official MCPs allows for a more cohesive development workflow. Developers can directly access Google’s APIs and resources, reducing the time spent on setup and configuration.

Enhanced Accessibility: The natural language interface of Gemini CLI lowers the barrier to entry for new developers. This democratizes access to advanced AI tools, allowing scientists and engineers from various backgrounds to contribute to projects without needing extensive command-line expertise.

Official Documentation Access: The Google Developer Knowledge MCP provides verified information directly from official documentation. This minimizes the risks associated with outdated or misleading information encountered during web searches, ensuring that developers are working with the most current data.

Reducing Errors: By facilitating a direct line of communication between developers and the tools they use, Gemini CLI enables the identification and resolution of errors in real-time. This iterative feedback loop is crucial for refining AI models and ensuring robust performance.

Reproducibility of Results: The structured workflows established by deploying models through Gemini CLI ensure that results can be consistently reproduced. This is particularly important in scientific research, where reproducibility is a cornerstone of valid experimentation.

Caveats and Limitations

While the advancements presented are promising, it is essential to recognize certain limitations. The reliance on cloud-based services necessitates a stable internet connection and may incur costs associated with API usage and data storage. Additionally, as the tools evolve, ongoing updates and changes in APIs may require developers to adapt their workflows continuously.

Future Implications

The integration of AI into Computer Vision and Image Processing is expected to accelerate in the coming years. With continuous improvements in tools like Gemini CLI and the growing adoption of MCPs, Vision Scientists will be empowered to create more sophisticated applications that leverage real-time data processing and user interaction. Future iterations of these technologies may further simplify the development process, enabling scientists to focus more on innovative research rather than the complexities of deployment.

Conclusion

In conclusion, the workshop on Gemini CLI and MCPs illustrates the transformative potential of AI technologies in the realm of Computer Vision and Image Processing. By simplifying development workflows, enhancing accessibility, and ensuring the use of reliable resources, these tools present significant advantages for Vision Scientists. As the technology continues to evolve, it holds the promise of fostering greater innovation and efficiency in the field.

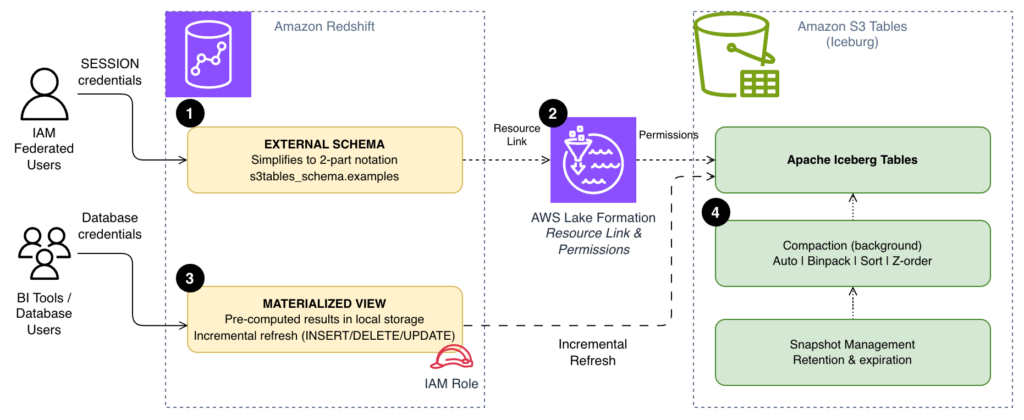

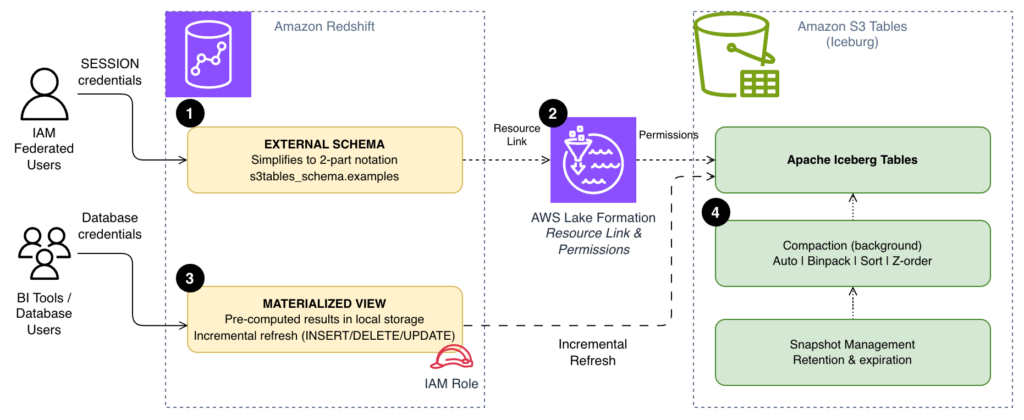

Contextual Overview

The integration of Amazon S3 Tables and Amazon Redshift presents a robust framework for executing analytical workloads, particularly when utilizing Apache Iceberg tables. As query volumes surge, inefficiencies become magnified, most visibly for recurrent queries. These include frequent dashboard refreshes or repetitive data joins executed by analysts throughout the day, which necessitate scanning data directly from Amazon Simple Storage Service (Amazon S3) each time. Furthermore, reliance on fully qualified three-part table references can introduce unnecessary complexity, complicating interactions for business intelligence (BI) tools and end users accustomed to more straightforward SQL syntax. Additionally, without appropriate data file organization in S3 Tables, queries may read more files than necessary. By addressing these critical areas, one can significantly enhance the speed, simplicity, and cost-effectiveness of S3 Tables queries in Amazon Redshift, supporting both recurring dashboards and large-scale ad hoc analyses.

Main Goal and Achievable Outcomes

The primary objective of optimizing S3 Tables queries with Amazon Redshift is to enhance performance and usability through three key strategies: simplifying query syntax with external schemas, utilizing materialized views for storing pre-computed results, and implementing compaction strategies that align data file organization with query patterns. Achieving this optimization can lead to improved query execution times, increased accessibility for end users, and reduced operational costs. By employing these methodologies, organizations can ensure that data retrieval processes are streamlined and more efficient, thus fostering an environment conducive to data-driven decision-making.
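The third strategy, aligning data file organization with query patterns, can be illustrated with a small toy model. The sketch below is not how Amazon Redshift or S3 Tables implement compaction; it assumes, purely for illustration, that each data file carries minimum and maximum values for one column and that a query can skip any file whose range does not overlap its filter. The `DataFile` class, the helper functions, and the sample keys are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataFile:
    """Toy stand-in for a data file with min/max statistics on one column."""
    min_key: int
    max_key: int


def write_files(keys, rows_per_file):
    """Pack keys into files in the given order and record each file's key range."""
    files = []
    for start in range(0, len(keys), rows_per_file):
        chunk = keys[start:start + rows_per_file]
        files.append(DataFile(min(chunk), max(chunk)))
    return files


def files_scanned(files, lo, hi):
    """Count files whose [min_key, max_key] range overlaps the filter [lo, hi]."""
    return sum(1 for f in files if f.min_key <= hi and f.max_key >= lo)


# Hypothetical data: 12 rows keyed by day number, arriving interleaved.
arrival_order = [1, 7, 4, 10, 2, 8, 5, 11, 3, 9, 6, 12]

# Files as written on arrival versus files rewritten in sorted order.
before = write_files(arrival_order, rows_per_file=3)
after = write_files(sorted(arrival_order), rows_per_file=3)

# A dashboard-style filter on days 1 through 3.
scanned_before = files_scanned(before, lo=1, hi=3)
scanned_after = files_scanned(after, lo=1, hi=3)
print(scanned_before, scanned_after)  # prints "3 1"
```

In this toy setup the same filter touches three of four files before the rewrite and one of four afterwards, which is the effect the text describes as reading fewer files per query. Real gains depend on the actual data layout and on which columns queries filter by.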
Advantages of Optimization

Simplified Query Syntax: By transitioning from three-part to two-part notation via external schemas, query execution becomes less cumbersome for users, particularly in BI tools and application code.

Enhanced Performance with Materialized Views: Materialized views allow for the local storage of pre-computed results, significantly reducing the frequency and volume of data scans against S3, which can lead to substantial performance gains.

Cost-Efficiency Through Compaction: Configuring compaction strategies to fit specific query patterns minimizes the number of files read during queries, thereby optimizing resource utilization and potentially lowering associated costs.

Incremental Refresh Capabilities: Redshift’s support for incremental refresh of materialized views enables handling of large datasets efficiently, as only changed rows are processed, reducing both time and computational expenses.

Limitations and Caveats

Despite the numerous advantages, there are important considerations to note. For instance, while materialized views provide speed advantages, they also require thoughtful management to avoid excessive storage costs. It is essential to balance the frequency of materialized view refreshes with the costs associated with storing pre-computed data. Additionally, the effectiveness of compaction strategies may vary based on the specific patterns of query access, necessitating careful monitoring and potential adjustments over time.

Future Implications

The landscape of data engineering is poised for significant transformation, particularly with the rise of artificial intelligence (AI). As AI technologies continue to evolve, they will likely enhance the capabilities of data processing and analytics platforms, making them more intelligent and adaptive. For instance, AI-driven optimization algorithms could automate the selection of compaction strategies based on real-time query patterns, further enhancing performance without manual intervention. Additionally, machine learning could be employed to predict query loads and optimize resource allocation dynamically, ensuring that data retrieval processes are not only efficient but also scalable. As these technologies develop, they will undoubtedly shape the future of data engineering, offering new avenues for efficiency and insight extraction from vast datasets.

Context of Generative Engine Optimization

The landscape of brand discovery is undergoing a rapid transformation, influenced significantly by advancements in artificial intelligence (AI). Marketers are increasingly recognizing that consumer behavior is shifting towards channels where brands either gain visibility through AI-generated responses or risk becoming invisible. This phenomenon is encapsulated in the concept of Generative Engine Optimization (GEO), which emphasizes the need for marketers to structure their content and digital presence so that AI platforms, such as ChatGPT, Google AI, and others, can effectively understand, cite, and recommend their brands. Unlike traditional Search Engine Optimization (SEO), which primarily relies on link-based rankings, GEO prioritizes structured data and machine-readable content, enhancing the efficacy of existing SEO strategies rather than replacing them.

Main Goals of Generative Engine Optimization

The principal aim of GEO is to secure a tangible and measurable advantage in brand visibility within AI-generated content. This can be achieved by adopting strategies that enhance the machine-readability of digital assets, thereby ensuring that brands are cited in responses generated by AI platforms. Marketers are encouraged to embrace GEO by integrating structured data, utilizing schema markup, and focusing on content formats that align with user queries. By doing so, they can effectively navigate the complexities of AI visibility and leverage it to drive higher-quality traffic to their websites.

Advantages of Generative Engine Optimization

Enhanced Visibility in AI Responses: GEO allows brands to appear directly within AI-generated answers, significantly increasing the chances of engagement at a moment of high purchase intent.

Higher-Quality Leads: Traffic driven by AI referrals tends to exhibit stronger purchase intent, resulting in improved conversion rates compared to traditional organic search. Studies have shown that leads from AI interactions convert at rates up to 4.4 times higher.

Increased Brand Authority: Being cited by AI as a trusted source enhances a brand’s perceived authority, which can lead to greater consumer trust and loyalty.

Compound Authority Across Platforms: Successful citations in one AI platform can boost visibility across multiple platforms, creating a cycle of increased authority and visibility.

Measurable Performance Metrics: GEO introduces new key performance indicators (KPIs) that allow marketers to assess their AI visibility effectively, such as citation frequency and share of voice in AI-generated content.

Optimized Content ROI: Existing content can be repurposed and optimized for GEO, allowing marketers to unlock additional visibility without significant new investment.

Caveats and Limitations

While the benefits of GEO are compelling, several challenges must be navigated. The complexity of tracking AI visibility can pose a significant barrier, as traditional metrics may not accurately reflect performance in this new landscape. Additionally, there are risks associated with AI hallucinations, where the AI may generate inaccurate or misleading information about the brand. Furthermore, implementing structured data and schema markup can require technical expertise that some marketing teams may lack.

Future Implications of AI Developments on Marketing

The ongoing evolution of AI will undoubtedly shape the future of marketing strategies. As AI technologies become more sophisticated, the demand for structured and machine-readable content will increase. Marketers who proactively adopt GEO strategies will not only enhance their brand visibility but also position themselves to adapt to future trends in consumer behavior and technology. The integration of AI into the marketing ecosystem will likely lead to more personalized and contextually relevant advertising, thereby fostering deeper connections between brands and consumers.
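The structured data and schema markup mentioned above can be made concrete with a short sketch. The example below builds a schema.org `FAQPage` object as JSON-LD and wraps it in the script tag commonly used to embed such markup in a page. It is a minimal illustration under stated assumptions, not a recommended GEO implementation: the helper names `faq_jsonld` and `as_script_tag` and the "Example Co." content are hypothetical, and whether any given AI platform reads this markup is not something the source confirms.

```python
import json


def faq_jsonld(questions_and_answers):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in questions_and_answers
        ],
    }


def as_script_tag(data):
    """Wrap the JSON-LD in the script tag used to embed it in an HTML page."""
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical brand content, for illustration only.
markup = faq_jsonld([
    ("What does Example Co. sell?", "Example Co. sells refurbished laptops."),
])
print(as_script_tag(markup))
```

Generating the markup from the same question and answer pairs that appear on the page keeps the machine-readable version in step with the visible content, which is one way to approach the "content formats that align with user queries" point in the text.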