Introduction

The evaluation of Natural Language Processing (NLP) models is an essential aspect of the development cycle, particularly in the context of Natural Language Understanding (NLU). In this discourse, we will explore the foundational evaluation metrics that serve as cornerstones in assessing the efficacy of NLP models. Often, practitioners encounter challenges in comprehending the myriad definitions and formulas associated with these metrics, leading to a superficial understanding rather than a robust conceptual framework.

Main Goal

The primary objective of this discussion is to cultivate a profound understanding of evaluation metrics prior to delving into the intricacies of their mathematical representations. This foundational knowledge enables practitioners to discern the nuances of model performance, particularly in relation to the limitations of overall accuracy as a standalone metric.

Advantages of Understanding Evaluation Metrics

Intuitive Comprehension: Developing an intuitive grasp of evaluation metrics enables practitioners to assess model performance effectively. This understanding allows for more informed decision-making regarding model selection and optimization.

Identification of Misleading Metrics: A critical examination of overall accuracy reveals its potential to misrepresent model performance, especially in imbalanced datasets. For instance, a model achieving high accuracy may still fail to capture critical instances relevant to specific applications.

Connection to Advanced Metrics: By grasping fundamental concepts, practitioners can better relate advanced metrics such as BLEU and ROUGE to core evaluation principles, enhancing their analytical capabilities.
Application in Real-World Scenarios: An understanding of evaluation metrics equips practitioners to tailor their approaches to specific contexts, such as hate speech detection, where the emphasis on catching harmful content outweighs the need for perfect classification of neutral or positive comments.

Caveats and Limitations

While a robust understanding of evaluation metrics offers numerous advantages, it is imperative to acknowledge certain limitations. For instance, metrics such as precision and recall may not fully encapsulate the complexities of particular NLP tasks, leading to a necessity for nuanced evaluation strategies. Additionally, the reliance on certain metrics may inadvertently prioritize specific aspects of performance at the expense of others, underscoring the importance of a holistic evaluation approach.

Future Implications

Looking ahead, advancements in artificial intelligence will likely reshape the landscape of evaluation metrics within NLP. As models become increasingly sophisticated, the need for adaptive and context-sensitive evaluation strategies will intensify. Developments in explainable AI (XAI) may further enhance the interpretability of model outputs, allowing practitioners to evaluate not only the accuracy of predictions but also the rationale behind them. Moreover, the integration of multimodal data sources will necessitate the evolution of existing metrics to encompass broader performance criteria. As NLU systems become integral to various applications, from conversational agents to information retrieval, the refinement of evaluation methodologies will play a pivotal role in ensuring their reliability and effectiveness.

Conclusion

In conclusion, comprehending evaluation metrics in NLP is not merely an academic exercise; it is a vital component of developing effective NLU systems.
By fostering an intuitive understanding of these metrics, practitioners can navigate the complexities of model evaluation, ensuring that their methodologies align with real-world applications and user needs. As the field continues to evolve, ongoing education and adaptation in evaluation strategies will be crucial to harnessing the full potential of NLP technologies.

Disclaimer

The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly.
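The point about accuracy on imbalanced data in the evaluation-metrics summary can be made concrete with a small sketch. The labels and both "models" below are hypothetical, invented only for illustration (95 neutral comments and 5 hateful ones); the function names are likewise assumptions, not part of any library.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Count true/false positives and negatives for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    return tp, fp, fn, tn


def evaluate(y_true, y_pred, positive=1):
    """Return accuracy, precision and recall, using 0.0 when a ratio is undefined."""
    tp, fp, fn, tn = confusion_counts(y_true, y_pred, positive)
    total = tp + fp + fn + tn
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


# Hypothetical data: 95 neutral comments (0) followed by 5 hateful ones (1).
y_true = [0] * 95 + [1] * 5

# Hypothetical model A: labels every comment as neutral.
always_neutral = [0] * 100

# Hypothetical model B: 10 false alarms on neutral comments, catches 4 of 5 hateful ones.
cautious = [1] * 10 + [0] * 85 + [1] * 4 + [0] * 1

baseline = evaluate(y_true, always_neutral)
flagging = evaluate(y_true, cautious)
print(baseline)
print(flagging)
```

Under these made-up numbers, model A scores higher on accuracy while catching none of the harmful comments, and model B trades some accuracy for a recall of 0.8. That is the trade-off the hate speech example describes.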

Introduction

In contemporary business environments, change is often met with skepticism, as observed in the adage, “When a company makes a change, it’s probably not going to benefit you.” This sentiment underscores a critical aspect of organizational dynamics: understanding the implications of changes, particularly in pricing strategies. The example of McDonald’s rounding cash change to the nearest five cents serves as a case study in the intersection of consumer psychology, pricing strategies, and data analytics. This analysis aims to elucidate the implications of such changes for data analytics professionals, particularly data engineers, and explore the broader effects of these changes in the industry.

Understanding the Main Goal

The primary objective of the original discussion revolves around analyzing the impact of pricing changes on consumer behavior and corporate profits. This can be achieved through comprehensive data analysis that scrutinizes transaction data to determine the effects of rounding rules on overall revenue. By employing robust analytical methods, data engineers can uncover patterns that inform strategic business decisions and optimize pricing models.

Advantages of Data-Driven Pricing Changes

The exploration of McDonald’s rounding practices reveals several advantages, including:

1. **Consumer Perception Management**: Pricing strategies that utilize psychological pricing, such as ending prices in .99, create a perception of lower costs. This tactic can enhance consumer attraction and retention.
2. **Revenue Optimization**: The analysis indicates a slight positive rounding difference of 0.04 cents per transaction, suggesting that while individual gains may be minimal, cumulative effects across millions of transactions can yield significant financial benefits for corporations.
3. **Data-Driven Insights**: By leveraging aggregated transaction data, data engineers can identify pricing patterns and consumer behavior trends. This evidence-based approach can lead to more informed decision-making and the development of targeted marketing strategies.
4. **Adaptability to Local Markets**: The analysis highlights the variability in meal pricing and sales tax rates across different states. Data engineers can tailor pricing strategies that accommodate regional differences, thereby maximizing potential revenue streams.

Caveats and Limitations

While the insights derived from analyzing rounding practices present clear advantages, several limitations must be acknowledged:

- **Data Accessibility**: The analysis relies on assumptions regarding pricing distribution and consumer behavior, which can vary widely. Access to detailed transaction data is crucial for more precise analyses.
- **Generalizability**: The findings from a specific case, such as McDonald’s, may not universally apply to all businesses or industries. Each organization has unique factors that influence pricing strategies.
- **Temporal Factors**: Market conditions, economic trends, and consumer preferences are subject to change. Continuous monitoring and real-time data analysis are necessary to ensure the effectiveness of pricing strategies.

Future Implications and the Role of AI

As the landscape of data analytics continues to evolve, the integration of artificial intelligence (AI) technologies is poised to transform the industry. AI can automate complex data analysis processes, providing deeper insights into consumer behavior and pricing strategies. Machine learning algorithms can predict future trends based on historical data, allowing businesses to adapt their pricing models proactively. Moreover, AI-driven analytics can enhance the accuracy of data collection and processing, mitigating the limitations of traditional methods.
As businesses increasingly rely on data-driven decision-making, the role of data engineers will become even more critical in harnessing AI technologies to optimize pricing strategies and improve overall business performance.

Conclusion

In summary, understanding the implications of pricing changes, such as those implemented by McDonald’s, underscores the importance of data analytics in modern business practices. By leveraging data-driven insights, organizations can optimize pricing strategies to enhance consumer perception and maximize revenue. As advancements in AI continue to shape the industry, data engineers will play a pivotal role in driving these changes, ensuring that businesses can navigate the complexities of pricing dynamics effectively.
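The rounding analysis described in the pricing summary can be sketched in a few lines. This is a minimal illustration, assuming a symmetric "nearest five cents" rule applied to the final cash total; the sample totals are hypothetical and are not the data behind the 0.04-cent figure quoted above.

```python
def round_to_nearest_five(cents: int) -> int:
    """Round a non-negative amount in cents to the nearest multiple of five."""
    remainder = cents % 5
    if remainder <= 2:
        return cents - remainder      # totals ending in 1 or 2 (or 6, 7) round down
    return cents + (5 - remainder)    # totals ending in 3 or 4 (or 8, 9) round up


def average_rounding_gain(totals_in_cents):
    """Mean of (rounded - original) in cents; a positive value favors the seller."""
    diffs = [round_to_nearest_five(t) - t for t in totals_in_cents]
    return sum(diffs) / len(diffs)


# Evenly spread final digits cancel out exactly.
uniform_totals = list(range(1000, 1005))
print(average_rounding_gain(uniform_totals))

# Hypothetical after-tax totals whose final digits cluster on 3 and 4.
skewed_totals = [1084, 649, 1298, 537]
print(average_rounding_gain(skewed_totals))
```

Working in integer cents avoids floating-point surprises with currency. The sketch also shows why the result depends on the distribution of totals: with evenly spread final digits the gain is zero, so any net effect comes from how menu prices and local tax rates shape the last digit.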

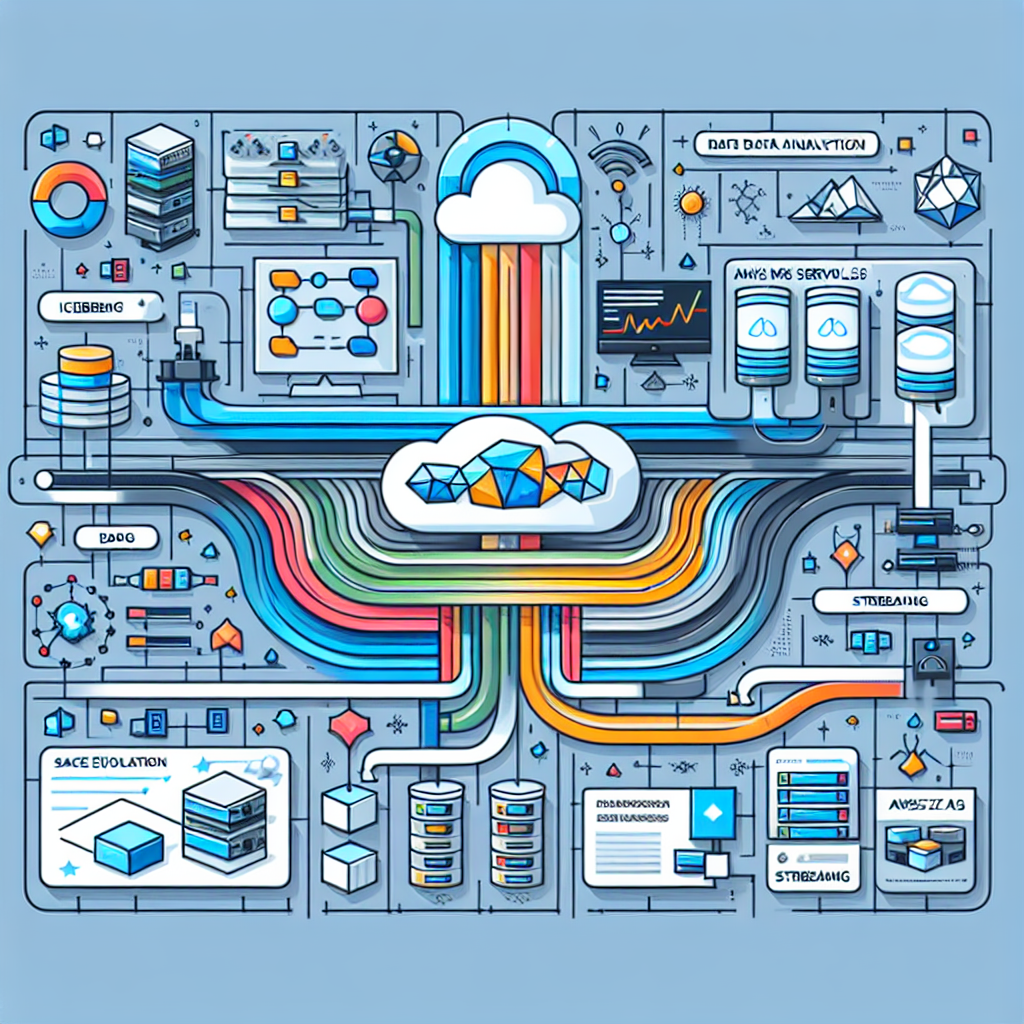

Introduction

In the contemporary landscape of big data engineering, the efficient synchronization of real-time data within data lakes is paramount. Organizations are increasingly grappling with challenges related to data accuracy, latency, and scalability. As businesses strive for actionable insights derived from near real-time data, the need for advanced data management solutions becomes ever more critical. This blog post focuses on the integration of Amazon MSK Serverless, Apache Iceberg, and AWS Glue streaming as a comprehensive solution to unlock real-time data insights through schema evolution.

Main Goal and Implementation Strategy

The primary objective of this integration is to facilitate real-time data processing and analytics by leveraging schema evolution capabilities. Schema evolution refers to the ability to modify the structure of a data table to accommodate changes in the data over time without interrupting ongoing operations. This is particularly vital in streaming environments where data is continuously ingested from diverse sources. By employing Apache Iceberg’s robust schema evolution support, organizations can ensure that their streaming pipelines remain operational even when underlying data structures change.

Key Advantages of the Integrated Solution

Continuous Data Processing: The solution ensures uninterrupted data flows, enabling organizations to maintain analytical capabilities without the need for manual intervention during schema changes.

Scalability: Utilizing Amazon MSK Serverless allows for automatic provisioning and scaling of resources, eliminating the complexities typically associated with capacity management.

Real-Time Analytics: By streamlining the data processing pipeline from Amazon RDS to Iceberg tables via AWS Glue, businesses can access up-to-date insights, thus enhancing decision-making processes.

Reduced Operational Friction: The integration minimizes technical complexity and operational overhead by automating schema evolution, which is crucial for environments with frequently changing data models.

Future-Proofing Data Infrastructure: The architecture’s inherent flexibility allows it to adapt to various use cases, ensuring that organizations can respond effectively to evolving data needs.

Caveats and Limitations

While the integrated solution offers numerous advantages, there are limitations to consider. Notably, certain schema changes, such as dropping or renaming columns, may still require manual intervention. Furthermore, organizations must ensure they have the necessary AWS infrastructure and IAM permissions set up to leverage these capabilities fully. Performance may also be contingent upon how well the data sources are managed and the frequency of changes occurring within the source systems.

Future Implications and AI Developments

The impact of artificial intelligence (AI) on data engineering practices is poised to be transformative. As AI technologies evolve, the automation of data processing and schema evolution could become more sophisticated, further reducing the need for human oversight. Enhanced predictive analytics, powered by AI, may enable organizations to anticipate data changes and adjust their schemas proactively. Moreover, the integration of AI could lead to smarter data pipelines that optimize performance, improve data quality, and reduce latency even further, thus reshaping the role of data engineers in the future.

Conclusion

This exploration of the integration of Amazon MSK Serverless, Apache Iceberg, and AWS Glue streaming illustrates a path toward unlocking real-time data insights through schema evolution. By addressing the challenges of data latency and accuracy, organizations can enhance their analytical capabilities, ultimately driving better business strategies.
As the field of big data engineering continues to evolve, embracing such innovative solutions will be critical for maintaining a competitive edge in a data-driven world.
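The idea of additive schema evolution described in the streaming summary can be illustrated with a toy model. The sketch below is plain Python and deliberately does not use the Apache Iceberg, AWS Glue, or Amazon MSK APIs; the class `EvolvingTable` and its methods are hypothetical names invented for this illustration. It mirrors only the behavior the summary describes: new columns are accepted without stopping ingestion, while other changes are left to manual handling.

```python
class EvolvingTable:
    """Toy in-memory table that accepts additive schema changes only."""

    def __init__(self, schema):
        self.schema = dict(schema)   # column name -> Python type, in insertion order
        self.rows = []               # each row is a dict keyed by column name

    def append(self, record):
        for name, value in record.items():
            if name not in self.schema:
                # Additive evolution: register a column the first time it carries a value.
                if value is not None:
                    self.schema[name] = type(value)
            elif value is not None and not isinstance(value, self.schema[name]):
                # Type changes are not evolved automatically in this sketch.
                expected = self.schema[name].__name__
                raise TypeError(f"column {name!r} expects {expected}")
        self.rows.append(dict(record))

    def read(self):
        # Rows written before a column existed read back as None for that column.
        return [{name: row.get(name) for name in self.schema} for row in self.rows]


# Hypothetical stream of order events; the second event introduces a new field.
orders = EvolvingTable({"order_id": int, "amount": float})
orders.append({"order_id": 1, "amount": 9.5})
orders.append({"order_id": 2, "amount": 4.0, "currency": "USD"})

rows = orders.read()
print(list(orders.schema))
print(rows)
```

The earlier row reads back with `currency` set to `None`, which is the usual expectation for a column added after the data was written. Dropping or renaming columns is intentionally absent here, in line with the caveat that such changes may still need manual intervention.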

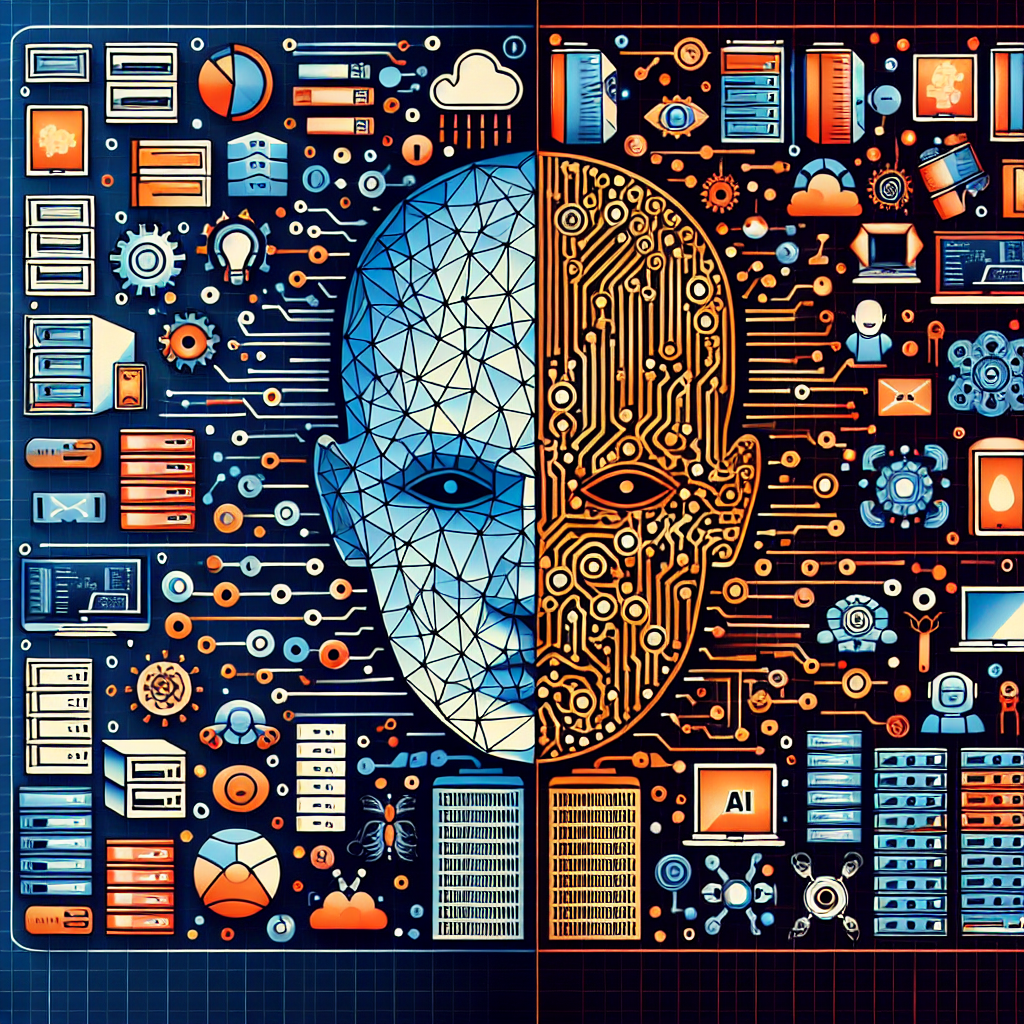

Context: The Evolving Landscape of Data Engineering in AI

As artificial intelligence (AI) technology continues to permeate various sectors, the role of data engineering becomes increasingly pivotal. Data engineers are tasked with managing the complexities of unstructured data and the demands of real-time data pipelines, which are significantly heightened by advanced AI models. With the growing sophistication of these models, data engineers must navigate an environment characterized by escalating workloads and a pressing need for efficient data management strategies. This transformation necessitates a reevaluation of the data engineering landscape, as professionals in this field are expected to adapt to the evolving requirements of AI-driven projects.

Main Goal: Enhancing the Role of Data Engineers in AI Integration

The central aim emerging from this discourse is to recognize and enhance the integral role of data engineers within organizations leveraging AI technologies. This can be achieved through targeted investment in skills development, strategic resource allocation, and the adoption of advanced data management tools. By empowering data engineers with the necessary skills and resources, organizations can optimize their data workflows and facilitate a more seamless integration of AI capabilities into their operations.

Advantages of a Strong Data Engineering Framework

Increased Organizational Value: A significant 72% of technology leaders acknowledge that data engineers are crucial to business success, with the figure rising to 86% in larger organizations where AI maturity is more pronounced. This alignment underscores the value that proficient data engineering brings to organizations, particularly in sectors such as financial services and manufacturing.

Enhanced Productivity: Data engineers are dedicating an increasing proportion of their time to AI projects, with engagement levels nearly doubling from 19% to 37% over two years. This trend is expected to escalate further, with projections indicating an average of 61% involvement in AI initiatives in the near future. Such engagement fosters greater efficiency and innovation within data management processes.

Adaptability to Growing Workloads: The demand for data engineers to manage expanding workloads is evident, as 77% of surveyed professionals anticipate an increase in their responsibilities. By recognizing these challenges and providing adequate support, organizations can ensure that data engineers remain effective amidst growing demands.

Future Implications: The Path Forward for AI and Data Engineering

The trajectory of AI advancements suggests a continued integration of sophisticated technologies within data engineering practices. As organizations increasingly rely on AI-driven insights, the implications for data engineers will be profound. Future developments may include the automation of routine data management tasks, enabling data engineers to focus on higher-level analytical functions. However, this evolution must be approached with caution, ensuring that data engineers are equipped with the necessary skills to leverage emerging technologies effectively. Continuous professional development and adaptive strategies will be essential for data engineers to thrive in this dynamic landscape.

Context

The ongoing evolution of artificial intelligence (AI) is significantly influenced by advancements in computational technologies. At the recent NVIDIA AI Day held in Sydney, industry leaders gathered to explore the implications of what they refer to as “sovereign AI.” Notably, Brendan Hopper, the Chief Information Officer for Technology at the Commonwealth Bank of Australia, articulated how next-generation compute capabilities are driving AI innovations. This gathering underscored the essence of collaboration between technology providers and local ecosystems, setting the stage for a transformative era in AI applications.

Main Goal of the Event

The primary objective of the event, as articulated by the technology leaders present, was to highlight how emerging compute technologies can enhance AI capabilities. This goal can be achieved through a concerted effort involving infrastructure development, strategic partnerships, and a commitment to innovation. The discussions emphasized the importance of high-performance computing and the role it plays in fostering an environment conducive to AI advancements.

Advantages of Advancements in AI and Compute Technologies

Enhanced Computational Power: The integration of quantum and high-performance computing is redefining the pace of scientific discovery. As highlighted by Giuseppe M. J. Barca, co-founder and head of research at QDX Technologies, these advancements empower AI to tackle complex problems with greater accuracy and efficiency.

Growth of the AI Ecosystem: The event illustrated a growing ecosystem of over 600 Australia-based NVIDIA Inception startups and numerous higher education institutions leveraging NVIDIA technologies. This ecosystem fosters innovation and provides a platform for collaboration among researchers and industry leaders.

Cross-Industry Collaboration: NVIDIA AI Day showcased partnerships between technology developers and various sectors, including finance and public services. This collaboration presents opportunities for industries to leverage AI for transformative solutions, enhancing service delivery and operational efficiencies.

Caveats and Limitations

While the advancements in AI and computational technologies present numerous benefits, there are inherent limitations and challenges. The rapid pace of technological change may outstrip regulatory frameworks, leading to ethical concerns regarding data usage and governance. Furthermore, the dependency on advanced infrastructure may pose barriers for smaller organizations and startups striving to enter the market.

Future Implications

The implications of AI advancements are profound, particularly concerning the role of generative AI models. As computational capabilities continue to evolve, they will enable AI systems to generate more sophisticated outputs, enhancing applications in various fields, including healthcare, finance, and creative industries. The ongoing developments will likely lead to an increase in AI-driven solutions, promoting efficiency, personalization, and innovation. However, it will also necessitate ongoing scrutiny regarding ethical practices and the societal impacts of widespread AI integration.

Contextual Overview

The recent advancements in the Qwen Deep Research tool, introduced by Alibaba’s Qwen Team, signify a transformative shift in the generative AI landscape, particularly for professionals engaged in research and content creation. This update enables users to swiftly convert comprehensive research reports into various digital formats, including interactive web pages and podcasts, with minimal effort. The integration of functionalities such as Qwen3-Coder, Qwen-Image, and Qwen3-TTS illustrates a significant proprietary expansion that enhances the utility of AI in research environments. By facilitating an integrated workflow, the Qwen Deep Research tool empowers users to generate, publish, and disseminate knowledge efficiently, thus aligning with the demands of modern content consumption.

Main Objective and Achievement Mechanism

The primary goal of the Qwen Deep Research update is to streamline the research process from initiation to publication by enabling multi-format output. Users can achieve this by utilizing the Qwen Chat interface to request specific information, after which the AI generates a comprehensive report. This report can subsequently be transformed into a live web page or an audio podcast through a straightforward user interface. The effective combination of AI capabilities allows for a seamless transition from text-based research to interactive and auditory formats, catering to diverse audience preferences.

Advantages of Qwen Deep Research

- **Multi-Modal Output**: The tool allows for the creation of diverse content forms (written reports, interactive web pages, and audio podcasts), enabling comprehensive knowledge dissemination across various platforms.
- **User-Friendly Interface**: The design of the Qwen Chat interface facilitates a smooth user experience, allowing researchers to generate complex content with just a few clicks, thus reducing the time and effort typically required in traditional research workflows.
- **Integrated Workflow**: By hosting the entire process, from research execution to content deployment, Qwen eliminates the need for users to configure or maintain separate infrastructures, which enhances productivity and reduces overhead.
- **Customization Options**: The podcast feature offers a selection of different voice outputs, adding a personalized touch to audio content, which can appeal to a broader audience.
- **Real-Time Data Analysis**: The platform’s capability to pull data from multiple sources and analyze discrepancies in real time supports accurate and reliable research outputs.

However, it is crucial to note certain limitations:

- **Audio Quality and Language Constraints**: Early users have reported that the voice outputs may sound robotic compared to other AI tools. Additionally, the current version may not support language changes, limiting accessibility for non-English speakers.
- **Dependency on Proprietary Infrastructure**: While the tool offers integrated services, it also confines users within a proprietary ecosystem, potentially hindering those who prefer or require more customizable solutions.

Future Implications of AI Developments

As generative AI continues to evolve, tools like Qwen Deep Research are likely to redefine the landscape of research and content creation. The implications of this development are far-reaching:

- **Enhanced Accessibility**: The ability to generate multiple content formats from a single source could democratize access to information, allowing diverse audiences to engage with research findings in ways that suit their preferences.
- **Shift in Research Methodologies**: Traditional research practices may need to adapt to incorporate AI-driven tools that emphasize efficiency and multi-format output, potentially leading to a more collaborative and dynamic research environment.
- **Emergence of New Content Standards**: As tools become more advanced, expectations regarding the quality and presentation of research outputs may rise, prompting users to seek even greater sophistication in AI capabilities.

In summary, the Qwen Deep Research update exemplifies a significant stride in the deployment of generative AI models within the research domain, underscoring the potential for AI to enhance productivity and accessibility in knowledge-sharing. The future will likely see continued integration of such technologies, further shaping the way research is conducted and communicated.

Context and Significance

The phenomenon of coastal flooding poses a significant risk to communities in the United States, with a staggering 26% probability of flooding occurring within a 30-year timeframe. This risk is expected to escalate due to climate change and rising sea levels, rendering coastal areas increasingly susceptible to natural disasters. The research led by Michael Beck at the Center for Coastal Climate Resilience at UC Santa Cruz exemplifies the integration of advanced computational techniques and ecological modeling to address these challenges. By utilizing NVIDIA GPU-accelerated visualizations, Beck’s team aims to elucidate flood risks for governmental bodies and organizations, thus promoting nature-based solutions that mitigate potential damages.

Main Goal and Achievements

The principal objective of the UC Santa Cruz initiative is to enhance the understanding of coastal flooding through precise modeling and visualizations, which inform decision-making regarding adaptation and preservation strategies. The integration of NVIDIA CUDA-X software and high-performance GPUs significantly expedites the simulation processes, reducing computation times and enabling detailed scenario analyses. This achievement is crucial in demonstrating the efficacy of natural infrastructure, such as coral reefs and mangroves, in mitigating flood risks and supporting coastal resilience.

Advantages of Advanced Flood Modeling

Accelerated Simulations: The use of NVIDIA RTX GPUs has decreased model computation times from approximately six hours to around 40 minutes, allowing for more efficient analyses.

Enhanced Visualization: High-resolution visualizations facilitate a clearer understanding of complex flooding scenarios, which is essential for motivating action among stakeholders.

Global Mapping Initiatives: The initiative aims to map small-island developing states globally, providing critical data for international climate conferences and enhancing global awareness of flood risks.

Integration of Nature-Based Solutions: By demonstrating the protective benefits of coral reefs and mangroves, the modeling efforts promote strategies that leverage natural ecosystems for flood risk reduction.

However, it is essential to acknowledge potential limitations. The reliance on advanced computational resources may not be feasible for all research institutions, and the efficacy of nature-based solutions can vary based on local ecological conditions.

Future Implications of AI in Flood Modeling

The evolution of artificial intelligence (AI) and its applications in environmental modeling is poised to revolutionize the field. As AI technologies continue to advance, researchers will likely develop more sophisticated algorithms capable of analyzing larger datasets and generating predictive models with greater accuracy. This could lead to enhanced real-time flood forecasting, improved risk assessments, and more effective disaster response strategies. Moreover, the increasing accessibility of AI tools may empower more institutions to engage in similar research initiatives, thereby broadening the scope of flood risk management globally.

In conclusion, the intersection of advanced computing and ecological modeling, as demonstrated by UC Santa Cruz’s initiative, not only addresses immediate flood risk challenges but also sets a precedent for future research endeavors in the field of environmental resilience. The ongoing development of AI technologies will undoubtedly play a critical role in shaping responses to climate change and enhancing the sustainability of coastal communities around the world.
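Two figures in the flood-modeling summary lend themselves to a quick arithmetic check: the 26% chance of flooding over 30 years and the drop from about six hours to about 40 minutes per simulation. The sketch below assumes that each year is independent with the same flood probability; that assumption is not stated in the summary and is used here only to show how a cumulative figure relates to an annual one.

```python
def cumulative_probability(annual_probability: float, years: int) -> float:
    """Chance of at least one event in `years`, assuming independent, identical years."""
    return 1.0 - (1.0 - annual_probability) ** years


def implied_annual_probability(cumulative: float, years: int) -> float:
    """Invert the formula above to recover the per-year probability."""
    return 1.0 - (1.0 - cumulative) ** (1.0 / years)


# Annual probability implied by a 26% chance over 30 years (under the stated assumption).
annual = implied_annual_probability(0.26, 30)

# Going the other way: a 1% annual chance accumulated over 30 years.
over_thirty_years = cumulative_probability(0.01, 30)

# Speedup from roughly six hours to roughly 40 minutes per simulation.
speedup = (6 * 60) / 40

print(f"implied annual probability: {annual:.4f}")
print(f"30-year probability at 1% per year: {over_thirty_years:.4f}")
print(f"speedup: {speedup:.1f}x")
```

Read this way, the 26% figure is consistent with an annual chance of about 1%, and the reported runtimes correspond to a speedup of about ninefold.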

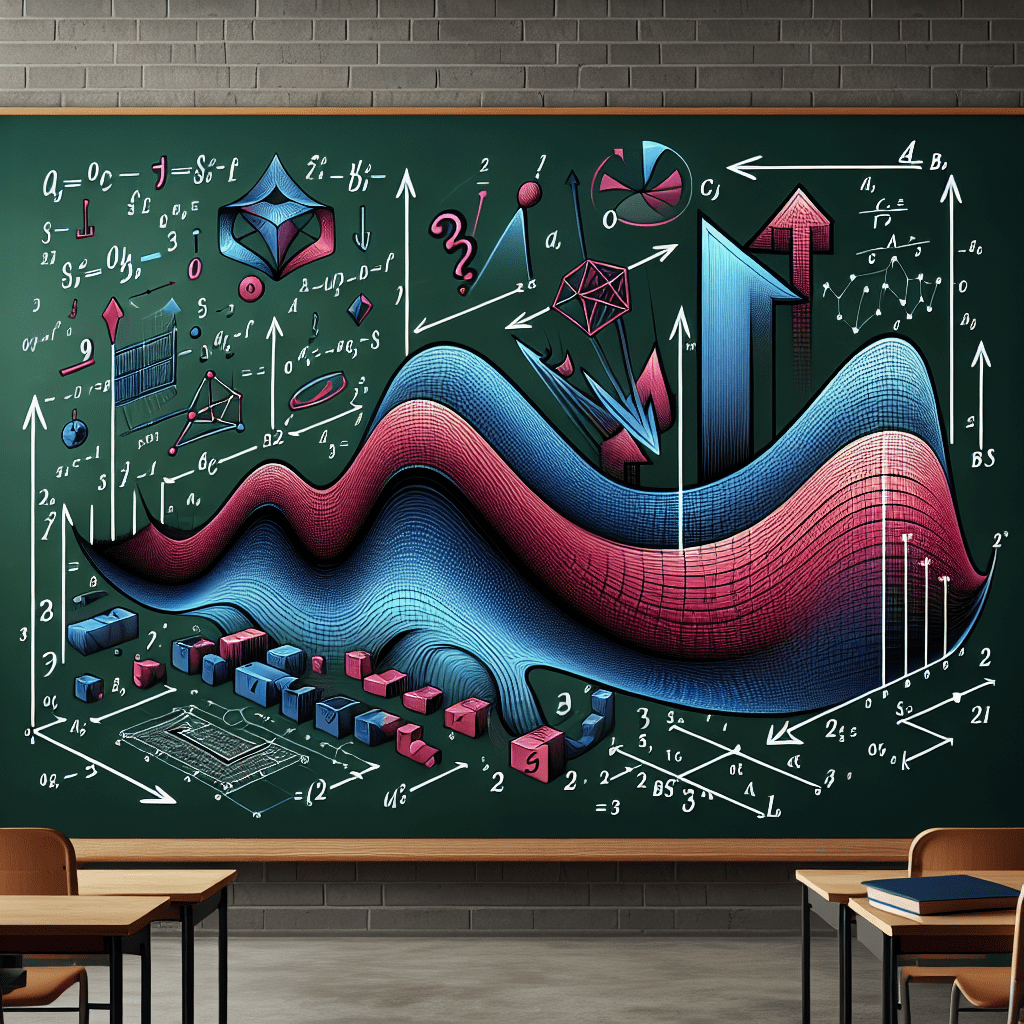

Context

Computer vision is a major domain concerned with the analysis of images and videos. Although machine learning models often dominate discussions of computer vision, classical algorithms can sometimes outperform learned approaches. Within this field, feature detection plays a pivotal role by identifying distinct regions of interest within images. These features are then used to build feature descriptors, which are numerical vectors that represent localized areas of an image. By combining feature descriptors from multiple images of the same scene, practitioners can perform tasks such as image matching or scene reconstruction.

The original article draws parallels to calculus to explain image derivatives and gradients. Understanding these concepts is essential for grasping the principles behind convolutional kernels, particularly the Sobel operator, a widely used tool for edge detection.

Main Goal and Achievement

The primary objective of the original post is to provide a foundational understanding of image derivatives, gradients, and the Sobel operator as essential tools for feature detection in computer vision. The post builds this understanding in three steps: the mathematical representation of image properties, practical examples of applying convolutional kernels, and the implementation of these concepts in programming environments such as OpenCV.

Advantages of Understanding Image Derivatives and Gradients

Enhanced Feature Detection: Image derivatives and gradients measure how quickly pixel intensity changes, which makes it possible to detect edges and other features. This is critical in applications such as object recognition, image segmentation, and scene reconstruction.

Robustness Against Noise: The Sobel operator is more resilient to noise than a plain difference between adjacent pixels, because its kernel also takes a weighted average of neighboring pixels perpendicular to the direction of differentiation, which stabilizes edge detection.

Improved Image Processing Techniques: By applying convolutional kernels, machine learning practitioners can improve the quality of the input data fed to their algorithms, which can lead to more accurate predictions and analyses.

Foundation for Advanced Techniques: Knowledge of first-order derivatives and the Sobel operator serves as a stepping stone to more complex image analysis algorithms, such as convolutional neural networks (CNNs).

There are also limitations to acknowledge, such as the computational cost of processing high-resolution images and the effect of varying lighting conditions on gradient calculations.

Future Implications

As artificial intelligence continues to evolve, particularly in computer vision, the methods surrounding feature detection, including image derivatives and operators such as Sobel and Scharr, are expected to keep advancing. Innovations in AI are likely to make these processes more efficient, enabling real-time applications in fields such as autonomous vehicles, medical imaging, and augmented reality. The integration of deep learning techniques may further augment traditional methods, leading to more sophisticated and accurate feature detection.

In conclusion, understanding image derivatives, gradients, and the Sobel operator is crucial for practitioners in applied machine learning. This knowledge improves feature detection and lays the groundwork for future advances in image analysis.
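To make the idea of an image gradient concrete, the following is a minimal sketch of the standard 3x3 Sobel kernels applied to a toy grayscale image in pure Python. The helper names (`correlate3x3`, `sobel_magnitude`) and the toy image are illustrative assumptions and do not come from the original post; borders are simply skipped, so the output is two pixels smaller in each dimension.

```python
import math

# Standard 3x3 Sobel kernels: each differences along one axis and
# smooths with weights (1, 2, 1) along the other axis.
SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1],
           [0, 0, 0],
           [1, 2, 1]]


def correlate3x3(image, kernel):
    """Slide a 3x3 kernel over the interior pixels of a 2D list (no padding)."""
    height, width = len(image), len(image[0])
    out = [[0] * (width - 2) for _ in range(height - 2)]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            out[y - 1][x - 1] = sum(
                kernel[j][i] * image[y + j - 1][x + i - 1]
                for j in range(3)
                for i in range(3)
            )
    return out


def sobel_magnitude(image):
    """Gradient magnitude sqrt(gx^2 + gy^2) at each interior pixel."""
    gx = correlate3x3(image, SOBEL_X)
    gy = correlate3x3(image, SOBEL_Y)
    return [[math.hypot(a, b) for a, b in zip(row_x, row_y)]
            for row_x, row_y in zip(gx, gy)]


# Hypothetical toy image: dark left half, bright right half (a vertical edge).
image = [[0, 0, 0, 10, 10, 10] for _ in range(5)]
gx = correlate3x3(image, SOBEL_X)   # responds to horizontal intensity change
gy = correlate3x3(image, SOBEL_Y)   # responds to vertical intensity change
magnitude = sobel_magnitude(image)
print(gx[0])         # [0, 40, 40, 0]
print(magnitude[0])  # [0.0, 40.0, 40.0, 0.0]
```

The horizontal response is nonzero only where the window straddles the edge, and the vertical response is zero everywhere because every row of the toy image is identical. In OpenCV the comparable call would be `cv2.Sobel(img, cv2.CV_64F, 1, 0, ksize=3)` for the x-derivative, although OpenCV pads the borders by default, so its output keeps the input size.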

Context of Personalization in Pharmaceutical Sales and Marketing

The dynamic landscape of the pharmaceutical industry necessitates a paradigm shift towards personalization, particularly in sales and marketing operations. As pharmaceutical companies strive to capture the attention of healthcare professionals (HCPs), the imperative for tailored communication becomes increasingly evident. Recent estimates indicate that biopharmaceutical firms reached only 45% of HCPs in 2024, a significant decline from 60% in 2022. This decline underscores the necessity for innovative strategies emphasizing personalization, real-time communication, and relevant content. Such strategies are essential for fostering trust and effectively engaging HCPs in a competitive market. However, the rising volume of content requiring medical, legal, and regulatory (MLR) review presents substantial challenges, potentially leading to delays and missed opportunities.

Main Goal and Achievement Strategies

The primary goal articulated in the original discourse revolves around enhancing the ability of pharmaceutical companies to engage HCPs through personalized communication strategies. Achieving this goal necessitates the implementation of advanced AI solutions capable of automating MLR processes. By leveraging agentic AI, pharmaceutical firms can streamline content generation, ensure compliance with regulatory standards, and expedite the review process. This transformation is not merely aspirational but essential for maintaining competitive advantage in an evolving marketplace.

Advantages of Implementing Agentic AI

Increased Engagement: Personalized outreach, facilitated by AI-driven insights, can significantly enhance engagement with HCPs. By tailoring content to meet the specific needs and preferences of healthcare providers, companies can effectively capture their attention.

Enhanced Efficiency: The integration of agentic AI into MLR processes can reduce the time required for content approval, thereby minimizing delays and optimizing the speed of market entry for new products.

Improved Compliance: AI systems can assist in ensuring that all materials comply with regulatory standards, reducing the risk of non-compliance and associated penalties.

Cost Reduction: Streamlining the content review process through automation can lead to substantial cost savings, allowing resources to be reallocated towards more strategic initiatives.

Data-Driven Insights: AI can analyze vast amounts of data to provide actionable insights into HCP preferences and behaviors, enabling pharmaceutical companies to tailor their approaches effectively.

Nevertheless, it is essential to consider potential limitations, such as the reliance on technology that may not fully capture the nuances of human interaction and the ethical implications surrounding data privacy and security.

Future Implications of AI Developments in Pharmaceutical Marketing

The growing integration of AI into pharmaceutical marketing strategies promises significant future implications. As technology continues to evolve, we can anticipate even more sophisticated AI applications that will enhance the personalization of marketing efforts. Future advancements may enable real-time adjustments to marketing strategies based on emerging trends and HCP feedback, fostering a more agile and responsive approach. Moreover, the potential for enhanced predictive analytics will enable pharmaceutical companies to anticipate HCP needs and preferences more accurately, leading to more effective engagement strategies. However, as these technologies develop, ongoing ethical considerations regarding data usage and patient privacy will remain paramount, necessitating a balanced approach that prioritizes both innovation and compliance.

Contextual Overview of AI Agents and Their Definitions

The term ‘AI agent’ has emerged as a focal point of debate within the technology sector, particularly in Silicon Valley. Like a Rorschach test, the term reflects the differing interpretations held by various stakeholders, including CTOs, CMOs, business leaders, and AI researchers. These conflicting perceptions have led to significant misalignment in investment, as enterprises put billions into disparate interpretations of agentic AI systems. The resulting gap between marketing rhetoric and actual capabilities poses a substantial risk to digital transformation efforts across numerous industries.

Three Distinct Perspectives on AI Agents

1. The Executive Perspective: AI as an Enhanced Workforce

From the viewpoint of business executives, AI agents epitomize the ultimate solution for improving operational efficiency. These leaders envision intelligent systems designed to manage customer interactions, automate intricate workflows, and scale human expertise. While there are examples, such as Klarna’s AI assistants managing a significant portion of customer service inquiries, the gap between current implementations and the ideal of true autonomous decision-making remains considerable.

2. The Developer Perspective: The Role of the Model Context Protocol (MCP)

Developers have adopted a more nuanced definition of AI agents, largely influenced by the Model Context Protocol (MCP) pioneered by Anthropic. This protocol allows large language models (LLMs) to interact with external systems, databases, and APIs, so the resulting integrations act as connectors rather than autonomous entities. These MCP agents extend the capabilities of LLMs by providing access to real-time data and specialized tools, although labeling these interfaces as “agents” can be misleading, as they do not possess true autonomy.

3. The Researcher Perspective: Autonomous Systems

Research institutions and tech R&D departments focus on what they classify as autonomous agents: sophisticated software modules capable of independent decision-making without human intervention. These agents are characterized by their ability to learn from their environment and adapt strategies in real time. The concept encompasses independent, goal-oriented entities that can reason and execute complex processes, which introduces a level of unpredictability not seen in traditional systems.

Risks Associated with Autonomous Agents

While the potential for autonomous agents to tackle complex business problems is promising, significant risks accompany their deployment. The ability of these agents to make independent decisions in sensitive domains such as finance and healthcare raises concerns regarding accountability and error management. Past events, such as “flash crashes” in algorithmic trading, underscore the dangers posed by unregulated autonomous decision-making.

Knowledge Graphs: Enabling Accountability in AI

Knowledge graphs emerge as a critical solution for addressing the autonomy problem associated with AI agents. By offering a structured representation of relationships and decision pathways, knowledge graphs can transform opaque AI systems into accountable entities. They serve as both a repository of contextual information and a mechanism for enforcing constraints, thus ensuring that agents operate within ethical and legal boundaries.

Five Principles for Governing Autonomous Agents

Leading enterprises are beginning to embrace architectures that combine LLMs with knowledge graphs. Here are five guiding principles for implementing accountable AI systems:

1. **Define Autonomy Boundaries**: Clearly delineate areas of operation for agents, distinguishing between autonomous and human-supervised activities.
2. **Implement Semantic Governance**: Utilize knowledge graphs to encode essential business rules and compliance requirements that agents must adhere to.
3. **Create Audit Trails**: Ensure that each decision made by an agent can be traced back to specific nodes within the knowledge graph, facilitating transparency and continuous improvement.
4. **Enable Dynamic Learning**: Allow agents to suggest updates to the knowledge graph, contingent upon human oversight or validation protocols.
5. **Foster Agent Collaboration**: Design multi-agent systems where specialized agents operate collectively, using the knowledge graph as their common reference.

Main Goals and Achievements

The primary objective articulated in the original content is to establish a framework for developing accountable AI agents through the integration of knowledge graphs. This can be achieved by ensuring that AI systems are governed by clear principles that promote transparency, accountability, and ethical compliance. By adhering to these guidelines, organizations can leverage AI technologies while mitigating the associated risks.

Advantages of Implementing Knowledge Graphs in AI Systems

1. **Enhanced Accountability**: Knowledge graphs provide a structured framework for tracking decision lineage, which can enhance accountability in AI systems.
2. **Improved Contextual Awareness**: They facilitate a deeper understanding of relationships and historical patterns, which is crucial for informed decision-making.
3. **Regulatory Compliance**: By enforcing constraints, knowledge graphs help organizations navigate the complex landscape of legal and ethical requirements.
4. **Dynamic Learning Capabilities**: They allow for the integration of new insights into the operational framework of AI agents, promoting continuous learning.
5. **Operational Efficiency**: Early adopters of accountable AI agents have reported significant reductions in decision-making time, thereby enhancing operational efficiency.

Despite these advantages, it is essential to recognize potential limitations, such as the challenges associated with maintaining the accuracy and relevance of knowledge graphs over time.

Future Implications for AI Development

The trajectory of AI development suggests that the integration of knowledge graphs will be paramount in shaping the future landscape of Natural Language Understanding technologies. As AI systems become more autonomous, the importance of accountability and transparency will only increase. Future advancements may lead to the emergence of more sophisticated autonomous agents capable of complex decision-making across various domains. However, the success of these developments will hinge on the establishment of robust governance structures that prioritize ethical considerations and regulatory compliance.
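As a rough illustration of the first three governance principles above (autonomy boundaries, semantic governance, and audit trails), the sketch below gates an agent's actions against rule nodes and records which node justified each decision. Everything here is hypothetical: a plain dictionary stands in for a real knowledge graph, and the names `Rule`, `GovernedAgent`, and the `rule:refund-limit` node are invented for the example rather than taken from the original post or any library.

```python
from dataclasses import dataclass, field


@dataclass
class Rule:
    node_id: str       # hypothetical identifier of the rule node in the graph
    action: str        # action type this rule governs
    max_amount: float  # above this threshold a human must approve


@dataclass
class GovernedAgent:
    rules: dict                                    # maps action name -> Rule
    audit_log: list = field(default_factory=list)  # one entry per decision

    def decide(self, action, amount):
        """Classify a requested action and record which rule node applied."""
        rule = self.rules.get(action)
        if rule is None:
            # No rule covers this action: it lies outside the autonomy boundary.
            outcome, node = "denied", None
        elif amount <= rule.max_amount:
            outcome, node = "autonomous", rule.node_id
        else:
            outcome, node = "needs_human_review", rule.node_id
        self.audit_log.append(
            {"action": action, "amount": amount,
             "outcome": outcome, "rule_node": node}
        )
        return outcome


# Hypothetical rule set: refunds up to 100.0 may be handled autonomously.
agent = GovernedAgent(
    rules={"refund": Rule("rule:refund-limit", "refund", 100.0)}
)
results = [
    agent.decide("refund", 40.0),
    agent.decide("refund", 500.0),
    agent.decide("wire_transfer", 10.0),
]
print(results)  # ['autonomous', 'needs_human_review', 'denied']
```

Each entry in `agent.audit_log` names the rule node behind the outcome, which is the traceability the audit-trail principle asks for. A production system would query an actual graph store and express richer constraints than a single threshold.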