Contextualizing AI Implementation in Enterprises In the rapidly evolving landscape of artificial intelligence (AI), many organizations have hastily embarked on the implementation of generative AI technologies, only to face challenges that hinder the realization of expected value. As organizations strive for measurable outcomes, the pressing question arises: how can they design AI systems that truly deliver success? At the forefront of this endeavor, Mistral AI collaborates with leading global enterprises to co-create bespoke AI solutions that address their most formidable challenges. From enhancing customer experience productivity with Cisco to innovating automotive intelligence with Stellantis and accelerating product innovation with ASML, Mistral AI employs foundational models and tailors AI systems to fit the unique contexts of each organization. Central to Mistral AI’s methodology is the identification of what they term an “iconic use case.” This crucial first step acts as a blueprint for AI transformation, distinguishing between genuine advancements and mere experimentation with technology. The careful selection of an impactful use case can significantly influence the trajectory of an organization’s AI journey. Defining the Main Goal of AI Use Case Selection The primary goal articulated in the original content is to identify an appropriate use case that serves as the initial catalyst for broader AI transformation within an organization. This involves selecting a project that is not only strategically sound but also urgent, impactful, and feasible. The effective identification of such a use case lays the groundwork for a successful AI deployment, steering organizations towards measurable success rather than aimless experimentation. Achieving this goal necessitates a structured approach, which includes evaluating potential use cases against specific criteria—strategic importance, urgency, impact, and feasibility. By systematically assessing these factors, organizations can prioritize projects that promise the greatest return on investment and align with their long-term strategic objectives. Advantages of an Effective Use Case Selection 1. **Strategic Alignment**: Selecting a use case that aligns with core business objectives ensures that AI initiatives have the backing of executive leadership, fostering organizational buy-in and support. 2. **Urgency in Problem-Solving**: A well-chosen use case addresses immediate business challenges, making it relevant to stakeholders and justifying the investment of time and resources. 3. **Pragmatic Impact**: Projects that are designed to be impactful from the outset enable organizations to deploy solutions in real-world environments, facilitating real user testing and feedback. 4. **Feasibility for Quick ROI**: Choosing projects that can be operationalized swiftly maintains momentum, as organizations can witness early successes that encourage further investment in AI initiatives. 5. **Learning and Adaptation**: The identification of an iconic use case fosters an iterative learning environment, allowing organizations to refine their AI strategies based on initial results and user feedback. Despite these advantages, it is essential to remain cognizant of potential limitations. For instance, overly ambitious projects may lack a clear path to quick ROI, and tactical fixes may not contribute significantly to long-term strategic goals. Future Implications of AI Developments Looking ahead, the implications of AI advancements in enterprise contexts are profound. As organizations increasingly adopt AI technologies, the landscape of business operations will continue to transform. The ability to leverage AI for strategic decision-making, customer engagement, and operational efficiency will become essential for competitive advantage. Moreover, as organizations refine their approach to selecting and implementing AI use cases, they will likely establish more robust frameworks for AI governance and ethics. This evolution will not only enhance the effectiveness of AI solutions but will also address concerns regarding transparency and accountability in AI deployments. In conclusion, the path to successful AI implementation begins with the strategic selection of an iconic use case. Organizations that adopt a structured, criteria-based approach to identifying their first AI project will pave the way for scalable transformations, unlocking the full potential of AI technologies for enhanced business outcomes. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Contextualizing the NPM Package in Computer Vision & Image Processing The exploration of innovative software solutions within the realm of Computer Vision and Image Processing is paramount for enhancing the capabilities of Vision Scientists. One such solution is the NPM package featured in the original post, which is designed to facilitate the transformation of complex data sets into comprehensible narratives. The concept of narrating Git history through the Terminal Time Machine, as proposed by Mayuresh Smita Suresh, extends beyond mere data management; it embodies a methodological shift towards more intuitive understanding and communication of technological processes. By leveraging such tools, Vision Scientists can articulate complex findings in a manner that is accessible not only to peers but also to stakeholders and the broader public. Main Goal and Its Achievement The primary objective of the Terminal Time Machine NPM package is to simplify the interpretation of Git history, allowing users to visualize their version control narratives effectively. Achieving this goal involves integrating the NPM package into existing workflows, enabling users to generate stories from their Git repositories. This tool aids in contextualizing past developments and fosters a culture of transparency and collaboration among team members. For Vision Scientists, this means they can better document their methodologies, share insights on algorithmic developments, and provide a clearer picture of project trajectories, which is essential for peer review and funding applications. Advantages of Utilizing the NPM Package The integration of the Terminal Time Machine package offers several notable advantages: 1. **Enhanced Communication**: It allows Vision Scientists to present their findings and project histories in a narrative form, making complex data more digestible for non-expert audiences. 2. **Improved Collaboration**: By visualizing Git histories, teams can better understand contributions and workflows, leading to more effective collaboration on interdisciplinary projects. 3. **Comprehensive Documentation**: The package aids in maintaining accurate documentation of code changes and project evolution, which is crucial in an era where reproducibility is a major concern in scientific research. 4. **Increased Engagement**: Presenting research through engaging narratives can attract interest from diverse audiences, potentially facilitating broader participation in research discussions and initiatives. However, it is essential to recognize certain limitations. The effectiveness of the package hinges on the comprehensive and consistent use of Git by all team members, which may not always be feasible. Furthermore, the narrative style may not capture all technical nuances, necessitating supplementary documentation for more complex methodologies. Future Implications of AI Developments in Vision Science As advancements in artificial intelligence continue to reshape the landscape of Computer Vision, the implications for Vision Scientists are profound. The integration of AI technologies is expected to refine the capabilities of tools like the Terminal Time Machine, enhancing their functionality and user experience. For instance, future iterations may incorporate machine learning algorithms to automate the narrative generation process, providing real-time insights based on user engagement and project dynamics. Moreover, as AI becomes increasingly embedded in research methodologies, it will enable Vision Scientists to delve deeper into data analysis, extracting patterns and correlations that were previously obscured. This evolution could lead to a new paradigm in scientific inquiry, where the synthesis of human insight and machine learning capabilities fosters unprecedented discoveries in image processing and computer vision. In conclusion, the Terminal Time Machine NPM package exemplifies the intersection of narrative techniques and technical advancements that can significantly benefit Vision Scientists. By embracing such tools, researchers can enhance their documentation practices, improve collaboration, and engage broader audiences, all while preparing for an exciting future where AI continues to drive innovation in their field. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Introduction In an era characterized by interconnected systems and data-driven decision-making, organizations face increasing pressure to maintain data resiliency. This foundational element is critical for ensuring that mission-critical applications remain operational, teams can function effectively, and compliance standards are adhered to. The introduction of advanced storage solutions, such as Azure NetApp Files Elastic Zone-Redundant Storage (ANF Elastic ZRS), represents a significant leap forward in enhancing data availability and minimizing disruptions, which is essential for modern enterprises, particularly in the domain of Big Data Engineering. Contextual Understanding of Data Resiliency Data resiliency is no longer merely a choice; it has become a necessity for organizations aiming to mitigate risks associated with downtime and data loss. In a landscape where every minute of inaccessibility can lead to substantial financial losses, implementing robust data management strategies is paramount. Azure NetApp Files (ANF) serves as a premier cloud-based storage solution designed to address these critical needs, particularly with the introduction of its Elastic ZRS service, which provides enhanced redundancy and rapid deployment capabilities. Main Goals and Achievements The primary objective of ANF Elastic ZRS is to ensure continuous data availability while achieving zero data loss, thereby safeguarding mission-critical applications against unexpected disruptions. This goal is realized through the implementation of synchronous replication across multiple availability zones (AZs) within a region. By automatically routing traffic to an alternative zone in the event of a failure, ANF Elastic ZRS effectively minimizes the risk of downtime and ensures seamless operational continuity. Advantages of ANF Elastic ZRS Enhanced Data Availability: By employing synchronous replication across multiple AZs, ANF Elastic ZRS assures that even during outages, data remains accessible, thereby facilitating uninterrupted business operations. Service Managed Failover: The automated failover mechanism enables organizations to maintain operational continuity without requiring manual intervention, significantly reducing the potential for human error during critical incidents. Cost Efficiency: Organizations can achieve high availability without the need for multiple separate storage volumes, thus optimizing costs associated with data management. Rich Data Management Features: ANF Elastic ZRS is built on the ONTAP® platform, supporting instant snapshots, cloning, and tiering, which are essential for effective enterprise data management. Support for Multiple Protocols: The service accommodates both NFS and SMB protocols, enhancing its flexibility for diverse workloads across different environments. Caveats and Limitations While the advantages of ANF Elastic ZRS are numerous, it is essential to consider potential limitations. For instance, organizations must ensure their applications are optimized for multi-AZ deployments to fully leverage the capabilities of this service. Additionally, there may be initial costs associated with migrating existing data to the new system, which could pose challenges for some businesses. Future Implications in the Context of AI Developments As artificial intelligence (AI) technologies continue to evolve, their integration with data storage solutions like ANF Elastic ZRS will likely enhance data management capabilities. Future advancements may include automated data optimization processes, predictive analytics for system performance, and intelligent decision-making frameworks that further minimize downtime and enhance overall data resiliency. Furthermore, AI may facilitate enhanced security measures, ensuring that data remains protected against emerging threats while maintaining compliance with regulatory standards. Conclusion In conclusion, implementing Azure NetApp Files Elastic Zone-Redundant Storage represents a significant advancement in achieving data resiliency in today’s complex digital landscape. By ensuring continuous data availability and zero data loss, organizations can safeguard their mission-critical applications against disruptions, thereby enhancing operational efficiency and compliance. The future of data management will undoubtedly be shaped by ongoing advancements in AI, which will further optimize these processes and ensure sustained resilience in the face of evolving challenges. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Introduction In the rapidly evolving landscape of Generative AI Models and Applications, understanding the nuances of context and real-time processing has emerged as a critical challenge. The term “brownie recipe problem,” coined by Instacart’s CTO Anirban Kundu, encapsulates the complexity faced by large language models (LLMs) in grasping user intent and contextual relevance. This discussion elucidates how fine-grained context is essential for LLMs to effectively assist users in real-time scenarios, particularly within the domain of grocery delivery services. Main Goal and Achievement Strategies The primary objective highlighted in the original content is the necessity for LLMs to possess a nuanced understanding of context to deliver timely and relevant assistance. Achieving this goal involves a multi-faceted approach that integrates user preferences, real-world availability of products, and logistical considerations. By breaking down the processing into manageable chunks—utilizing both large foundational models and smaller language models (SLMs)—companies like Instacart can streamline their AI systems. This segmentation enables LLMs to better interpret user intent and recommend appropriate products based on current market conditions, thereby enhancing user experience and engagement. Advantages of Fine-Grained Contextual Understanding Enhanced User Engagement: By providing tailored recommendations, LLMs can significantly improve user satisfaction. As Kundu notes, if reasoning takes too long, users may abandon the application altogether. Informed Decision-Making: The ability to discern between user preferences—such as organic versus regular products—enables LLMs to offer personalized options, thereby facilitating better choices. Logistical Efficiency: Understanding the perishability of items (e.g., ice cream and frozen vegetables) allows for optimized delivery schedules, reducing waste and ensuring customer satisfaction. Dynamic Adaptability: The integration of small language models allows for rapid re-evaluation of product availability, aiding in real-time problem-solving for stock shortages. Modular System Architecture: By adopting a microagent approach, firms can manage various tasks more efficiently, leading to improved reliability and reduced complexity in handling multiple third-party integrations. Caveats and Limitations Despite the advantages, there are notable challenges. As highlighted by Kundu, the integration of various agents requires meticulous management to ensure consistent performance across different platforms. Additionally, the system’s reliance on real-time data can lead to discrepancies in availability and response times, necessitating a robust error-handling mechanism to mitigate user dissatisfaction. Future Implications The advancements in AI technology are poised to significantly reshape the landscape of real-time assistance in various applications, not limited to grocery delivery. As LLMs become more adept at processing fine-grained contextual information, we can expect a paradigm shift toward more intelligent, responsive systems capable of meeting user needs with unprecedented efficiency. Furthermore, the increasing integration of standards like OpenAI’s Model Context Protocol (MCP) and Google’s Universal Commerce Protocol (UCP) will likely enhance interoperability among AI agents, fostering innovation across industries. Conclusion In conclusion, the challenges posed by the “brownie recipe problem” serve as a profound reminder of the importance of context in the application of Generative AI. By focusing on fine-grained contextual understanding, organizations can better harness the capabilities of LLMs to provide timely, personalized, and effective user experiences. The future of AI applications lies in the continuous improvement of these models, ensuring they not only comprehend user intent but also adapt to the complexities of the real world. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Contextualizing Scarcity and Intelligence in AI The current landscape of artificial intelligence (AI) encapsulates a paradox where computational power and model size are often mistaken as direct indicators of intelligence. In a world where colossal models are lauded for their billions of parameters, the fundamental principle of efficiency risks being overlooked. Historical examples, such as interstellar spacecraft and the human brain, illustrate that effective intelligence does not stem from sheer size but rather from optimizing limited resources. This notion posits that scarcity should not be perceived merely as a limitation, but as a catalyst for innovation and advancement in AI. The Main Goal: Efficiency Over Size The crux of the original discussion advocates for a paradigm shift in AI development, emphasizing that true intelligence manifests through efficiency rather than scale. This goal can be realized by prioritizing the design of compact, effective models that maximize performance while minimizing resource consumption. As we navigate through the complexities of AI, the emphasis should be placed on how to derive greater value from limited inputs, thereby fostering a culture of innovation that thrives within constraints. Structured Advantages of Efficiency in AI Cost-Effectiveness: Smaller, specialized models can achieve substantial functional value at a reduced cost compared to their larger counterparts. For instance, deploying a model with a trillion parameters for a specific task can be likened to using a supercomputer for basic calculations, illustrating the inefficiency of overkill. Reduced Latency: Models designed for edge inference can process data locally, diminishing the delays associated with remote data access. This characteristic is particularly beneficial in applications requiring real-time responses. Enhanced Privacy: By conducting inference on-device, sensitive information remains local, mitigating the risks associated with data transmission to cloud servers. Lower Environmental Impact: As AI systems increasingly require extensive energy resources, efficient models can significantly reduce the carbon footprint associated with large-scale data centers. Resilience and Adaptability: Systems that thrive within resource constraints demonstrate greater resilience, enabling them to adapt to varying environmental conditions and operational demands. However, it is important to note that while transitioning to smaller models offers clear advantages, potential limitations exist. For example, certain complex tasks may still require more extensive models to achieve desired accuracy levels, leading to a careful balance that must be maintained between size and performance. Future Implications for AI Development As the field of AI continues to evolve, the focus on efficiency over size is expected to gain momentum. The rise of technologies such as TinyML and edge AI signifies a shift towards localized solutions that can operate independently of expansive infrastructure. This trend not only democratizes access to AI capabilities in resource-limited environments but also aligns with the global push for sustainable and energy-efficient practices. Future developments in AI are likely to emphasize architectures that prioritize efficiency, ultimately reshaping the landscape of machine learning and its applications across various sectors. Conclusion The evolution of artificial intelligence is increasingly characterized by a commitment to efficiency as a measure of intelligence. By embracing the constraints of scarcity, practitioners can innovate and refine their approaches to machine learning, leading to sustainable and effective AI solutions. The future of AI will not be dictated by the magnitude of data or models but by the ingenuity to extract more from less, ensuring that intelligence is defined by its capacity for effective problem-solving in a resource-conscious manner. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

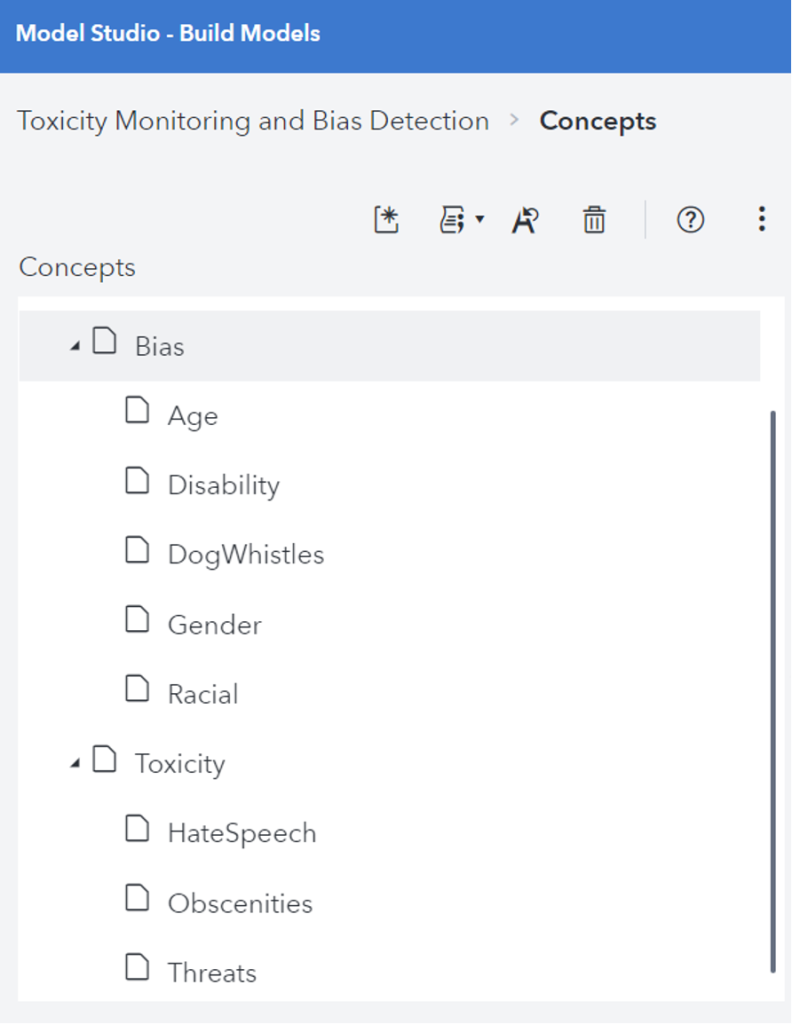

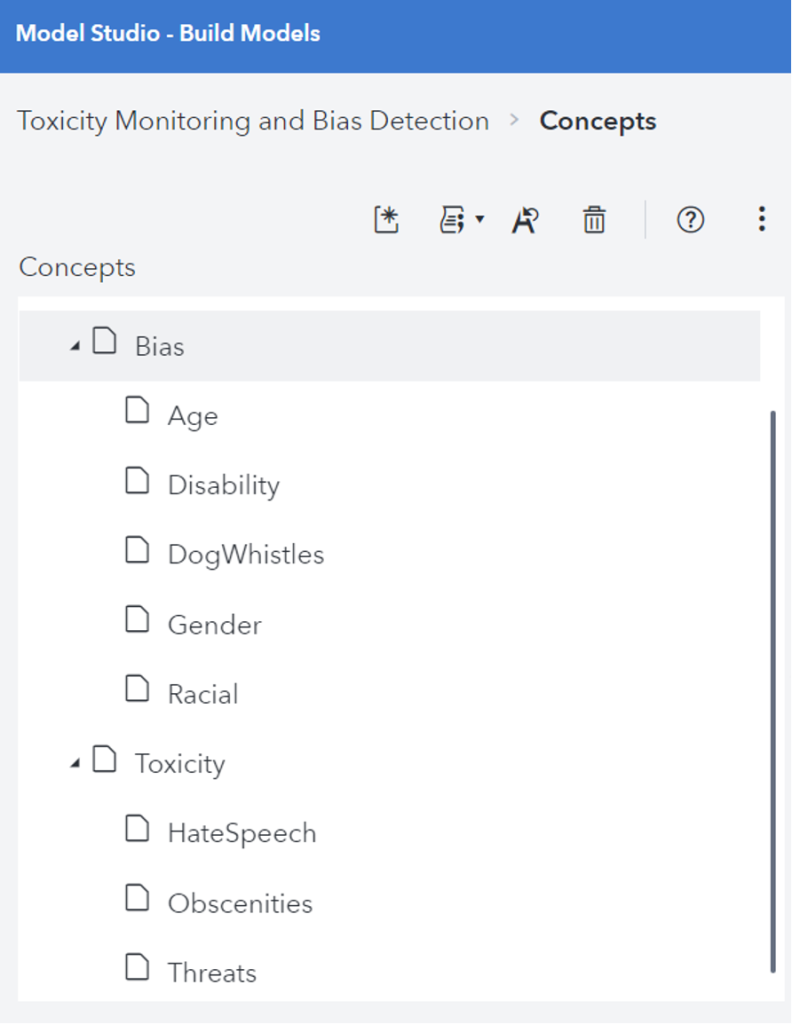

Introduction In recent years, Large Language Models (LLMs) have significantly advanced the field of artificial intelligence, particularly in Natural Language Processing (NLP) and understanding. These models, trained on vast datasets, enable machines to produce human-like text responses. However, their deployment raises critical concerns regarding toxicity, bias, and exploitation by malicious entities. It is imperative for organizations utilizing LLMs to navigate these challenges to ensure ethical and effective AI solutions. Understanding Toxicity and Bias in LLMs The capabilities of LLMs are accompanied by inherent risks, notably the inadvertent perpetuation of toxic and biased content. Toxicity encompasses the generation of harmful or abusive language, while bias refers to the reinforcement of stereotypes and prejudices. Such issues can result in discriminatory outputs that adversely affect individuals and communities. Addressing these challenges is essential for fostering trust and reliability in AI-driven applications. Main Goal and Achievement Strategies The primary goal outlined in the original post is to manage toxicity and bias within LLM outputs to ensure trustworthy and equitable interactions. Achieving this involves a multifaceted approach that includes: Data Transparency: Organizations must prioritize transparency regarding the datasets used for training LLMs. Understanding the training data’s composition aids in identifying potential biases and toxic language. Content Moderation Tools: Employing advanced content moderation APIs and tools can help mitigate the effects of toxicity and bias. For instance, utilizing technologies like SAS’s LITI can enhance the identification and prefiltering of problematic content. Human Oversight: Continuous human involvement is crucial to monitor and review outputs, ensuring that new types of harmful content are recognized and addressed promptly. Advantages of Addressing Toxicity and Bias Addressing toxicity and bias in LLMs presents several advantages: Enhanced User Trust: By reducing instances of harmful language, organizations can foster a more trusted relationship with users, ultimately leading to greater user adoption and satisfaction. Improved Data Quality: Implementing robust monitoring and prefiltering systems enhances the overall quality of data fed into LLMs, resulting in more accurate and relevant outputs. Adaptability to Unique Concerns: Organizations can tailor content moderation strategies to address specific issues pertinent to their operations, allowing for nuanced handling of language-related challenges. Despite these advantages, challenges persist, particularly regarding the dynamic nature of language and the emergence of new harmful trends over time. Continuous adaptation and enhancement of moderation systems are crucial to overcoming these obstacles. Future Implications of AI Developments As AI technology continues to evolve, the implications for managing toxicity and bias in LLMs are profound. Future developments may include: Refined Algorithms: Advances in machine learning may lead to more sophisticated algorithms capable of detecting subtle biases and toxic language, enhancing the efficacy of content moderation. Greater Emphasis on Ethical AI: There will likely be an increasing focus on ethical AI practices, driving organizations to adopt more responsible approaches to AI deployment, particularly in sensitive applications. Legislative and Regulatory Frameworks: Governments may introduce stricter regulations governing the use of AI technologies, necessitating that organizations comply with enhanced standards for managing bias and toxicity. Ultimately, the future of LLMs hinges on the commitment of organizations to develop and implement responsible AI practices that prioritize ethical considerations while leveraging the transformative capabilities of these models. Conclusion In summary, the integration of LLMs into various applications necessitates a vigilant approach to managing toxicity, bias, and the potential for manipulation by bad actors. By prioritizing data transparency, employing effective content moderation tools, and ensuring continuous human oversight, organizations can cultivate a safer and more equitable AI landscape. The ongoing evolution of AI technologies underscores the need for responsible practices that benefit society while minimizing harm. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Context and Importance of Prompt Injection in Large Language Models Large Language Models (LLMs) such as ChatGPT and Claude are designed to interpret and execute user instructions. However, this functionality presents a significant vulnerability: the phenomenon of prompt injection. This technique allows malicious actors to embed covert commands within standard user input, effectively manipulating the model’s behavior. This manipulation poses risks analogous to SQL injection attacks in database systems, leading to potentially harmful or misleading outputs. Understanding prompt injection and its implications is crucial for ensuring the security and reliability of AI systems, particularly in the data analytics sector. Defining Prompt Injection Prompt injection refers to the manipulation of AI systems by embedding misleading commands within user inputs. Attackers can disguise harmful instructions as innocuous text, leading the AI to execute unintended actions. This vulnerability arises from the LLMs’ inherent inability to differentiate between trusted system commands and untrusted user inputs, making them susceptible to exploitation. Main Goal of Addressing Prompt Injection Risks The primary objective of addressing prompt injection is to safeguard AI models from unauthorized manipulation, which can lead to data breaches, safety violations, and the dissemination of misleading information. By implementing robust measures to detect and mitigate prompt injections, organizations can enhance the integrity and reliability of their AI systems. This involves a comprehensive approach that includes input validation, structured prompt design, and output monitoring. Advantages of Mitigating Prompt Injection Risks Enhanced Data Security: Effective input sanitization can prevent unauthorized access to sensitive information, thereby protecting user data and organizational integrity. Improved Model Behavior: By controlling the prompts that the model executes, organizations can maintain alignment with intended use cases, minimizing the risk of harmful outputs. Compliance with Regulatory Standards: Proactively addressing prompt injection can help organizations adhere to privacy laws and regulations, reducing the risk of legal repercussions. Increased User Trust: When users are assured that AI systems are secure and reliable, their confidence in utilizing these technologies grows, fostering wider adoption. Adaptive Learning Opportunities: Continuous monitoring and testing can provide insights into model vulnerabilities, enabling iterative improvements in system design. Despite these advantages, it is essential to note that complete eradication of prompt injection risks is unattainable. Organizations must remain vigilant, as attackers continually evolve their tactics. Future Implications of AI Developments in Prompt Injection The future of AI development emphasizes the need for increasingly robust defenses against prompt injection as LLMs become more prevalent across various industries. The integration of advanced monitoring systems and machine learning algorithms for anomaly detection could provide enhanced resilience against these threats. Moreover, as AI applications expand into critical sectors, including healthcare and finance, ensuring the integrity of these systems will become paramount. Continuous investment in research and development, as well as collaboration across the tech industry, will be necessary to address the evolving landscape of prompt injection attacks effectively. Conclusion Prompt injection represents a significant challenge in the deployment of large language models, threatening the security and functionality of AI systems. While it is impossible to eliminate all risks associated with prompt injection, organizations can substantially mitigate these threats through a combination of proactive measures, ongoing vigilance, and adaptive strategies. As AI technologies continue to advance, prioritizing the security of these systems will be essential for fostering trust and ensuring their safe application in diverse fields. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Contextual Overview of the Low-Power Computer Vision Challenge 2026 The Low-Power Computer Vision Challenge (LPCV) has emerged as a significant event within the Computer Vision and Image Processing domain, fostering innovation and collaboration among industry professionals and academic researchers. This year, the LPCV features three distinct tracks, namely Image-to-Text Retrieval, Action Recognition in Video, and AI-Generated Images Detection. Each of these tracks offers substantial financial incentives, with over $10,000 in prizes designed to motivate participants and encourage advancements in low-power computing methodologies. The LPCV not only serves as a platform for competition but also acts as a catalyst for discussions and knowledge exchange among experts in the field. The challenge is set to take place on January 29th, 2026, featuring notable guests such as Yung-Hsiang Lu, a Professor of Electrical and Computer Engineering, who will provide insights into the event’s objectives and significance. This initiative aligns with the overarching goals of enhancing the efficiency and effectiveness of computer vision algorithms, which is crucial for various applications ranging from smart devices to autonomous systems. Main Goals of the LPCV and Achievement Strategies The primary goal of the LPCV is to stimulate innovation in low-power computer vision applications. This objective can be achieved through several strategies: 1. **Encouraging Participation**: By offering substantial prize money and recognition, the challenge motivates participants from diverse backgrounds to engage in the competition. This creates a rich environment for idea exchange and interdisciplinary collaboration. 2. **Fostering Research and Development**: The LPCV provides a structured framework for participants to test and refine their algorithms under competitive conditions, thereby pushing the boundaries of current capabilities in low-power computer vision. 3. **Promoting Real-World Applications**: Each competition track is designed to address real-world challenges, thereby ensuring that the research conducted is not only theoretical but also practical and applicable in industry settings. Through these strategies, the LPCV aims to catalyze advancements in computer vision technology that are not only innovative but also sustainable in terms of power consumption. Advantages of Participating in the LPCV Engagement in the LPCV offers several advantages for both individual participants and the broader field of computer vision: – **Financial Incentives**: With over $30,000 in prize money available, participants have a clear financial motivation to develop and showcase their innovative solutions. – **Visibility and Recognition**: Participants gain visibility within the research community, which can lead to future collaborations, funding opportunities, and career advancements. – **Skill Development**: Involvement in the challenge allows participants to hone their skills in algorithm design, testing, and real-time application deployment, which are invaluable in the rapidly evolving tech landscape. – **Networking Opportunities**: The LPCV serves as a gathering point for professionals in the field, facilitating networking and knowledge sharing that can lead to future partnerships and projects. Despite these advantages, some caveats exist, including the potential for high competition levels, which may deter newcomers, and the necessity for participants to have a solid foundational understanding of computer vision principles. Future Implications of AI Developments in Low-Power Computer Vision The intersection of artificial intelligence (AI) and low-power computer vision is poised to transform various industries, particularly as AI technologies continue to advance. Future implications include: – **Enhanced Algorithm Efficiency**: As AI techniques evolve, they will enable the development of more efficient algorithms that can operate on low-power devices without sacrificing performance, thereby broadening the applicability of computer vision technologies. – **Increased Adoption of Smart Devices**: With improvements in low-power computer vision, smart devices will become more capable, leading to increased adoption across sectors such as healthcare, automotive, and smart home technologies. – **Sustainability Focus**: As environmental concerns grow, the demand for energy-efficient solutions will drive innovation in low-power computer vision, aligning technological advancement with sustainability goals. In conclusion, the LPCV represents a vital opportunity for the advancement of low-power computer vision technology, fostering a competitive yet collaborative environment that is essential for addressing contemporary challenges in the field. As AI continues to develop, its integration with low-power computer vision will undoubtedly yield transformative impacts across various applications, ultimately shaping the future of this critical area of research. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Context and Importance of Unified Metadata in Data Engineering In the evolving landscape of data engineering, the integration of various platforms for effective data management is critical. As organizations endeavor to leverage data for analytics and artificial intelligence (AI) applications, the challenges they encounter often extend beyond mere coding issues. Data engineers, analysts, and scientists require a coherent understanding of data lineage, transformations, and operational expectations. This necessitates a unified approach to metadata management that encapsulates business context, technical metadata, and governance across diverse platforms such as Alation and Amazon SageMaker Unified Studio. When metadata is siloed within different teams or systems, inefficiencies arise, leading to duplicated efforts and conflicting definitions. A unified metadata foundation is essential for ensuring that data remains trustworthy, accessible, and actionable across various analytics and AI initiatives. The recent integration between Alation and Amazon SageMaker Unified Studio aims to address these challenges by synchronizing catalog metadata. This synchronization fosters collaboration between technical and business teams, allowing them to work with the same metadata, thereby enhancing data traceability and understanding across the data lifecycle. Main Goal and Its Achievement The primary objective of the Alation and Amazon SageMaker Unified Studio integration is to establish a unified metadata governance framework that enhances data discoverability, governance, and compliance. Achieving this goal involves the automatic synchronization of metadata between the two platforms, which allows for a centralized view of assets and their associated information. This integration provides clear provenance, allowing organizations to track data origins and ensure regulatory compliance effectively. By leveraging this integration, organizations can streamline their data workflows, reduce metadata duplication, and foster a more collaborative environment for data professionals. Structured Advantages of the Integration 1. **Enhanced Data Discoverability**: With a unified metadata layer, data engineers and scientists can quickly locate and access relevant datasets, significantly reducing the time spent on data discovery. 2. **Improved Collaboration**: The synchronization of metadata fosters better collaboration between technical teams using SageMaker and business teams utilizing Alation, reducing conflicts and misunderstandings. 3. **Consistent Governance**: A singular source of truth for metadata enables consistent governance policies, which is crucial for compliance with regulatory requirements and maintaining data integrity. 4. **Traceability and Auditability**: The integration ensures that all metadata updates include comprehensive provenance information, which supports audit trails necessary for compliance and data stewardship. 5. **Operational Efficiency**: By automating metadata extraction and synchronization, organizations can reduce manual efforts in metadata management, allowing data teams to focus on value-added activities such as analysis and insight generation. 6. **Security and Compliance Assurance**: The integration adheres to enterprise security practices by employing least-privilege access controls and encrypted communication, ensuring that sensitive data remains protected during synchronization processes. While these advantages are compelling, organizations must also consider potential limitations, such as the initial setup complexity and the need for ongoing governance to ensure metadata remains accurate and relevant. Future Implications of AI Developments As artificial intelligence continues to evolve, its integration within data engineering processes will likely deepen. Enhanced capabilities in AI are expected to automate data governance tasks further, including lineage tracking and anomaly detection in data quality. The future may also see the introduction of bi-directional synchronization capabilities, enabling metadata updates from either Alation or SageMaker, thus providing greater flexibility in managing data changes. This shift will empower organizations to adopt more agile and responsive data practices, aligning them with fast-paced business needs. In conclusion, the integration of Alation and Amazon SageMaker Unified Studio represents a significant advancement in unified metadata governance, positioning organizations to better navigate the complexities of data engineering while maximizing the value derived from their data assets. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Context In the realm of applied machine learning, effective network traffic monitoring is crucial for maintaining system performance and security. As machine learning practitioners increasingly leverage cloud-based infrastructures and distributed systems, understanding network traffic becomes paramount. This knowledge allows for the optimization of data pipelines, detection of anomalies, and safeguarding against potential cyber threats. The command-line utility ‘iftop’ serves as a lightweight yet powerful tool for monitoring network traffic in Linux environments, providing real-time insights that can significantly enhance the operational efficiency of machine learning workflows. Main Goal and Achievement The primary objective of utilizing the ‘iftop’ command is to facilitate the monitoring of incoming and outgoing network traffic on a specified interface. This command enables users to visualize data flow in a clear and concise manner, thereby simplifying the management of network resources. To achieve this goal, practitioners simply need to install ‘iftop’ using their preferred package manager and execute it with the appropriate interface specified. This straightforward approach empowers users to keep track of network activity and identify any irregularities that may affect machine learning applications. Advantages of Using ‘iftop’ Simplicity and Efficiency: The ‘iftop’ command presents network data in an easily interpretable table format, allowing for rapid assessment of bandwidth usage without the complexities often associated with more comprehensive tools. Real-Time Monitoring: ‘iftop’ provides real-time insights into network traffic, enabling practitioners to make informed decisions promptly, which is critical for maintaining the performance of machine learning models operating in dynamic environments. Minimal Resource Consumption: Unlike heavier graphical interfaces, ‘iftop’ operates with minimal resource overhead, making it suitable for environments where computational resources are limited. Customizability: While ‘iftop’ offers various options for advanced users, its basic functionality is easily accessible, allowing users to adapt it to their specific monitoring needs without being overwhelmed by options. Security Insights: By monitoring outgoing traffic, practitioners can detect potential unauthorized data transmissions or telemetry, which is particularly significant in environments dealing with sensitive data. Caveats and Limitations Interface Dependency: ‘iftop’ requires users to specify the correct network interface to monitor. Failure to do so may lead to misleading data, as it defaults to the first available interface. Command-Line Proficiency: While ‘iftop’ is relatively simple to use, it still necessitates a basic understanding of command-line operations, which may pose a barrier for some users. Limited Historical Data: ‘iftop’ primarily focuses on real-time traffic and does not retain historical data, which may be a limitation for users needing long-term analysis. Future Implications As the landscape of machine learning continues to evolve, the integration of artificial intelligence into network monitoring tools is likely to enhance their capabilities significantly. Future advancements may include predictive analytics, enabling practitioners to forecast network traffic patterns and automatically adjust resources accordingly. Moreover, machine learning algorithms could be employed to identify anomalies in data flows, thereby increasing the efficacy of security measures against potential cyber threats. Overall, the intersection of machine learning and network traffic monitoring will become increasingly critical as organizations strive to optimize their data-driven initiatives. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here