Contextualizing Greenhouse Utilization in February In February, agricultural activities in many regions are significantly subdued due to winter’s harsh conditions, with snow blanketing the ground and temperatures often dipping below freezing. During this dormant phase, agricultural producers face challenges in maintaining productivity, as most outdoor crops are inactive. However, the greenhouse environment presents an opportunity for continued agricultural activity, allowing for the cultivation of late spring transplants and fast-maturing crops that cater to early market demands. The effectiveness of greenhouse production is contingent upon the specific climatic conditions of the region and the type of greenhouse infrastructure employed. Farmers can optimize their operations by utilizing various greenhouse types, irrespective of their technological sophistication. Main Goal and Its Achievement The primary objective presented in the original post is to maximize agricultural productivity in February by leveraging greenhouse technology. This can be achieved by initiating the growth of crops that benefit from a controlled environment, such as tomatoes and peppers, which require consistent warmth and moisture. To facilitate this, farmers must ensure that their greenhouses are adequately equipped to maintain optimal temperature and humidity levels, enhancing germination and growth rates. The strategic planning of crop selection, coupled with timely execution, can significantly boost yield potential during the otherwise dormant winter months. Advantages and Evidence-Based Assertions Extended Growing Season: Greenhouses allow for the cultivation of crops outside of their natural growing seasons, effectively extending the agricultural calendar. This is particularly advantageous in regions with harsh winters. Controlled Environment: The enclosure of a greenhouse provides a stable climate, reducing exposure to extreme weather conditions. This control aids in minimizing plant stress, which can lead to higher yields. Pest and Disease Management: A greenhouse setting can mitigate pest intrusion and disease spread, particularly during the winter months, offering a protective barrier against common agricultural threats. Resource Efficiency: Greenhouses can optimize resource usage, including water and nutrients, through advanced irrigation and climate control systems that minimize waste. It is crucial to note, however, that not all greenhouse types offer the same benefits. For instance, simpler structures may lack the necessary ventilation and climate control features that more sophisticated greenhouses possess, which can limit their effectiveness in maintaining optimal growing conditions. Future Implications of AI in Greenhouse Management Looking ahead, advancements in artificial intelligence (AI) are poised to revolutionize greenhouse management practices. AI technologies can enhance data collection and analysis, allowing for more precise monitoring of environmental conditions such as temperature, humidity, and soil moisture. Smart sensors and IoT devices can facilitate real-time adjustments to greenhouse conditions, optimizing plant growth and resource utilization. Furthermore, predictive analytics can assist farmers in making informed decisions regarding crop selection and management strategies, ultimately improving productivity and sustainability in the agricultural sector. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Contextualizing Scarcity and Intelligence in AI The current landscape of artificial intelligence (AI) encapsulates a paradox where computational power and model size are often mistaken as direct indicators of intelligence. In a world where colossal models are lauded for their billions of parameters, the fundamental principle of efficiency risks being overlooked. Historical examples, such as interstellar spacecraft and the human brain, illustrate that effective intelligence does not stem from sheer size but rather from optimizing limited resources. This notion posits that scarcity should not be perceived merely as a limitation, but as a catalyst for innovation and advancement in AI. The Main Goal: Efficiency Over Size The crux of the original discussion advocates for a paradigm shift in AI development, emphasizing that true intelligence manifests through efficiency rather than scale. This goal can be realized by prioritizing the design of compact, effective models that maximize performance while minimizing resource consumption. As we navigate through the complexities of AI, the emphasis should be placed on how to derive greater value from limited inputs, thereby fostering a culture of innovation that thrives within constraints. Structured Advantages of Efficiency in AI Cost-Effectiveness: Smaller, specialized models can achieve substantial functional value at a reduced cost compared to their larger counterparts. For instance, deploying a model with a trillion parameters for a specific task can be likened to using a supercomputer for basic calculations, illustrating the inefficiency of overkill. Reduced Latency: Models designed for edge inference can process data locally, diminishing the delays associated with remote data access. This characteristic is particularly beneficial in applications requiring real-time responses. Enhanced Privacy: By conducting inference on-device, sensitive information remains local, mitigating the risks associated with data transmission to cloud servers. Lower Environmental Impact: As AI systems increasingly require extensive energy resources, efficient models can significantly reduce the carbon footprint associated with large-scale data centers. Resilience and Adaptability: Systems that thrive within resource constraints demonstrate greater resilience, enabling them to adapt to varying environmental conditions and operational demands. However, it is important to note that while transitioning to smaller models offers clear advantages, potential limitations exist. For example, certain complex tasks may still require more extensive models to achieve desired accuracy levels, leading to a careful balance that must be maintained between size and performance. Future Implications for AI Development As the field of AI continues to evolve, the focus on efficiency over size is expected to gain momentum. The rise of technologies such as TinyML and edge AI signifies a shift towards localized solutions that can operate independently of expansive infrastructure. This trend not only democratizes access to AI capabilities in resource-limited environments but also aligns with the global push for sustainable and energy-efficient practices. Future developments in AI are likely to emphasize architectures that prioritize efficiency, ultimately reshaping the landscape of machine learning and its applications across various sectors. Conclusion The evolution of artificial intelligence is increasingly characterized by a commitment to efficiency as a measure of intelligence. By embracing the constraints of scarcity, practitioners can innovate and refine their approaches to machine learning, leading to sustainable and effective AI solutions. The future of AI will not be dictated by the magnitude of data or models but by the ingenuity to extract more from less, ensuring that intelligence is defined by its capacity for effective problem-solving in a resource-conscious manner. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

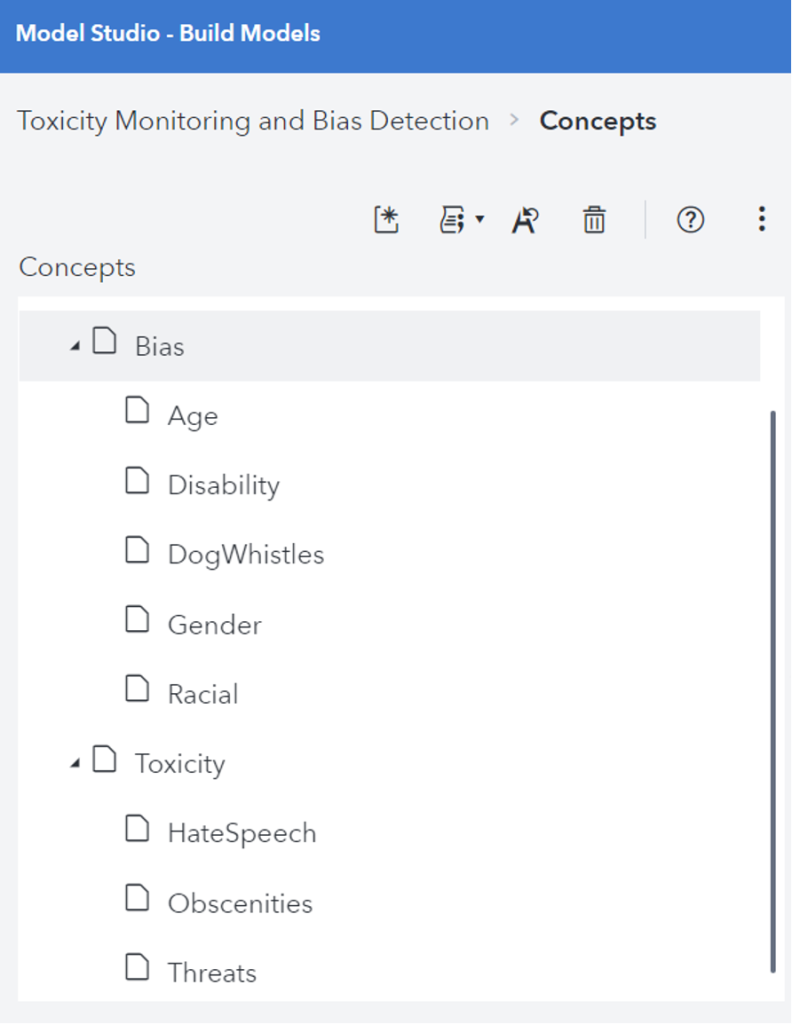

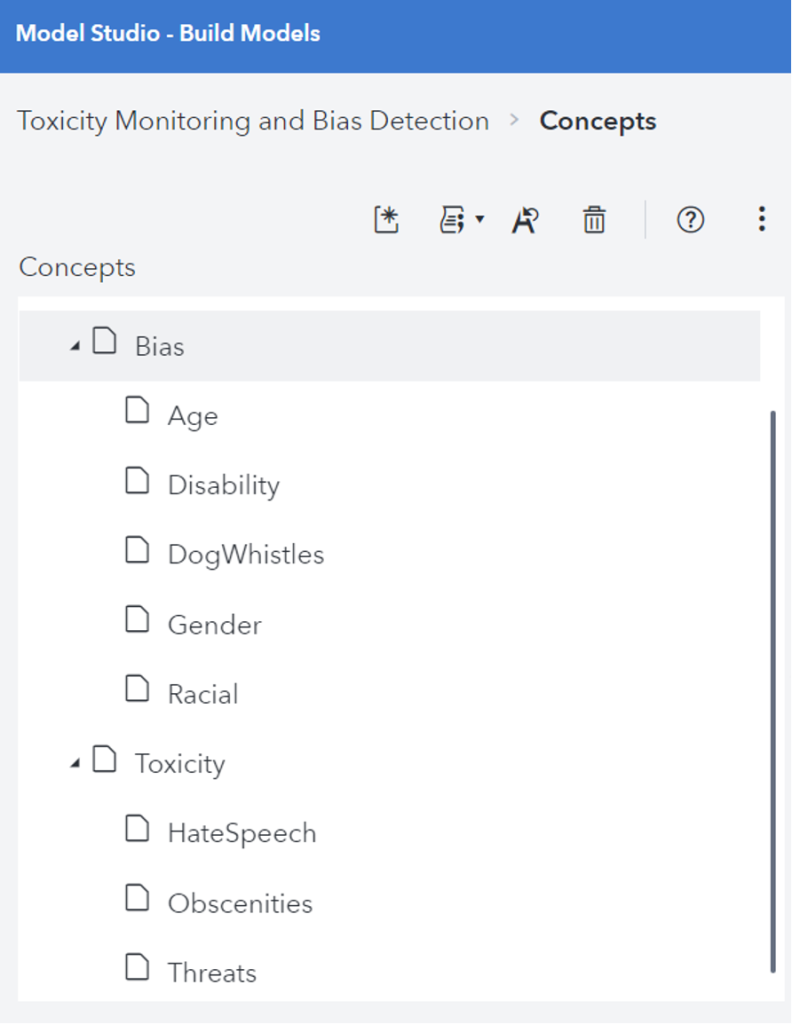

Introduction In recent years, Large Language Models (LLMs) have significantly advanced the field of artificial intelligence, particularly in Natural Language Processing (NLP) and understanding. These models, trained on vast datasets, enable machines to produce human-like text responses. However, their deployment raises critical concerns regarding toxicity, bias, and exploitation by malicious entities. It is imperative for organizations utilizing LLMs to navigate these challenges to ensure ethical and effective AI solutions. Understanding Toxicity and Bias in LLMs The capabilities of LLMs are accompanied by inherent risks, notably the inadvertent perpetuation of toxic and biased content. Toxicity encompasses the generation of harmful or abusive language, while bias refers to the reinforcement of stereotypes and prejudices. Such issues can result in discriminatory outputs that adversely affect individuals and communities. Addressing these challenges is essential for fostering trust and reliability in AI-driven applications. Main Goal and Achievement Strategies The primary goal outlined in the original post is to manage toxicity and bias within LLM outputs to ensure trustworthy and equitable interactions. Achieving this involves a multifaceted approach that includes: Data Transparency: Organizations must prioritize transparency regarding the datasets used for training LLMs. Understanding the training data’s composition aids in identifying potential biases and toxic language. Content Moderation Tools: Employing advanced content moderation APIs and tools can help mitigate the effects of toxicity and bias. For instance, utilizing technologies like SAS’s LITI can enhance the identification and prefiltering of problematic content. Human Oversight: Continuous human involvement is crucial to monitor and review outputs, ensuring that new types of harmful content are recognized and addressed promptly. Advantages of Addressing Toxicity and Bias Addressing toxicity and bias in LLMs presents several advantages: Enhanced User Trust: By reducing instances of harmful language, organizations can foster a more trusted relationship with users, ultimately leading to greater user adoption and satisfaction. Improved Data Quality: Implementing robust monitoring and prefiltering systems enhances the overall quality of data fed into LLMs, resulting in more accurate and relevant outputs. Adaptability to Unique Concerns: Organizations can tailor content moderation strategies to address specific issues pertinent to their operations, allowing for nuanced handling of language-related challenges. Despite these advantages, challenges persist, particularly regarding the dynamic nature of language and the emergence of new harmful trends over time. Continuous adaptation and enhancement of moderation systems are crucial to overcoming these obstacles. Future Implications of AI Developments As AI technology continues to evolve, the implications for managing toxicity and bias in LLMs are profound. Future developments may include: Refined Algorithms: Advances in machine learning may lead to more sophisticated algorithms capable of detecting subtle biases and toxic language, enhancing the efficacy of content moderation. Greater Emphasis on Ethical AI: There will likely be an increasing focus on ethical AI practices, driving organizations to adopt more responsible approaches to AI deployment, particularly in sensitive applications. Legislative and Regulatory Frameworks: Governments may introduce stricter regulations governing the use of AI technologies, necessitating that organizations comply with enhanced standards for managing bias and toxicity. Ultimately, the future of LLMs hinges on the commitment of organizations to develop and implement responsible AI practices that prioritize ethical considerations while leveraging the transformative capabilities of these models. Conclusion In summary, the integration of LLMs into various applications necessitates a vigilant approach to managing toxicity, bias, and the potential for manipulation by bad actors. By prioritizing data transparency, employing effective content moderation tools, and ensuring continuous human oversight, organizations can cultivate a safer and more equitable AI landscape. The ongoing evolution of AI technologies underscores the need for responsible practices that benefit society while minimizing harm. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Context and Importance of Prompt Injection in Large Language Models Large Language Models (LLMs) such as ChatGPT and Claude are designed to interpret and execute user instructions. However, this functionality presents a significant vulnerability: the phenomenon of prompt injection. This technique allows malicious actors to embed covert commands within standard user input, effectively manipulating the model’s behavior. This manipulation poses risks analogous to SQL injection attacks in database systems, leading to potentially harmful or misleading outputs. Understanding prompt injection and its implications is crucial for ensuring the security and reliability of AI systems, particularly in the data analytics sector. Defining Prompt Injection Prompt injection refers to the manipulation of AI systems by embedding misleading commands within user inputs. Attackers can disguise harmful instructions as innocuous text, leading the AI to execute unintended actions. This vulnerability arises from the LLMs’ inherent inability to differentiate between trusted system commands and untrusted user inputs, making them susceptible to exploitation. Main Goal of Addressing Prompt Injection Risks The primary objective of addressing prompt injection is to safeguard AI models from unauthorized manipulation, which can lead to data breaches, safety violations, and the dissemination of misleading information. By implementing robust measures to detect and mitigate prompt injections, organizations can enhance the integrity and reliability of their AI systems. This involves a comprehensive approach that includes input validation, structured prompt design, and output monitoring. Advantages of Mitigating Prompt Injection Risks Enhanced Data Security: Effective input sanitization can prevent unauthorized access to sensitive information, thereby protecting user data and organizational integrity. Improved Model Behavior: By controlling the prompts that the model executes, organizations can maintain alignment with intended use cases, minimizing the risk of harmful outputs. Compliance with Regulatory Standards: Proactively addressing prompt injection can help organizations adhere to privacy laws and regulations, reducing the risk of legal repercussions. Increased User Trust: When users are assured that AI systems are secure and reliable, their confidence in utilizing these technologies grows, fostering wider adoption. Adaptive Learning Opportunities: Continuous monitoring and testing can provide insights into model vulnerabilities, enabling iterative improvements in system design. Despite these advantages, it is essential to note that complete eradication of prompt injection risks is unattainable. Organizations must remain vigilant, as attackers continually evolve their tactics. Future Implications of AI Developments in Prompt Injection The future of AI development emphasizes the need for increasingly robust defenses against prompt injection as LLMs become more prevalent across various industries. The integration of advanced monitoring systems and machine learning algorithms for anomaly detection could provide enhanced resilience against these threats. Moreover, as AI applications expand into critical sectors, including healthcare and finance, ensuring the integrity of these systems will become paramount. Continuous investment in research and development, as well as collaboration across the tech industry, will be necessary to address the evolving landscape of prompt injection attacks effectively. Conclusion Prompt injection represents a significant challenge in the deployment of large language models, threatening the security and functionality of AI systems. While it is impossible to eliminate all risks associated with prompt injection, organizations can substantially mitigate these threats through a combination of proactive measures, ongoing vigilance, and adaptive strategies. As AI technologies continue to advance, prioritizing the security of these systems will be essential for fostering trust and ensuring their safe application in diverse fields. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Context of Kimwolf Botnet Threats in Corporate and Government Networks The emergence of the Kimwolf botnet presents a significant security threat to both corporate and governmental infrastructures. This Internet-of-Things (IoT) botnet has reportedly infiltrated over 2 million devices, leveraging compromised systems to conduct extensive distributed denial-of-service (DDoS) attacks and distribute other forms of malicious Internet traffic. The botnet’s unique capability to scan local networks for additional vulnerable IoT devices exacerbates its threat level, revealing alarming prevalence within critical sectors, including government and corporate environments. Main Goal of Addressing Kimwolf Botnet Risks The primary objective in addressing the Kimwolf botnet risk is to mitigate its infiltration into sensitive networks and prevent the abuse of compromised devices. This can be achieved through a multi-faceted approach that includes strengthening network security protocols, enhancing device authentication measures, and fostering awareness among IT professionals regarding the risks associated with unsecured IoT devices. Implementing robust cybersecurity frameworks can significantly reduce the potential for lateral movement by threat actors within corporate and governmental networks. Advantages of Addressing the Kimwolf Threat Enhanced Network Security: By identifying and patching vulnerabilities exploited by Kimwolf, organizations can fortify their defenses against similar threats, thereby reducing the risk of data breaches and DDoS attacks. Increased Awareness: Education and training for cybersecurity professionals on the nature of botnets like Kimwolf can lead to improved detection and response strategies, fostering a proactive security culture. Improved Device Management: Implementing stricter controls on IoT devices, especially those with pre-installed proxy software, can prevent unauthorized access and reduce susceptibility to malware infections. Regulatory Compliance: Organizations that proactively address these threats may find themselves better positioned to comply with cybersecurity regulations, thereby avoiding potential legal and financial repercussions. Future Implications of AI Developments in Cybersecurity As artificial intelligence (AI) continues to evolve, its integration into cybersecurity frameworks is likely to transform the landscape of threat detection and mitigation. AI systems can analyze vast amounts of network traffic in real-time, identifying patterns indicative of botnet activity, such as that exhibited by Kimwolf. This capability not only enhances the speed and accuracy of threat identification but also facilitates automated responses to emerging threats, thereby reducing the window of vulnerability for organizations. Moreover, as AI technology becomes increasingly sophisticated, it may also be employed by malicious actors to develop more advanced forms of malware, creating a continuous arms race between cybersecurity defenders and attackers. Therefore, the ongoing development and implementation of AI solutions will be crucial in maintaining robust defenses against evolving threats in the cybersecurity domain. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Contextual Overview The dynamic landscape of the healthcare and technology sectors necessitates continuous updates on personnel movements. The original blog post from STAT+ underscores the importance of sharing information regarding new hires, promotions, and other significant shifts within organizations. The healthcare sector, particularly the AI in Health and Medicine domain, is witnessing transformative changes that not only enhance operational efficiency but also affect the professional trajectory of HealthTech professionals. As organizations evolve, it becomes paramount to communicate these changes effectively, fostering a culture of transparency and engagement within the industry. Main Goal and Its Achievement The primary objective articulated in the original post is to encourage organizations within the HealthTech sphere to disseminate information regarding personnel changes. This goal can be achieved by creating an accessible platform where companies can submit their updates. Such a platform serves as a repository for industry insights, enhancing networking opportunities and facilitating the sharing of knowledge among HealthTech professionals. By participating in this exchange, organizations not only promote their internal achievements but also contribute to building a cohesive community that values innovation and collaboration. Advantages of Sharing Personnel Changes Enhanced Visibility: Regular updates on personnel movements increase the visibility of organizations and their leadership. This visibility is crucial in attracting potential talent and investors, thereby fostering growth and sustainability. Networking Opportunities: Sharing personnel changes creates avenues for networking within the industry. HealthTech professionals can connect with peers, mentors, and leaders, facilitating collaboration and knowledge-sharing. Reputation Management: By proactively sharing updates, organizations can manage their reputation positively. Transparency regarding staffing changes reflects a commitment to organizational health and workforce stability. Informed Decision-Making: Insight into personnel movements allows stakeholders, including investors and partners, to make better-informed decisions regarding collaborations and investments. While the advantages are substantial, it is important to recognize potential limitations. For instance, the effectiveness of such a communication strategy may vary based on the size of the organization and its market presence. Smaller entities may not receive the same level of attention as larger firms, potentially limiting the impact of their personnel updates. Future Implications of AI Developments The continued evolution of AI technologies in Health and Medicine is poised to significantly alter the landscape of personnel management and organizational dynamics. As AI systems become more integrated into healthcare processes, they will not only enhance operational efficiencies but also influence the skills and roles required within organizations. HealthTech professionals will need to adapt to new technologies, necessitating continuous learning and development. Additionally, as AI gains traction, the demand for skilled professionals who can bridge the gap between technology and healthcare will increase, further underscoring the importance of effective communication regarding personnel changes. In conclusion, the trend of sharing personnel updates, as highlighted in the original blog post, is pivotal for fostering a vibrant and interconnected HealthTech ecosystem. As the industry embraces AI advancements, the ability to effectively communicate these movements will prove invaluable for organizational growth and professional development. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Contextual Background: The Intersection of Politics and Predictive Analytics The analysis of electoral dynamics and candidate viability has evolved significantly in recent years, paralleling advancements in fields like sports analytics. The examination of electoral probabilities, exemplified by discussions surrounding candidates like Donald Trump, provides a framework for understanding predictive modeling in various domains, including sports. Just as political analysts utilize polling data to gauge candidate strength and predict outcomes, sports analysts employ statistical methodologies to assess player performance and team success. This convergence not only reflects the growing sophistication of data analytics but also highlights its relevance to sports data enthusiasts who seek to leverage predictive insights for competitive advantage. Main Objective: Understanding Predictive Modeling in Candidate Viability The primary goal of the original analysis is to determine the likelihood of a political candidate, specifically Donald Trump, securing a nomination based on current polling data. This is achieved through the application of statistical models that translate early polling averages into probabilistic forecasts. The insights drawn from these models serve to inform stakeholders about the dynamics of the political landscape, which can be paralleled to how sports analysts assess the probability of outcomes based on player and team statistics. By employing validated methodologies, analysts can provide a clearer picture of potential scenarios, which is crucial for strategic decision-making. Advantages of Predictive Modeling in Political and Sports Analytics Enhanced Decision-Making: Predictive models offer stakeholders actionable insights, enabling informed decisions in both political campaigns and sports management. Historical Contextualization: By referencing historical polling data and outcomes, models can highlight patterns that may influence current scenarios, enhancing the credibility of predictions. Dynamic Adjustments: Advanced models account for volatility and measurement error, allowing for real-time updates that reflect shifts in public sentiment or player performance. Comparative Analysis: Just as political analysts compare candidates, sports analysts can benchmark player performance against historical data to identify emerging trends. However, it is essential to acknowledge certain limitations inherent in predictive modeling: Data Volatility: Political landscapes and sports seasons are subject to rapid changes, which can impact the reliability of forecasts. Sample Size Constraints: Early polling data may not provide a comprehensive view, as it is often limited in terms of sample diversity and size. External Influences: Unforeseen events, such as scandals in politics or injuries in sports, can drastically alter the trajectory of predictions, complicating analyses. Future Implications of AI in Predictive Analytics The future of predictive analytics in both politics and sports is poised for transformative developments driven by advancements in artificial intelligence (AI). As AI technologies continue to evolve, they will enhance the granularity and accuracy of predictive models. For instance, machine learning algorithms can analyze vast datasets to identify complex patterns that traditional statistical methods may overlook. This capability will not only improve prediction accuracy but also facilitate real-time adjustments, allowing analysts to respond swiftly to dynamic changes. Moreover, the integration of AI in predictive analytics will empower sports data enthusiasts to explore new avenues for enhancing team performance and fan engagement. By harnessing AI-driven insights, stakeholders can develop more effective strategies, optimize player selections, and elevate overall decision-making processes in both the political and sports arenas. In conclusion, the evolving landscape of predictive analytics, fueled by AI advancements, holds significant promise for enhancing our understanding of candidate viability and sports performance alike. By leveraging data-driven insights, stakeholders can navigate complexities with greater confidence, ultimately leading to more informed outcomes in both domains. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Contextual Overview of the Low-Power Computer Vision Challenge 2026 The Low-Power Computer Vision Challenge (LPCV) has emerged as a significant event within the Computer Vision and Image Processing domain, fostering innovation and collaboration among industry professionals and academic researchers. This year, the LPCV features three distinct tracks, namely Image-to-Text Retrieval, Action Recognition in Video, and AI-Generated Images Detection. Each of these tracks offers substantial financial incentives, with over $10,000 in prizes designed to motivate participants and encourage advancements in low-power computing methodologies. The LPCV not only serves as a platform for competition but also acts as a catalyst for discussions and knowledge exchange among experts in the field. The challenge is set to take place on January 29th, 2026, featuring notable guests such as Yung-Hsiang Lu, a Professor of Electrical and Computer Engineering, who will provide insights into the event’s objectives and significance. This initiative aligns with the overarching goals of enhancing the efficiency and effectiveness of computer vision algorithms, which is crucial for various applications ranging from smart devices to autonomous systems. Main Goals of the LPCV and Achievement Strategies The primary goal of the LPCV is to stimulate innovation in low-power computer vision applications. This objective can be achieved through several strategies: 1. **Encouraging Participation**: By offering substantial prize money and recognition, the challenge motivates participants from diverse backgrounds to engage in the competition. This creates a rich environment for idea exchange and interdisciplinary collaboration. 2. **Fostering Research and Development**: The LPCV provides a structured framework for participants to test and refine their algorithms under competitive conditions, thereby pushing the boundaries of current capabilities in low-power computer vision. 3. **Promoting Real-World Applications**: Each competition track is designed to address real-world challenges, thereby ensuring that the research conducted is not only theoretical but also practical and applicable in industry settings. Through these strategies, the LPCV aims to catalyze advancements in computer vision technology that are not only innovative but also sustainable in terms of power consumption. Advantages of Participating in the LPCV Engagement in the LPCV offers several advantages for both individual participants and the broader field of computer vision: – **Financial Incentives**: With over $30,000 in prize money available, participants have a clear financial motivation to develop and showcase their innovative solutions. – **Visibility and Recognition**: Participants gain visibility within the research community, which can lead to future collaborations, funding opportunities, and career advancements. – **Skill Development**: Involvement in the challenge allows participants to hone their skills in algorithm design, testing, and real-time application deployment, which are invaluable in the rapidly evolving tech landscape. – **Networking Opportunities**: The LPCV serves as a gathering point for professionals in the field, facilitating networking and knowledge sharing that can lead to future partnerships and projects. Despite these advantages, some caveats exist, including the potential for high competition levels, which may deter newcomers, and the necessity for participants to have a solid foundational understanding of computer vision principles. Future Implications of AI Developments in Low-Power Computer Vision The intersection of artificial intelligence (AI) and low-power computer vision is poised to transform various industries, particularly as AI technologies continue to advance. Future implications include: – **Enhanced Algorithm Efficiency**: As AI techniques evolve, they will enable the development of more efficient algorithms that can operate on low-power devices without sacrificing performance, thereby broadening the applicability of computer vision technologies. – **Increased Adoption of Smart Devices**: With improvements in low-power computer vision, smart devices will become more capable, leading to increased adoption across sectors such as healthcare, automotive, and smart home technologies. – **Sustainability Focus**: As environmental concerns grow, the demand for energy-efficient solutions will drive innovation in low-power computer vision, aligning technological advancement with sustainability goals. In conclusion, the LPCV represents a vital opportunity for the advancement of low-power computer vision technology, fostering a competitive yet collaborative environment that is essential for addressing contemporary challenges in the field. As AI continues to develop, its integration with low-power computer vision will undoubtedly yield transformative impacts across various applications, ultimately shaping the future of this critical area of research. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Context and Importance of Unified Metadata in Data Engineering In the evolving landscape of data engineering, the integration of various platforms for effective data management is critical. As organizations endeavor to leverage data for analytics and artificial intelligence (AI) applications, the challenges they encounter often extend beyond mere coding issues. Data engineers, analysts, and scientists require a coherent understanding of data lineage, transformations, and operational expectations. This necessitates a unified approach to metadata management that encapsulates business context, technical metadata, and governance across diverse platforms such as Alation and Amazon SageMaker Unified Studio. When metadata is siloed within different teams or systems, inefficiencies arise, leading to duplicated efforts and conflicting definitions. A unified metadata foundation is essential for ensuring that data remains trustworthy, accessible, and actionable across various analytics and AI initiatives. The recent integration between Alation and Amazon SageMaker Unified Studio aims to address these challenges by synchronizing catalog metadata. This synchronization fosters collaboration between technical and business teams, allowing them to work with the same metadata, thereby enhancing data traceability and understanding across the data lifecycle. Main Goal and Its Achievement The primary objective of the Alation and Amazon SageMaker Unified Studio integration is to establish a unified metadata governance framework that enhances data discoverability, governance, and compliance. Achieving this goal involves the automatic synchronization of metadata between the two platforms, which allows for a centralized view of assets and their associated information. This integration provides clear provenance, allowing organizations to track data origins and ensure regulatory compliance effectively. By leveraging this integration, organizations can streamline their data workflows, reduce metadata duplication, and foster a more collaborative environment for data professionals. Structured Advantages of the Integration 1. **Enhanced Data Discoverability**: With a unified metadata layer, data engineers and scientists can quickly locate and access relevant datasets, significantly reducing the time spent on data discovery. 2. **Improved Collaboration**: The synchronization of metadata fosters better collaboration between technical teams using SageMaker and business teams utilizing Alation, reducing conflicts and misunderstandings. 3. **Consistent Governance**: A singular source of truth for metadata enables consistent governance policies, which is crucial for compliance with regulatory requirements and maintaining data integrity. 4. **Traceability and Auditability**: The integration ensures that all metadata updates include comprehensive provenance information, which supports audit trails necessary for compliance and data stewardship. 5. **Operational Efficiency**: By automating metadata extraction and synchronization, organizations can reduce manual efforts in metadata management, allowing data teams to focus on value-added activities such as analysis and insight generation. 6. **Security and Compliance Assurance**: The integration adheres to enterprise security practices by employing least-privilege access controls and encrypted communication, ensuring that sensitive data remains protected during synchronization processes. While these advantages are compelling, organizations must also consider potential limitations, such as the initial setup complexity and the need for ongoing governance to ensure metadata remains accurate and relevant. Future Implications of AI Developments As artificial intelligence continues to evolve, its integration within data engineering processes will likely deepen. Enhanced capabilities in AI are expected to automate data governance tasks further, including lineage tracking and anomaly detection in data quality. The future may also see the introduction of bi-directional synchronization capabilities, enabling metadata updates from either Alation or SageMaker, thus providing greater flexibility in managing data changes. This shift will empower organizations to adopt more agile and responsive data practices, aligning them with fast-paced business needs. In conclusion, the integration of Alation and Amazon SageMaker Unified Studio represents a significant advancement in unified metadata governance, positioning organizations to better navigate the complexities of data engineering while maximizing the value derived from their data assets. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Contextualizing AI in Medical Imaging The landscape of healthcare is evolving with the integration of artificial intelligence (AI), particularly in the domain of medical imaging. The concept of automating medical report generation through AI systems is gaining traction as a means to enhance the efficiency and accuracy of radiological practices. This approach, exemplified by the Universal Report Generation (UniRG) framework, leverages multimodal reinforcement learning to align the training of AI models with the complexities of real-world clinical settings. By addressing the variability in reporting practices across different healthcare providers, UniRG aims to produce clinically relevant radiology reports, thereby alleviating the burdens on healthcare professionals while simultaneously improving workflow efficiency. Main Goals of UniRG The central objective of UniRG is to establish a robust framework for generating medical imaging reports that are both accurate and aligned with clinical needs. This goal is pursued through a distinctive approach that combines supervised fine-tuning with reinforcement learning. The reinforcement learning component is particularly crucial, as it enables the model to optimize its performance based on clinically meaningful evaluation metrics, rather than merely replicating existing report formats. By doing so, UniRG seeks to overcome the limitations of traditional models, which often struggle with generalization across diverse clinical practices and datasets. Advantages of UniRG 1. **Enhanced Efficiency**: AI-driven report generation significantly reduces the time and effort required from radiologists, allowing them to focus on more critical aspects of patient care. 2. **Improved Quality of Reports**: Through reinforcement learning, UniRG enhances the accuracy of generated reports, capturing essential clinical details that may be overlooked by conventional models. 3. **Generalization Across Diverse Settings**: UniRG demonstrates robustness across various institutions and patient demographics, minimizing the risk of overfitting to specific datasets. This is achieved through training on extensive and diverse data sources. 4. **Fewer Clinically Significant Errors**: The explicit optimization for clinical correctness results in reports that are not only linguistically coherent but also clinically valid, thus reducing the likelihood of misleading findings. 5. **Longitudinal Reporting Capabilities**: UniRG effectively incorporates historical patient data, allowing for more meaningful comparisons between current and previous imaging results. This feature is vital for assessing disease progression or resolution. 6. **Scalability**: The framework can be adapted to various imaging modalities and integrated with additional patient data, such as laboratory results and clinical notes, facilitating broader applications in medical practice. Limitations and Caveats While the advancements presented by UniRG are promising, there are limitations to consider. The framework is currently a research prototype and has not yet been validated for clinical use. Furthermore, the effectiveness of reinforcement learning relies heavily on the quality of the reward signals used during training. If these signals are poorly defined or do not reflect real-world clinical priorities, the model may still produce suboptimal results. Future Implications of AI in Medical Imaging The trajectory of AI in medical imaging suggests a future where automated systems significantly enhance diagnostic processes. As reinforcement learning models like UniRG continue to evolve, they are likely to set new benchmarks for accuracy and efficiency in medical report generation. The potential for integration with other data types, such as electronic health records and genomic data, may lead to a holistic view of patient health, further refining the decision-making processes in clinical settings. Moreover, advancements in AI are expected to facilitate personalized medicine, enabling tailored treatments based on comprehensive patient data analyses. In conclusion, the ongoing developments in AI-powered medical imaging, as exemplified by the UniRG framework, offer profound opportunities to improve healthcare delivery. By focusing on clinically aligned performance metrics and leveraging cutting-edge machine learning techniques, these innovations pave the way for more effective and reliable medical practices. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here