Contextual Overview of Advanced Account Security in AI

In a rapidly evolving digital landscape, the security of personal and professional accounts, particularly those associated with artificial intelligence platforms such as ChatGPT and Codex, has become paramount. OpenAI’s recent announcement regarding the implementation of an optional “Advanced Account Security” feature reflects the growing concern over cyber threats targeting sensitive user data. This initiative is particularly relevant for individuals who utilize AI for high-stakes endeavors, including journalists, political figures, and researchers, who may face elevated risks of account compromise. OpenAI’s Advanced Account Security introduces stringent access controls designed to fortify account defenses against potential takeover attempts, marking a significant advancement in the domain of cybersecurity.

Main Goals of Advanced Account Security

The primary objective of OpenAI’s Advanced Account Security is to enhance user protection by mitigating the risks associated with account takeovers. This is achieved through the introduction of physical security keys and passkeys, which replace traditional password-based authentication methods. By eliminating reliance on potentially vulnerable recovery options such as email and SMS, OpenAI aims to establish a more robust security framework that significantly reduces the likelihood of phishing attacks. This initiative is not merely a response to the proliferation of AI technologies but is part of a broader cybersecurity strategy aimed at safeguarding sensitive information in an increasingly interconnected world.

Advantages of Implementing Advanced Account Security

1. **Enhanced Security Protocols**: The requirement for physical security keys or passkeys provides a stronger defense against phishing attacks. This measure effectively creates a multi-factor authentication (MFA) environment, reducing the risk of unauthorized access.
2. **Elimination of Vulnerable Recovery Options**: By removing traditional recovery routes, OpenAI prevents attackers from exploiting support channels through social engineering tactics. This change ensures that only users who possess the necessary recovery keys can regain access to their accounts.
3. **Increased User Awareness and Control**: The introduction of alerts for each login attempt allows users to monitor their account activity closely. This feature provides an additional layer of awareness, enabling users to detect and respond to suspicious activities promptly.
4. **Default Privacy Settings**: For users opting into Advanced Account Security, conversations are automatically excluded from being used for model training. This default setting reinforces user privacy and control over personal data.
5. **Support for Cybersecurity Professionals**: The requirement for members of OpenAI’s Trusted Access for Cyber program to enable Advanced Account Security signifies a commitment to implementing best practices in cybersecurity protocols. This ensures that professionals in the field are equipped with the necessary tools to safeguard sensitive information effectively.

Caveats and Limitations

While the Advanced Account Security feature presents numerous advantages, it is essential to acknowledge certain limitations. Users must adapt to the new authentication methods, which may introduce initial challenges, especially for those accustomed to traditional password systems. Furthermore, the inability to seek assistance from OpenAI’s support team during account recovery may deter some users from enabling this feature, particularly those who may not have robust security practices already in place.

Future Implications of AI in Cybersecurity

Looking ahead, the integration of artificial intelligence in cybersecurity is poised to transform the landscape significantly. As AI technologies continue to advance, they will likely enable more sophisticated security mechanisms, facilitating proactive threat detection and response. The emergence of AI-driven security solutions may automate many aspects of account security, providing enhanced protection while simultaneously reducing the burden on users to manage their security practices. Moreover, as AI services become increasingly mainstream, the demand for comprehensive security measures will escalate, necessitating collaboration between AI developers and cybersecurity experts. This partnership will be vital in addressing emerging threats and ensuring that innovations in AI are accompanied by equally robust security protocols to protect sensitive user data.

In conclusion, the introduction of OpenAI’s Advanced Account Security illustrates a proactive approach to safeguarding user accounts in an era defined by increasing cyber threats. By implementing advanced security measures, OpenAI sets a precedent for other technology companies to follow, highlighting the importance of prioritizing user safety in the rapidly evolving field of artificial intelligence.

Disclaimer

The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly.

Contextual Overview

The geopolitical dynamics surrounding U.S.-China relations have profound implications for global markets, particularly in the context of the ongoing tensions surrounding Iran. Recent discussions indicate that the forthcoming summit between U.S. President Donald Trump and Chinese President Xi Jinping will heavily center on the Iran conflict, potentially overshadowing critical economic issues such as tariffs and rare earth mineral supplies. These developments occur against the backdrop of a complex global economic landscape where the intersection of political decisions and financial markets necessitates a keen understanding of AI’s role in finance and FinTech.

Main Goals and Their Achievement

The primary goal emerging from the context of the summit is to navigate the intricate balance between geopolitical stability and economic growth. Achieving this goal requires focused dialogue on mitigating tensions, particularly the Iranian conflict, while simultaneously fostering an environment conducive to trade and investment. Successful negotiation strategies could lead to decreased tariffs and improved access to critical resources, including rare earth elements essential for advanced technology applications in finance and FinTech. The convergence of these discussions could facilitate a more stable market environment, benefiting financial professionals by providing clearer operational frameworks.

Advantages of Resolution and Negotiation

- Increased Market Stability: A successful resolution to geopolitical tensions can lead to enhanced market stability. As evidenced by past trends, geopolitical clarity supports investor confidence and can lead to stock market gains.
- Improved Trade Relations: Engagement at the summit may lead to enhanced trade relations between the U.S. and China, which is crucial for American corporations reliant on Chinese manufacturing and markets.
- Access to Rare Earth Elements: Resolving trade disputes could facilitate more predictable access to rare earth minerals, vital for the production of AI technologies that underpin financial services.
- Focus on Collaborative Technologies: Addressing mutual concerns regarding technology access and cooperation on issues such as AI can pave the way for advancements that enhance operational efficiency in financial services.
- Potential for Increased Investment: Successful diplomatic engagements can stimulate increased investments in both nations, leading to a broader economic impact that benefits financial professionals.

Caveats and Limitations

While the potential advantages of resolving geopolitical tensions are significant, certain limitations must be acknowledged. The volatility of international relations can lead to sudden shifts in policy that may undermine established agreements. Furthermore, the complexities of U.S.-China relations, particularly concerning technology transfer and tariffs, may result in protracted negotiations that delay tangible benefits. Additionally, economic improvements may not be evenly distributed, potentially disadvantaging smaller financial institutions or sectors less integrated into global supply chains.

Future Implications of AI in Finance and FinTech

As we look to the future, the continued evolution of AI technologies in finance and FinTech will significantly influence the landscape of international economic relations. Enhanced AI capabilities can streamline trade processes, improve risk assessment models, and facilitate real-time data analysis, thereby enabling financial professionals to make more informed decisions. Moreover, as geopolitical tensions shape the regulatory environment, the ability of AI systems to adapt to new compliance requirements will be paramount. Financial institutions that successfully integrate AI into their operational strategies will likely gain competitive advantages, particularly in navigating the complexities associated with tariffs and international trade agreements.

Contextual Analysis of Mookie Betts’ Injury and the Role of AI in Sports Analytics

The recent update regarding the Los Angeles Dodgers outfielder Mookie Betts, who has been on the injured list for a significant duration due to an oblique injury, serves as a pertinent case study for the intersection of sports performance, injury management, and analytics. His potential return to play, imminent after a five-week hiatus, underscores the criticality of timely data in assessing athlete readiness and the efficacy of rehabilitation protocols. Betts’ situation also highlights how artificial intelligence (AI) is increasingly shaping decision-making processes in sports, particularly in the realm of athlete health and performance analytics.

Main Goals and Achievements

The primary goal articulated in the context of Betts’ injury is to facilitate his swift and safe return to competitive play. Achieving this necessitates a multi-faceted approach that includes comprehensive medical assessments, rehabilitation strategies, and performance analytics. By employing AI-driven insights, teams can monitor recovery metrics, predict outcomes based on historical data, and tailor rehabilitation regimes to enhance efficiency and efficacy.

The integration of AI into sports analytics can provide teams with the ability to analyze vast datasets related to player health and performance. For instance, tracking biometric data such as heart rate variability, muscle fatigue, and recovery time can inform coaching staff and medical teams about an athlete’s readiness to return to the field.

Advantages of AI in Sports Analytics

1. **Enhanced Injury Prediction and Management**: AI algorithms can analyze historical data to identify patterns associated with specific injuries. This predictive capability allows teams to proactively manage player workloads and reduce the risk of re-injury.
2. **Data-Driven Rehabilitation Protocols**: AI can assist in tailoring rehabilitation programs based on individual recovery patterns, ensuring that athletes like Betts receive personalized care that aligns with their unique physiological responses to injury.
3. **Performance Monitoring**: Continuous data collection allows for real-time monitoring of an athlete’s performance metrics, enabling coaches to make informed decisions about training intensities and game participation.
4. **Decision Support Systems**: AI tools can provide actionable insights that support coaching staff in making strategic decisions regarding player usage, especially in high-stakes scenarios such as playoff games.
5. **Cost Efficiency**: By optimizing rehabilitation and training processes, AI can contribute to reducing costs associated with prolonged player absences and ineffective rehabilitation strategies.

While these advantages are significant, it is essential to acknowledge caveats. The reliance on AI necessitates high-quality data inputs, and erroneous data can lead to misleading conclusions. Additionally, the subjective nature of sports performance must be considered, as human factors cannot be entirely quantified by AI models.

Future Implications of AI Developments in Sports Analytics

The future of AI in sports analytics promises to further revolutionize how teams approach injury management and performance optimization. As machine learning models become more sophisticated, they will likely incorporate a wider array of data sources, including genetic information, nutrition, and psychological factors, allowing for a holistic view of athlete health. Moreover, advancements in AI technology may lead to the development of real-time analytics platforms that provide immediate feedback during games, allowing coaches to make instant strategic adjustments based on player performance and health data. Such innovations could transform game management and enhance the overall spectator experience by providing deeper insights into player dynamics.

In summary, the case of Mookie Betts exemplifies the critical intersection of athlete health, performance analytics, and the role of AI in modern sports. As technology continues to evolve, its integration into sports analytics will increasingly shape how teams approach player management, ultimately enhancing both athlete performance and organizational success.

Contextual Overview of Holography in Computer Vision

The concept of holography, once relegated to the realms of science fiction, has begun to manifest itself in tangible applications that promise to revolutionize the fields of computer vision and image processing. Pioneering efforts in this domain are exemplified by the work of Shawn Frayne, co-founder and CEO of Looking Glass Factory. With over two decades of dedication, Frayne has successfully developed holographic displays that facilitate immersive experiences without the necessity of headgear or dark environments. This breakthrough technology allows multiple users to engage with three-dimensional holographic content in a shared space, thus democratizing access to advanced visualizations.

Main Goal and Achievement Pathways

The primary objective of this technological advancement is to create a seamless interface for interacting with 3D holograms, thereby enhancing user engagement and understanding. This is particularly relevant to professionals in the fields of computer vision and image processing, as it enables richer data interpretation and presentation. Achieving this goal requires ongoing collaboration among experts in computer vision, artificial intelligence, and display technologies, as well as active participation in forums like OSCCA, where thought leaders converge to discuss innovations and their implications.

Advantages of Holographic Displays

- Enhanced User Interaction: Holographic displays permit multiple viewers to interact with 3D content simultaneously, fostering collaborative environments that are critical in research and education.
- Elimination of Physical Barriers: The absence of glasses or headgear eliminates discomfort and allows for spontaneous engagement, making the technology more accessible to a broader audience.
- Integration of AI and Computer Vision: The convergence of AI with holographic technology enables real-time processing of visual data, which can significantly enhance the capabilities of computer vision applications.
- Innovative Communication Tools: This technology serves as a powerful medium for presenting complex data sets in a more intuitive manner, facilitating better understanding and retention among users.

However, it is crucial to acknowledge certain limitations associated with this technology. The initial cost of implementation and the need for specialized content creation tools may pose barriers to widespread adoption. Additionally, as with any emerging technology, continuous advancements are necessary to keep pace with user expectations and application requirements.

Future Implications of AI in Holography

The future of holographic technology in conjunction with artificial intelligence holds significant promise. As AI algorithms become more sophisticated, they will enhance the interactivity and realism of holographic displays, enabling predictive analytics and deeper insights into visual data. The anticipated developments in this sphere are likely to catalyze advancements in various applications, including medical imaging, education, and industrial design. Vision scientists and researchers will find themselves at the forefront of this evolution, leveraging these tools to push the boundaries of what is possible in visual representation and interpretation.

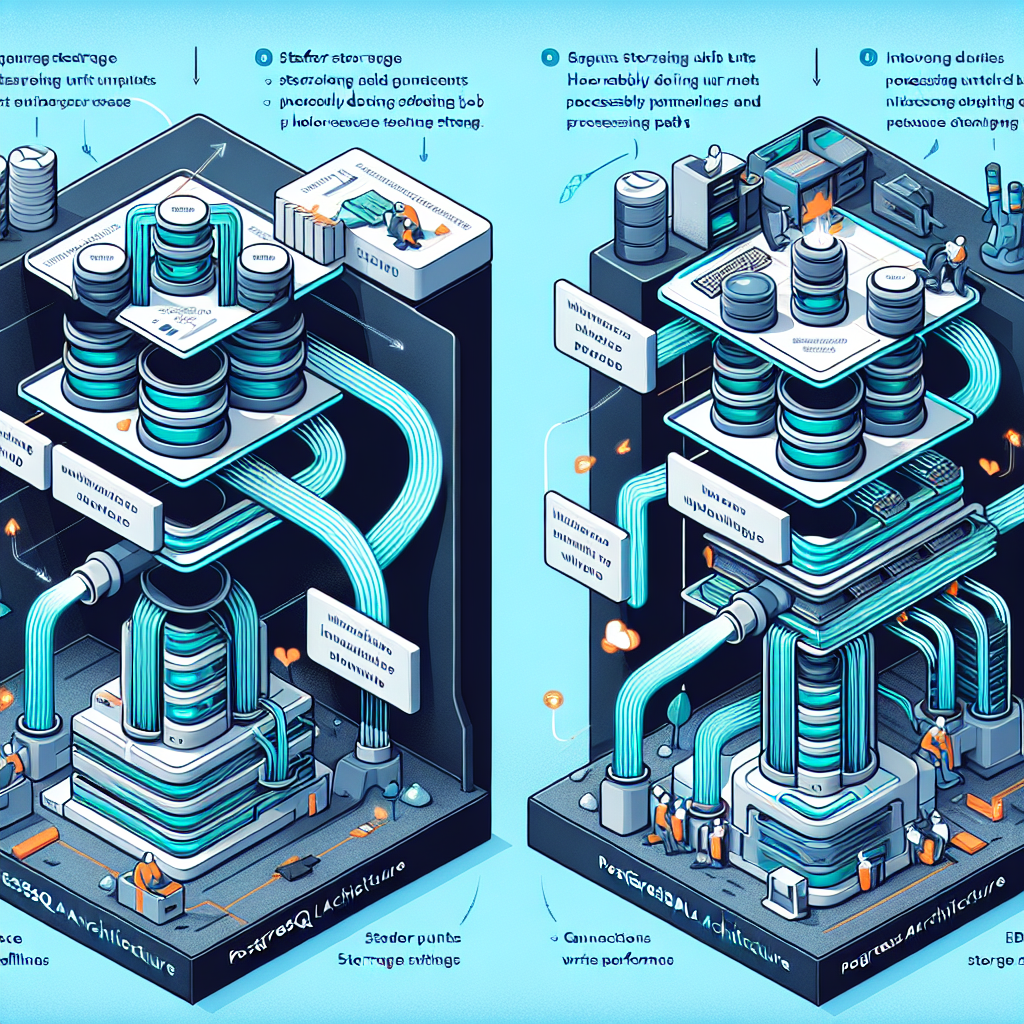

Contextualizing Lakebase Architecture in Big Data Engineering

In the realm of Big Data Engineering, the architecture of data storage and computation plays a pivotal role in determining system performance and operational efficiency. The advent of lakebase architecture embodies a paradigm shift in this context, wherein compute and storage are deliberately decoupled. This separation is designed not only for operational flexibility (enabling scaling, branching, and instant recovery) but also to unlock significant performance enhancements. By offloading work from traditional Postgres compute to a more distributed storage system, lakebase architecture offers solutions to longstanding bottlenecks, thereby revolutionizing how data engineers handle write-heavy workloads.

Main Goal: Achieving Performance Optimization

The primary objective articulated through the exploration of lakebase architecture is to achieve a fivefold improvement in the write performance of managed Postgres instances. This goal can be realized by leveraging the unique structural advantages afforded by the separation of compute and storage layers. In traditional Postgres deployments, durability mechanisms, while crucial, introduce significant overhead, particularly under high write loads. By re-engineering these mechanisms within the lakebase framework, engineers can effectively eliminate the bottlenecks associated with full page writes, thereby drastically enhancing write throughput and overall system performance.

Advantages of Lakebase Architecture

- Network Efficiency: The lakebase architecture promotes a 94% reduction in network traffic by allowing compute nodes to transmit only the changes (deltas) rather than complete page images. This optimization significantly alleviates bandwidth demands, enhancing system responsiveness.
- Scalability: By distributing workloads across multiple pageservers, lakebase architecture enhances scalability. This shift reduces the burden on a single Postgres writer, facilitating independent scaling of storage resources in response to increasing demands.
- Optimal Read Performance: The architecture ensures that image generation is based on actual changes to data pages rather than periodic checkpoint processes, maintaining efficient read operations and minimizing latency spikes.
- Improved Transaction Throughput: Real-world benchmarks demonstrate substantial increases in transaction throughput, with improvements scaling dramatically with compute instance size. For instance, a 32-vCPU instance exhibited throughput gains exceeding 450% due to optimized WAL generation.
- Enhanced Stability in Latency: The architecture’s reconfiguration has led to a reduction in read latencies, with reports indicating a decrease of 30% to 50% in p99 and p50 read latencies, contributing to a more stable user experience.

Future Implications: The Role of AI in Data Engineering

Looking forward, the intersection of lakebase architecture and artificial intelligence (AI) presents exciting opportunities for further enhancing data engineering practices. As AI technologies evolve, they may facilitate even more intelligent data processing and management systems. For instance, AI-driven algorithms could optimize data retrieval processes by intelligently predicting access patterns, thereby preemptively managing data caching and storage. Moreover, the application of machine learning techniques could enable adaptive adjustments to compute and storage configurations in real time, further enhancing performance and efficiency in managing large-scale data environments.
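To make the lakebase summary's point about full page writes versus deltas concrete, the following is a rough back-of-the-envelope sketch in Python. It is a toy model, not the benchmark behind the 94% figure quoted above: the function names, the workload numbers, and the assumed average delta size are all hypothetical, and the model ignores record headers, hole removal, and compression. The only fixed fact it relies on is PostgreSQL's default 8 KB block size.

```python
PAGE_SIZE_BYTES = 8192  # default PostgreSQL block size


def wal_bytes(first_touches: int, later_updates: int,
              delta_bytes: int, full_page_images: bool) -> int:
    """Estimate WAL volume for one checkpoint interval (toy model).

    first_touches: pages modified for the first time since the last checkpoint
    later_updates: further modifications to pages that were already touched
    delta_bytes:   assumed average size of a change-only (delta) record
    """
    # With full page writes, the first change to each page also carries
    # a complete image of that page.
    image_cost = PAGE_SIZE_BYTES if full_page_images else 0
    return first_touches * (delta_bytes + image_cost) + later_updates * delta_bytes


def traffic_reduction(first_touches: int, later_updates: int, delta_bytes: int) -> float:
    """Fraction of WAL traffic saved by shipping deltas only."""
    with_images = wal_bytes(first_touches, later_updates, delta_bytes, True)
    deltas_only = wal_bytes(first_touches, later_updates, delta_bytes, False)
    return 1 - deltas_only / with_images


if __name__ == "__main__":
    # Hypothetical workload: 1,000 first-touched pages, 1,000 repeat updates,
    # 200-byte deltas. These inputs are illustrative, not measured.
    saved = traffic_reduction(1000, 1000, 200)
    print(f"estimated reduction: {saved:.1%}")
```

The saving in this model depends heavily on how many pages are touched for the first time after each checkpoint, which is why write-heavy workloads with frequent checkpoints are the ones most affected by full page writes.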

Context: Earning Customer Trust in the Age of AI

In the contemporary landscape of commerce, particularly within the realms of AI-Powered Marketing, the establishment of customer trust is paramount. Trust serves as the foundation for sustained business relationships, instilling confidence in both the technology employed and the individuals behind the organization. Effective trust-building is not an automatic process; it is cultivated through a demonstrated commitment to the success of customers, particularly in challenging circumstances. The insights gained from interactions with key stakeholders underscore the importance of personal relationships in fostering this trust, which ultimately translates into a significant competitive advantage and enhances customer lifetime value (CLV), a crucial metric for long-term profitability in B2B SaaS environments.

Main Goal: Achieving Customer Trust

The principal goal articulated in the original content is to earn customer trust through consistent and authentic engagement. This trust is achieved when customers perceive the organization and its representatives as genuine partners invested in their success. This necessitates a holistic approach that encompasses not only the delivery of quality products and services but also the establishment of meaningful connections and open lines of communication. The emphasis on human interaction and empathy remains crucial, as these factors are instrumental in reinforcing customer loyalty and satisfaction.

Advantages of Earning Customer Trust

- Enhanced Customer Loyalty: When customers trust a brand, they are more likely to remain loyal, resulting in increased retention rates and a longer customer lifecycle. This loyalty can significantly reduce customer acquisition costs over time.
- Improved Customer Lifetime Value (CLV): Trust directly correlates with CLV, as satisfied customers tend to make repeat purchases and are more inclined to explore additional services or products offered by the company.
- Resilience in Adversity: Companies that have established trust with their customers are better positioned to withstand market fluctuations and crises. Customers are more likely to remain supportive during challenging times if they believe in the integrity of the brand.
- Positive Brand Reputation: A strong reputation built on trust can lead to increased word-of-mouth referrals and organic growth, as customers share their positive experiences with others.
- Informed Decision-Making: Trust facilitates open communication, allowing for better feedback mechanisms. Companies can better understand customer needs, leading to more informed product development and marketing strategies.

Caveats and Limitations

While the advantages of earning customer trust are substantial, it is important to recognize potential limitations. Building trust is a gradual process that requires sustained effort and commitment. Additionally, reliance on technology, particularly AI, must be balanced with genuine human interaction, as excessive automation can lead to perceptions of insincerity or detachment. Organizations must also be wary of overpromising, as failing to deliver on commitments can quickly erode trust.

Future Implications: The Evolving Role of AI

The rapid advancements in AI technology pose both opportunities and challenges for trust-building in marketing. As AI continues to enhance data analysis and customer insights, organizations will be better equipped to tailor their offerings to meet individual customer needs. However, the reliance on AI must be coupled with a commitment to maintaining the human touch in customer interactions. Future developments may lead to more sophisticated AI systems that facilitate personalized communication, thereby reinforcing trust. The challenge will lie in ensuring that these technologies augment rather than replace the essential human connections that underpin trust.

Contextualizing Companion Robotics: An Overview

In a recent podcast episode, Colin Angle, co-founder and CEO of Familiar Machines & Magic, engages in a comprehensive discussion regarding the evolution of companion robotics. This dialogue emerges from the company’s strategic focus on developing consumer-oriented artificial intelligence (AI) systems, specifically through their flagship product, Familiars. These robots are intended to cultivate long-term, emotionally intelligent relationships with users. Angle’s insights illuminate the intersection between advanced robotics and emotional intelligence, placing emphasis on the broader mission of fostering a more empathetic world through technology.

Objectives of Familiar Machines & Magic

The primary goal articulated by Familiar Machines & Magic is to pioneer the development of emotionally intelligent robots that can replicate and nurture meaningful human interactions. This objective can be realized through a combination of cutting-edge AI technologies, extensive experience in consumer robotics, and an unwavering commitment to user-centric design. The company seeks to harness the potential of artificial life to enhance human experience, thereby contributing to a society where technology serves as a companion rather than merely a tool.

Advantages of Companion Robotics

- Enhanced Emotional Connectivity: The design of Familiars aims to forge deep emotional connections with users, promoting psychological well-being and emotional support.
- Leverage of Expertise: The founding team possesses a remarkable pedigree in robotics and AI, having previously deployed over 50 million consumer robots. This experience facilitates rapid advancements in the development of reliable and effective companion robots.
- Interdisciplinary Collaboration: The company’s partnerships with leading researchers and engineers from prestigious institutions such as MIT and Boston Dynamics ensure a robust foundation for innovative solutions in companion robotics.
- Global Reach: With operations in key cities like Boston, Los Angeles, and Hong Kong, Familiar Machines & Magic is positioned to leverage diverse markets and foster international collaboration in robotics.
- Continuous Improvement in Human-Robot Interaction: By focusing on emotionally intelligent relationships, the company addresses a critical aspect of human-robot interaction, which has been a significant barrier to widespread adoption of robotic technologies.

Caveats and Limitations

Despite the promising benefits, several limitations must be acknowledged. The emotional complexities of human relationships are inherently nuanced and difficult to replicate in artificial beings. Additionally, the integration of AI into consumer products raises ethical concerns regarding privacy and data security. The challenge remains to balance technological advancement with responsible usage to prevent potential misuse of such companion robots.

Future Implications of AI in Companion Robotics

The future of AI developments in companion robotics is poised for transformative impacts. As AI technologies continue to evolve, there is potential for increased sophistication in emotional recognition and response capabilities of robots. This could lead to enhanced personalization in user experiences, making companion robots more intuitive and capable of meeting individual user needs. Moreover, advancements in machine learning and natural language processing may further bridge the gap between human and robotic interaction, fostering deeper connections. Ultimately, as AI becomes more integrated into daily life, the societal implications will warrant careful consideration of ethical frameworks and regulatory oversight to guide the responsible development and deployment of companion robots.

Contextual Overview of Gemini 2.5 Model Family
The Gemini 2.5 model family represents a significant advancement in generative AI technologies, particularly in the area of reasoning models. The recent updates to this model family include the stable releases of Gemini 2.5 Pro and Gemini 2.5 Flash, along with the introduction of the preview version of Gemini 2.5 Flash-Lite. These models are designed with enhanced capabilities for reasoning, allowing for improved performance and accuracy in various applications. The flexibility of control over the “thinking budget” empowers developers to optimize the models for their specific needs, thereby enhancing the overall user experience.

Main Goal and Implementation Strategies
The primary goal of the updates to the Gemini 2.5 model family is to enhance the effectiveness of generative AI applications by providing models that can reason through their responses. This is achieved through iterative improvements in model architecture and functionality, allowing for greater adaptability in various use cases. By providing distinct models such as Gemini 2.5 Pro for high-complexity tasks and Gemini 2.5 Flash-Lite for cost-sensitive applications, the Gemini family accommodates a wide range of developer requirements. This strategic differentiation enables developers to select the most suitable model for their specific applications, ultimately leading to improved outcomes in AI-driven tasks.

Structured Advantages of Gemini 2.5 Models
Enhanced Performance: The Gemini 2.5 models, particularly Flash-Lite, are engineered for lower latency and superior throughput, making them ideal for high-volume tasks such as classification and summarization.
Cost Efficiency: With updated pricing structures, Gemini 2.5 Flash offers a more competitive cost-per-intelligence ratio, ensuring that developers can scale their applications without incurring prohibitive costs.
Dynamic Control: The ability to adjust the “thinking budget” via API parameters in models like Flash-Lite provides developers with greater flexibility in balancing cost and performance based on specific application needs.
Broad Applicability: The models are designed to support a wide range of applications, from coding and agentic tasks in Gemini 2.5 Pro to high-throughput operations in Flash-Lite, thereby appealing to a diverse set of user requirements.

It is important to note that while these models present numerous advantages, there may be limitations in terms of the depth of reasoning available in lower-tier models. Developers must assess the requirements of their specific use cases to select the optimal model accordingly.

Future Implications of Generative AI Developments
The advancements in the Gemini 2.5 model family have far-reaching implications for the field of generative AI and its applications. As AI technologies continue to evolve, we can anticipate further enhancements in model intelligence and usability. The ongoing research and development efforts aimed at pushing the boundaries of what generative AI can achieve will likely result in even more sophisticated models capable of tackling intricate tasks with greater efficiency. Moreover, the trend towards cost-effective, high-performance AI solutions will empower a broader range of developers and organizations to integrate AI capabilities into their operations, thus accelerating innovation across various industries.
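
The “thinking budget” control and the Pro / Flash / Flash-Lite split described in the Gemini 2.5 summary can be illustrated with a small request builder. This is a minimal sketch, not the official client: it assumes the Gemini REST request shape in which the budget is passed as `generationConfig.thinkingConfig.thinkingBudget`, and the helper `build_request`, the profile names, the model identifiers, and the budget values are all hypothetical choices made for illustration. The current API documentation should be checked before relying on any of them.

```python
from typing import Any

# Hypothetical mapping from a task profile to (model identifier, thinking budget).
# Both the identifiers and the token budgets are illustrative assumptions.
PROFILES: dict[str, tuple[str, int]] = {
    "complex": ("gemini-2.5-pro", 8192),          # coding and agentic tasks
    "balanced": ("gemini-2.5-flash", 1024),       # general-purpose work
    "high_volume": ("gemini-2.5-flash-lite", 0),  # classification, summarization
}


def build_request(prompt: str, profile: str) -> dict[str, Any]:
    """Return the model name and a JSON-serializable body for one request."""
    if profile not in PROFILES:
        raise ValueError(f"unknown profile: {profile!r}")
    model, budget = PROFILES[profile]
    return {
        "model": model,
        "body": {
            "contents": [{"parts": [{"text": prompt}]}],
            # Assumed field names for the thinking budget parameter.
            "generationConfig": {"thinkingConfig": {"thinkingBudget": budget}},
        },
    }
```

Keeping the model choice and the budget in one table makes the cost and performance trade-off explicit: a high-volume classification job can run with a budget of zero, while a harder task is routed to a larger model with more room to reason.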

Contextual Overview of TOS Formation and Legal Implications
The recent 11th Circuit ruling in Tejon v. Zeus Networks, LLC highlights critical considerations in the formation of Terms of Service (TOS) agreements. The court found that the manner in which Zeus presented its TOS (through a hyperlink obscured by design choices) failed to adequately notify users that the terms were binding. This case raises essential questions about the enforceability of online agreements, particularly as businesses increasingly pivot to digital platforms where user engagement hinges on clear and conspicuous disclosures.

Main Goals and Achievement Strategies
The primary goal underscored by the court’s decision is the necessity for TOS agreements to be presented in a manner that is not only accessible but also clearly understood by users. This can be achieved through various strategies:
Design Clarity: Utilizing larger fonts, contrasting colors, and strategic placement of hyperlinks can enhance visibility and user awareness.
Direct Language: Clearly articulating the implications of user actions, such as agreeing to arbitration, within the TOS can eliminate ambiguities.
Adopting Clickwrap Agreements: Transitioning to clickwrap agreements, where users must actively agree to terms, can significantly reduce legal ambiguity surrounding acceptance.

Advantages of Clear TOS Presentation
There are several advantages to ensuring that TOS agreements are conspicuous and comprehensible:
Enhanced User Trust: When users understand the terms they are agreeing to, they are more likely to trust the platform, fostering a positive user experience.
Legal Protection: Clear and enforceable TOS can provide legal safeguards for businesses, reducing the risk of litigation stemming from ambiguous contract terms.
Improved Compliance Rates: Users are more likely to comply with terms they are aware of, leading to fewer disputes and enhanced operational efficiency.
However, businesses must remain vigilant about the caveats associated with overly complicated layered notices, as highlighted by the court’s critique of Zeus’s approach. A failure to adequately address user comprehension can lead to legal challenges that undermine the intended protections a TOS agreement is meant to provide.

Future Implications: The Role of AI in Legal Practices
As LegalTech continues to evolve, the integration of artificial intelligence (AI) is poised to revolutionize how TOS agreements are formulated and enforced. AI can facilitate:
Enhanced User Interaction: By employing AI-driven chatbots to guide users through terms, businesses can ensure that users comprehend their agreements before acceptance.
Automated Compliance Monitoring: AI can assist in monitoring user interactions with TOS to ensure compliance and flag potential issues in real-time.
Dynamic TOS Customization: Through machine learning algorithms, platforms can adapt TOS presentations based on user behavior, ensuring heightened awareness and acceptance rates.

In conclusion, the implications of AI in TOS formation extend beyond mere compliance; they present opportunities for enhanced user engagement and legal certainty in an increasingly digital marketplace.
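
The clickwrap approach mentioned in the TOS summary, where users must actively agree before proceeding, can be sketched as a gate that refuses to continue without an explicit acceptance and records what was accepted. This is a minimal sketch under stated assumptions: `ConsentRecord`, `accept_terms`, and their fields are hypothetical names invented for illustration, and whether such a record would satisfy a court is a legal question the code does not answer.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ConsentRecord:
    """Hypothetical audit record of one user's acceptance of one TOS version."""
    user_id: str
    tos_version: str
    accepted_at: datetime


def accept_terms(
    user_id: str,
    tos_version: str,
    box_checked: bool,
    now: Optional[datetime] = None,
) -> ConsentRecord:
    """Return a consent record only if the user actively ticked the box."""
    if not box_checked:
        # No implied consent: sign-up stops until the user acts.
        raise PermissionError("terms must be actively accepted before sign-up")
    return ConsentRecord(user_id, tos_version, now or datetime.now(timezone.utc))
```

Storing the TOS version alongside the timestamp is the design choice that matters here: it lets a platform show which text a given user agreed to, rather than only that some agreement happened.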

Contextual Overview
Over three decades ago, the village of Mbem in northwest Cameroon was devoid of electricity, leaving the moon and stars as the only sources of light for its residents, particularly for a young Jude Numfor. This lack of access to electricity profoundly impacted the community’s development and opportunities. Numfor’s vision for electrifying rural areas stemmed from his childhood memories, which fueled his determination to provide sustainable energy solutions. In 2006, he co-founded Renewable Energy Innovators Cameroon (REI), which focuses on designing, installing, and maintaining solar minigrids to facilitate rural electrification. REI leverages photovoltaic technology and battery-energy storage systems to produce electricity distributed through smart meters. The initiative gained traction with support from IEEE Smart Village, which funds projects aimed at enhancing educational and economic opportunities in remote communities.

Main Goals and Achievements
The principal objective of REI is to electrify rural communities in Cameroon, thereby improving the quality of life and creating economic opportunities. This goal can be achieved through the implementation of solar minigrids that provide consistent and reliable electricity. The strategic collaboration with IEEE Smart Village has enabled REI to refine its business model and expand its operations, ultimately bringing electricity to more than 1,000 households across multiple villages. This partnership has also fostered the development of an open-source metering system, enhancing transparency in energy use and management.

Advantages of Electrification Initiatives
Enhanced Quality of Life: Access to electricity allows communities to engage in various activities such as studying at night, improving educational outcomes for children.
Economic Growth: Electrification stimulates local economies by enabling small businesses to flourish, from food preservation to mobile phone charging stations, thereby creating jobs.
Community Empowerment: The establishment of local enterprises and services fosters a sense of ownership among residents and promotes community resilience.
Technological Innovation: The adoption of open-source metering systems allows for better energy management and consumer participation, leading to more sustainable practices.

Caveats and Limitations
Despite the numerous benefits, challenges persist. The financial viability of such projects remains a significant concern, as the return on investment is often low, deterring potential investors. Additionally, the regulatory environment can pose obstacles, as seen with REI’s journey to obtain a license to operate legally in off-grid areas. Moreover, attracting skilled labor is critical for sustaining operations, necessitating robust recruitment and training processes.

Future Implications and AI Developments
The future of electrification in rural areas, particularly in regions like Cameroon, will likely be influenced significantly by advancements in artificial intelligence (AI). AI has the potential to optimize energy distribution, enhance predictive maintenance of energy systems, and improve demand forecasting. Furthermore, AI-driven analytics can enable better decision-making in energy management, allowing for more tailored solutions that meet the specific needs of communities. As the technology landscape continues to evolve, embracing AI could further empower local entrepreneurs, ensuring that projects like REI can scale effectively and sustainably. The integration of AI in energy systems may also attract a new wave of investors interested in the innovation and impact potential of electrification initiatives.
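
The demand forecasting mentioned above can be made concrete with a deliberately simple baseline. This is a minimal sketch, not REI's method and not an AI model: it assumes daily consumption totals are available from the smart meters, and the function name `forecast_next` and the default seven-day window are hypothetical choices made for illustration.

```python
from statistics import mean
from typing import Sequence


def forecast_next(daily_kwh: Sequence[float], window: int = 7) -> float:
    """Forecast the next day's demand as the mean of the last `window` days."""
    if window <= 0:
        raise ValueError("window must be positive")
    if len(daily_kwh) < window:
        raise ValueError("not enough history for the chosen window")
    # Only the most recent `window` readings contribute to the forecast.
    return mean(daily_kwh[-window:])
```

A moving average like this is mainly useful as a yardstick: a learned forecasting model is worth deploying on a minigrid only if it beats this kind of baseline on held-out meter data.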
Conclusion
The electrification efforts spearheaded by Jude Numfor and REI exemplify how sustainable energy solutions can transform rural communities. By addressing the challenges and leveraging technology, particularly AI, there is a significant opportunity to enhance the quality of life for countless individuals, promote economic development, and inspire future generations of innovators.