Contextualizing the AI Controversy in the Music Industry

Spotify has recently come under scrutiny for publishing AI-generated music attributed to deceased artists, raising ethical concerns regarding exploitation and intellectual property rights. An investigation by 404 Media revealed that the streaming service published tracks without obtaining authorization from the artists’ estates or record labels. For instance, a song titled “Together” appeared on the page of Blaze Foley, a country musician murdered in 1989, even though the track was created by someone else entirely. This incident reflects a broader trend wherein AI tools are employed to generate music that emulates the styles of past artists. The emergence of an AI band, Velvet Sundown, which has gained significant traction with its track “Dust on the Wind,” further exemplifies the growing presence of AI-generated content within established music platforms. Although Spotify removed the unauthorized tracks following media exposure, the prevalence of such incidents indicates a systemic issue within the industry.

Main Goals and Their Achievement

The primary goal highlighted in this discourse is the necessity for ethical standards and regulatory frameworks governing AI-generated content in the music industry. Achieving this goal involves implementing robust policies that require platforms to seek explicit permission from artists or their estates before using their likeness or style in AI-generated compositions. Moreover, transparency in labeling AI-generated content is essential to inform consumers and protect the integrity of human artistry.

Advantages and Considerations

1. **Preservation of Artist Rights**: Establishing clear regulations surrounding AI-generated music would safeguard the rights of deceased artists and their estates, ensuring that their legacies are not exploited without consent.
2. **Consumer Trust**: Providing transparent labeling of AI-generated content fosters trust among listeners, as they can make informed decisions about the music they consume.
3. **Fair Competition**: By regulating AI-generated music, platforms can create a level playing field for human artists, preventing AI tracks from unfairly competing for streaming royalties.
4. **Innovation in Music Creation**: While regulations are necessary, they can also stimulate innovation by encouraging the development of AI technologies that respect intellectual property rights.

However, it is essential to note that overly stringent regulations could stifle creativity and limit the potential benefits of AI in music production, such as enhancing accessibility and diversity in musical expression.

Future Implications of AI in the Music Industry

The ongoing advancements in AI technology are poised to significantly reshape the music landscape. As AI becomes more sophisticated, the potential for creating music that closely mimics established artists will increase, leading to heightened ethical dilemmas. The industry may witness the development of AI systems capable of generating entirely new compositions that blend various musical elements, further blurring the lines between human and machine-generated music.

Moreover, as the debate surrounding copyright and intellectual property rights intensifies, music streaming platforms may face increased pressure to adopt transparent practices. This could prompt a shift toward more equitable revenue-sharing models that recognize the contributions of both human artists and AI systems. Ultimately, the future of AI in the music industry hinges on balancing innovation with ethical considerations, ensuring that technological advancements do not come at the expense of artistic integrity.

Disclaimer

The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly.

Context of Agricultural Drone Technology

The integration of unmanned aircraft systems (UAS) in agriculture is gaining momentum, presenting innovative solutions to some of the sector’s most pressing challenges. The recent initiatives by Mississippi State University (MSU) through its Agricultural Autonomy Institute (AAI) exemplify this trend. By launching a comprehensive video series aimed at educating farmers about the operational and regulatory aspects of agricultural drones, the AAI is facilitating the adoption of this pioneering technology. These systems, particularly those equipped with spray capabilities, promise to enhance efficiency in agricultural production, a necessity in an industry increasingly challenged by labor shortages and rising operational costs.

The rapid acceleration of UAS adoption in agricultural contexts can be attributed to the establishment of clear regulatory frameworks which have permitted their commercial use. While initial investments in technology may appear daunting, the long-term benefits, including significant reductions in labor, time, and costs associated with tasks like aerial cover crop seeding, fertilizer distribution, and pesticide application, underscore the value proposition of this innovation.

Main Goal and Achievement Strategies

The principal goal of the AAI’s video series is to enhance understanding and facilitate the safe, effective use of agricultural drones among stakeholders. This aim is pursued by addressing common inquiries and providing comprehensive guidance through a 13-part instructional video series. By focusing on both foundational knowledge and troubleshooting, the AAI aims to empower users to navigate the complexities of drone technology effectively.

To achieve this goal, the series is structured to incorporate short, digestible videos that cover high-demand topics, thereby catering to various levels of expertise among users. This educational approach not only demystifies drone technology but also promotes safe practices, which is critical given the evolving regulatory landscape surrounding UAS operations in agriculture.

Advantages of Agricultural Drones

1. **Increased Efficiency**: Agricultural drones can perform tasks such as spraying and monitoring crops at a fraction of the time required by traditional methods. This efficiency translates to labor savings and increased productivity.
2. **Cost Savings**: While there is an upfront investment, the reduction in labor costs and the ability to cover larger areas quickly yield substantial financial benefits in the long run.
3. **Precision Agriculture**: Drones enable precise application of inputs, reducing waste and minimizing environmental impact. This precision is particularly beneficial for tasks such as pesticide application, where targeted delivery can enhance efficacy and safety.
4. **Real-time Data Collection**: Drones are equipped with advanced sensors that provide real-time data on crop health, soil conditions, and environmental factors, empowering farmers to make informed decisions.
5. **Educational Support**: Initiatives like the video series from AAI provide critical educational resources, bridging the knowledge gap for farmers and stakeholders unfamiliar with drone technology.

While these advantages are compelling, it is essential to acknowledge some limitations. The initial investment in drone technology and the need for ongoing training to keep up with regulatory changes can pose challenges for adoption, particularly among smaller operations.

Future Implications of AI in Agricultural Drones

Looking ahead, advancements in artificial intelligence (AI) are poised to further revolutionize the agricultural drone sector. Enhanced AI algorithms will enable drones to perform more complex tasks, such as autonomous decision-making regarding crop treatment and monitoring. This capability could significantly reduce the need for human oversight, allowing farmers to focus on strategic decision-making rather than operational management.

Moreover, the integration of AI with machine learning will facilitate the analysis of vast amounts of data collected by drones, enabling predictive analytics that can inform crop management practices. This evolution could lead to more sustainable agricultural practices, optimized resource management, and improved yield outcomes.

In conclusion, the initiatives undertaken by the AAI highlight the potential of agricultural drones as transformative tools in modern farming. By fostering education and understanding of this technology, stakeholders can harness its benefits, leading to enhanced productivity and sustainability in the agricultural sector. The future, particularly with the integration of AI, promises even greater advancements, ensuring that agricultural innovation continues to evolve in response to the challenges of the industry.

Introduction

The rapid advancement of specialized AI agents is transforming the landscape of modern business operations. As organizations increasingly adopt agentic AI technologies, they are tasked with determining the most effective AI agents to develop in order to address their unique challenges. This blog post explores the implications of specialized AI agents within the Generative AI Models & Applications sector, highlighting their significant impact on operational efficiency and innovation.

Main Goals of Specialized AI Agents

The primary goal of specialized AI agents is to enhance business processes through tailored solutions that leverage proprietary data and domain expertise. Organizations are transitioning from generic, one-size-fits-all AI models to customized systems that can better understand and address specific use cases. This shift aims to drive faster outcomes and foster long-term AI adoption by aligning AI capabilities with the unique demands and workflows of various industries.

Structured Advantages of Specialized AI Agents

1. **Increased Efficiency**: Specialized AI agents automate routine tasks, thereby allowing human personnel to concentrate on complex decision-making. For instance, CrowdStrike’s AI agents significantly improve the accuracy of alert triage, enhancing productivity while reducing manual efforts.
2. **Enhanced Customization**: By developing agents that cater to specific business needs, organizations can achieve performance levels that generic models cannot match. Companies like PayPal utilize specialized agents to facilitate conversational commerce, resulting in reduced latency and improved user experiences.
3. **Scalability**: The modular design of specialized AI agents allows businesses to scale their solutions effectively. This is evident in Synopsys’s implementation of agentic AI frameworks that boost productivity in chip design workflows, enabling rapid adaptation to evolving engineering tasks.
4. **Long-term Viability**: Specialized agents promote sustainable AI adoption by continuously improving through iterative training and fine-tuning. This ensures that as business needs evolve, the AI systems remain relevant and effective.

While the advantages of specialized AI agents are substantial, organizations must also consider limitations such as the initial investment required for development and the ongoing need for data management and model retraining.

Future Implications of Specialized AI Agents

The trajectory of AI development suggests that the adoption of specialized AI agents will continue to rise, leading to profound changes within various industries. As companies increasingly leverage generative AI models, the integration of these agents will likely result in more sophisticated applications across sectors such as finance, healthcare, and cybersecurity. Furthermore, advancements in AI technologies will facilitate the creation of agents capable of performing complex tasks, thereby enhancing their utility in real-world applications. This evolution will not only redefine operational efficiency but also reshape the workforce dynamics as AI agents become collaborative partners within organizational ecosystems.

Conclusion

In summary, the emergence of specialized AI agents represents a significant advancement in the application of generative AI models. By focusing on tailored solutions that leverage proprietary knowledge and domain expertise, organizations can harness the full potential of AI technologies. As the landscape of business continues to evolve, the ongoing refinement and development of specialized AI agents will be crucial in driving innovation and maintaining competitive advantage in an increasingly complex marketplace.

Contextual Overview of Digital Resilience in the Agentic AI Era

As global investments in artificial intelligence (AI) are projected to reach $1.5 trillion in 2025, a significant gap persists between technological advancement and organizational preparedness. According to recent findings, fewer than half of business leaders express confidence in their organizations’ ability to ensure service continuity, security, and cost management during unforeseen disruptions. This lack of assurance is compounded by the complexities introduced by agentic AI, which necessitates a comprehensive reevaluation of digital resilience strategies.

Organizations are increasingly adopting the concept of a data fabric, an integrated architectural framework that interlinks and governs data across various business dimensions. This approach dismantles silos and allows for real-time access to enterprise-wide data, thereby equipping both human teams and agentic AI systems to better anticipate risks, mitigate issues proactively, recover swiftly from setbacks, and sustain operational continuity.

Understanding Machine Data: The Foundation of Agentic AI and Digital Resilience

Historically, AI models have predominantly relied on human-generated data such as text, audio, and video. However, the advent of agentic AI necessitates a deeper understanding of machine data, which comprises logs, metrics, and telemetry produced by devices, servers, systems, and applications within an organization. Access to this data must be seamless and real-time to harness the full potential of agentic AI in fostering digital resilience.

The absence of comprehensive integration of machine data can severely restrict AI capabilities, leading to missed anomalies and the introduction of errors. As noted by Kamal Hathi, senior vice president and general manager of Splunk (a Cisco company), agentic AI systems depend on machine data for contextual comprehension, outcome simulation, and continuous adaptation. Thus, the management of machine data emerges as a critical element for achieving digital resilience.

Hathi describes machine data as the “heartbeat of the modern enterprise,” emphasizing that agentic AI systems are driven by this essential pulse, which requires real-time information access. Effective operation of these intelligent agents hinges on their direct engagement with the intricate flow of machine data, which in turn requires that AI models be trained on the same data streams.

Despite the recognized importance of machine data, few organizations have achieved the level of integration required to fully activate agentic systems. This limitation not only constrains potential applications of agentic AI but also raises the risk of data anomalies and inaccuracies in outputs and actions. Historical challenges faced by natural language processing (NLP) models highlight the importance of foundational fluency in machine data to avoid biases and inconsistencies.

The rapid pace of AI development poses additional challenges for organizations striving to keep up. Hathi notes that the speed of innovation may inadvertently introduce risks that organizations are ill-equipped to manage. Specifically, relying on traditional large language models (LLMs) trained on human-centric data may not suffice for maintaining secure, resilient, and perpetually available systems.

Strategizing a Data Fabric for Enhanced Resilience

To overcome existing shortcomings and cultivate digital resilience, technology leaders are encouraged to adopt a data fabric design tailored to the requirements of agentic AI. This strategy involves weaving together fragmented assets spanning security, information technology (IT), business operations, and network infrastructure to establish an integrated architecture. Such an architecture connects disparate data sources, dismantles silos, and facilitates real-time analysis and risk management.
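To make the idea of weaving siloed machine data into a single view more concrete, here is a minimal Python sketch. It is an illustration only and does not describe Splunk's or any vendor's product: the `Event` record, the silo names, and the `unify` and `flag_anomalies` helpers are all hypothetical, and the anomaly check is a simple standard-deviation rule chosen for brevity rather than a technique named in the source.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass(frozen=True)
class Event:
    """One machine-data record (hypothetical schema for illustration)."""
    source: str     # originating silo, e.g. "security", "it", "network"
    host: str       # device or server that produced the record
    timestamp: int  # seconds since epoch
    metric: str     # name of the measurement, e.g. "cpu_pct"
    value: float


def unify(*streams):
    """Merge per-silo event streams into one time-ordered view."""
    merged = [event for stream in streams for event in stream]
    return sorted(merged, key=lambda event: event.timestamp)


def flag_anomalies(events, threshold=2.0):
    """Return events whose value lies more than `threshold` standard
    deviations from the mean of all values recorded for the same metric."""
    values_by_metric = defaultdict(list)
    for event in events:
        values_by_metric[event.metric].append(event.value)

    flagged = []
    for event in events:
        values = values_by_metric[event.metric]
        spread = pstdev(values)
        if spread > 0 and abs(event.value - mean(values)) / spread > threshold:
            flagged.append(event)
    return flagged
```

In this sketch the anomaly check only sees the whole picture because the streams were merged first; run against a single silo, the same check would have fewer values to compare against, which is the kind of blind spot the text attributes to fragmented data.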
Main Goal and Its Achievement

The primary objective articulated in the original content is the enhancement of digital resilience through the effective integration of machine data within a data fabric framework. Achieving this goal involves fostering a seamless connection among various data sources, which enables both human and AI systems to engage with real-time data analytics effectively. This integration is vital for anticipating risks and ensuring operational continuity in an increasingly complex AI landscape.

Advantages of Implementing a Data Fabric

1. **Enhanced Decision-Making**: Integrated real-time data empowers both human teams and AI systems to make informed decisions, thus reducing the likelihood of errors.
2. **Proactive Risk Management**: Access to comprehensive machine data allows for the identification and mitigation of potential risks before they escalate into significant issues.
3. **Operational Continuity**: Organizations can sustain operations even in the face of unexpected disruptions, thereby maintaining service continuity and customer trust.
4. **Scalability**: A well-designed data fabric allows organizations to scale their operations and integrate new technologies without significant disruption.

Limitations and Considerations

Despite the numerous advantages, organizations must also consider potential limitations, such as the initial investment required to develop a robust data fabric and the ongoing need for data governance and management. Furthermore, organizations must ensure that the AI systems are trained on high-quality, comprehensive machine data to avoid inaccuracies and biases.

Future Implications for AI Research and Innovation

The ongoing evolution of AI technologies will significantly impact the realm of digital resilience. As AI systems become more autonomous and integrated into critical infrastructure, the necessity for organizations to invest in data fabric architectures will become paramount. Future advancements in AI will likely necessitate even more sophisticated data management practices, emphasizing the importance of machine data oversight to preempt operational risks. As organizations strive to keep pace with rapid technological advancements, those that successfully implement comprehensive data fabrics will likely lead in operational resilience and competitive advantage.

Contextual Overview

The forthcoming Black Friday, scheduled for November 28, 2025, and the subsequent Cyber Monday on December 1, 2025, present an opportune moment for consumers to acquire technology products at significant discounts. This period not only stimulates consumer spending but also serves as a critical evaluation point for technological advancements and pricing strategies within the market. As organizations and individuals gear up for these sales, understanding the implications of pricing tactics, particularly in the context of artificial intelligence (AI) applications in cybersecurity, becomes increasingly relevant.

Understanding the Goals of Black Friday Shopping

The primary goal of participating in Black Friday sales is to access substantial discounts on desired products, particularly in technology sectors that are pivotal for both personal and professional use. To achieve this goal, consumers must remain vigilant against deceptive pricing strategies such as markups and false discounts. Utilizing price tracking tools such as Keepa and CamelCamelCamel can enhance the shopping experience by providing transparent pricing histories, ensuring that consumers make informed purchasing decisions.

Advantages of Engaging in Early Black Friday Deals

1. **Significant Savings**: Products are often available at markdowns of 20% or more, allowing for substantial savings on high-demand items such as televisions, laptops, and smart home devices.
2. **Access to Latest Technology**: Black Friday is an ideal time to purchase last year’s models, which often experience dramatic price reductions as retailers clear inventory for new releases.
3. **Informed Purchasing**: The utilization of price comparison tools and consumer reviews equips shoppers with the necessary insights to discern the quality and value of products prior to making a purchase.
4. **Consumer Empowerment**: By actively researching and comparing prices, consumers can leverage information to secure the best deals, thereby fostering a more competitive marketplace.

Limitations and Caveats

While Black Friday offers numerous advantages, consumers must navigate several limitations. Retailers may engage in deceptive practices, such as inflating original prices to create the illusion of a discount. Additionally, not all products marked down during Black Friday represent substantial savings or quality assurance. Thus, a thorough investigation of product reviews and price histories is essential to avoid unsatisfactory purchases.

Future Implications of AI in Cybersecurity and Shopping

As AI technologies continue to evolve, their impact on cybersecurity and consumer shopping experiences will likely grow. Enhanced AI-driven analytics can improve price transparency and predict market trends, allowing consumers to make more informed decisions. Furthermore, AI can facilitate the development of sophisticated protective measures against fraud and cyber threats associated with online shopping. This dual benefit of maximizing savings while ensuring security will be critical as more consumers engage with technology-driven shopping platforms.
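The advice above to check price histories before trusting an advertised discount can be sketched in a few lines of Python. This is a hypothetical helper, not the API of Keepa or CamelCamelCamel: it assumes the shopper has already copied a list of past prices out of such a tool by hand, and the function name `evaluate_deal`, its parameters, and the choice of the median as the "typical" price are illustrative assumptions. The 20% default mirrors the markdown figure mentioned in the text.

```python
from statistics import median


def evaluate_deal(price_history, list_price, sale_price, min_real_discount=0.20):
    """Compare an advertised discount with the discount measured against
    the item's typical historical price (here, the median of past prices)."""
    if not price_history:
        raise ValueError("price_history must contain at least one past price")

    typical_price = median(price_history)
    advertised_discount = 1 - sale_price / list_price
    real_discount = 1 - sale_price / typical_price

    return {
        "typical_price": typical_price,
        "advertised_discount": round(advertised_discount, 3),
        "real_discount": round(real_discount, 3),
        # A "was" price above anything seen in the history hints at an inflated anchor.
        "inflated_list_price": list_price > max(price_history),
        "good_deal": real_discount >= min_real_discount,
    }


# Example with made-up numbers: the item usually sells for just under 500,
# but the sale banner quotes a "was" price of 699.
report = evaluate_deal([499, 479, 489, 499, 469], list_price=699, sale_price=449)
```

With these made-up numbers, the advertised discount is far larger than the discount measured against the item's usual price, which is the pattern the limitations paragraph warns about.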

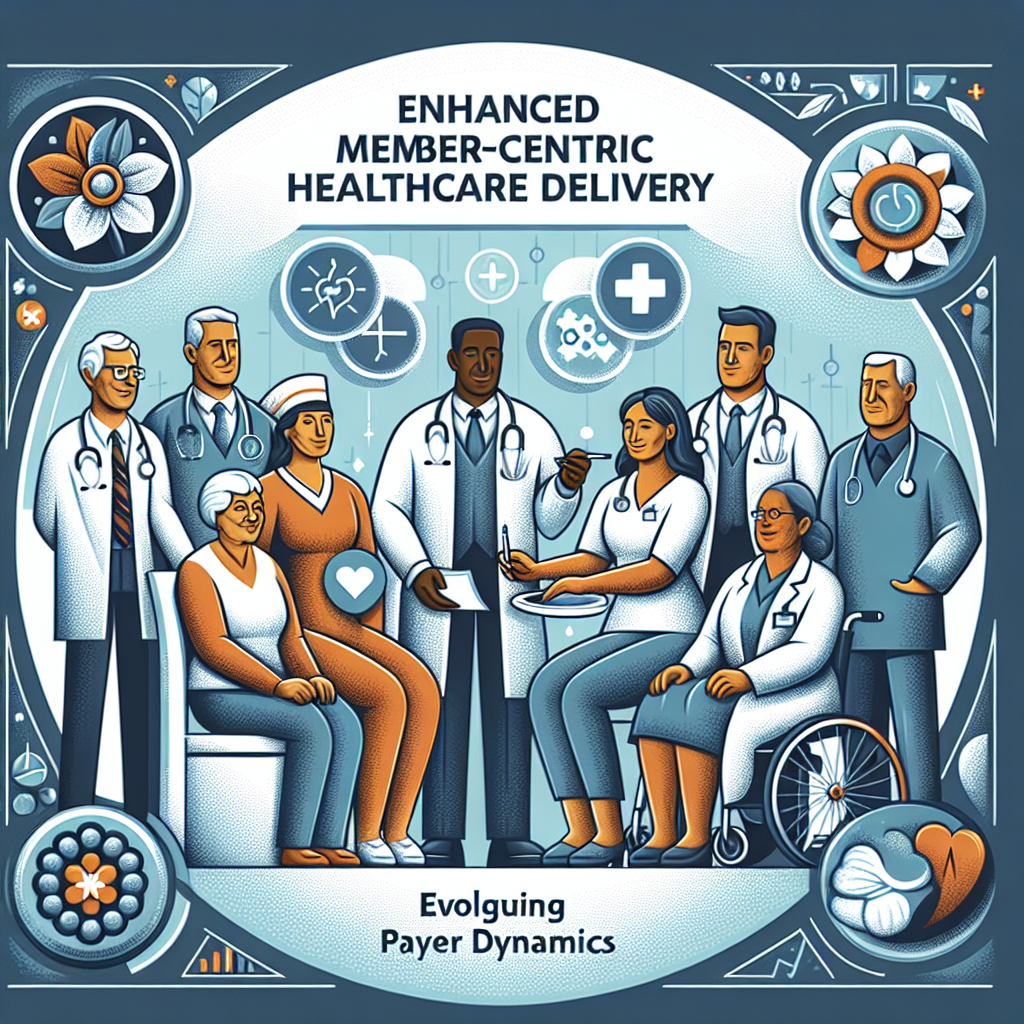

Contextual Overview

The healthcare landscape is undergoing a significant transformation, driven by legislative changes such as the One Big Beautiful Bill Act (OBBBA) and an evolving payer ecosystem. These developments are particularly impactful for dual-eligible members, who often present with complex healthcare needs. In this context, a shift towards member-first care has become imperative, necessitating the integration of strategic innovation, real-time data analytics, and collaborative partnerships among healthcare stakeholders. The emphasis is on delivering coordinated care that prioritizes patient experiences while effectively managing costs and administrative burdens.

Main Goal and Achievement Strategies

The primary objective articulated in this dialogue is to enhance the delivery of coordinated, member-first healthcare services amid a dynamic payer landscape. Achieving this goal entails the adoption of several key strategies:

1. Implementing streamlined processes that improve member experiences and care coordination.
2. Utilizing real-time data to optimize benefits, mitigate fraud, waste, and abuse (FWA), and control overall healthcare costs.
3. Translating complex policy changes into actionable steps for healthcare providers and payers.

By focusing on these strategies, healthcare organizations can develop a framework that not only meets regulatory expectations but also addresses the unique challenges faced by dual-eligible members.

Advantages of Member-First Care

The shift to member-first care presents numerous advantages for healthcare providers and payers, specifically within the context of AI advancements in health and medicine.

1. **Enhanced Care Coordination**: By streamlining member experiences, healthcare providers can significantly reduce confusion and improve patient satisfaction.
2. **Cost Efficiency**: Leveraging real-time data analytics enables organizations to identify and eliminate avoidable costs, resulting in more efficient resource allocation.
3. **Proactive Policy Compliance**: Translating complex legislative requirements into actionable steps allows healthcare organizations to remain compliant while driving measurable outcomes.

Despite these benefits, it is crucial to acknowledge potential limitations, such as the need for continuous training and adaptation to new technologies, which may pose challenges for healthcare organizations striving to implement these changes effectively.

Future Implications of AI in Healthcare

As artificial intelligence continues to evolve, its implications for coordinated, member-first care will be profound. Future developments are expected to enhance predictive analytics capabilities, allowing for more personalized healthcare solutions tailored to individual patient needs. AI can facilitate deeper insights into patient behaviors and outcomes, driving further innovation in care delivery models.

Moreover, as AI technologies become more integrated into healthcare systems, they can streamline administrative processes, thus reducing the burden on healthcare providers. This will lead to a more agile healthcare environment that can quickly adapt to ongoing changes in policies and member needs.

In conclusion, the ongoing advancements in AI and the restructuring of payer systems underscore the necessity for healthcare organizations to adopt member-first care strategies. By embracing innovation, leveraging real-time data, and fostering collaborative partnerships, the healthcare industry can navigate these changes effectively and enhance care delivery for dual-eligible members.

Context
Large Language Models (LLMs) have become important tools for developers, particularly those working on Apple platforms. Integrating them remains difficult, however, because each model provider exposes its own API with its own requirements. This friction discourages developers from exploring local, open-source models. AnyLanguageModel aims to streamline integration and make LLMs easier to use for developers targeting Apple’s ecosystem.
Main Goal and Its Achievement
The primary objective of AnyLanguageModel is to simplify LLM integration by providing a unified API that supports multiple model providers. Developers swap their existing Foundation Models import statement for a single AnyLanguageModel import and keep a consistent interface regardless of the underlying model. This reduces the technical overhead of switching between providers and encourages the adoption of local, open-source models that run well on Apple devices.
Advantages of AnyLanguageModel
Simplified Integration: Developers can switch from importing Apple’s Foundation Models to AnyLanguageModel with minimal code changes.
Support for Multiple Providers: The framework supports a range of model providers, including Core ML, MLX, and cloud services such as OpenAI and Anthropic, so developers can choose the models that best fit their needs.
Reduced Experimentation Costs: With lower technical barriers and easier access to local models, developers can experiment more freely and discover new applications for AI in their projects.
Optimized Local Performance: The focus on local model execution, particularly through frameworks like MLX, makes efficient use of Apple’s hardware while preserving user privacy.
Modular Design: Swift package traits let developers include only the dependencies they need, which limits dependency bloat in their applications.
Caveats and Limitations
AnyLanguageModel has limitations. Because it relies on Apple’s Foundation Models framework, any constraints or delays in that framework’s development may directly affect AnyLanguageModel’s capabilities. In addition, although it aims to support a wide range of models, performance and functionality vary with the specific model used and how well it integrates with Apple’s hardware.
Future Implications
As more capable LLMs are developed and integrated into applications, they will likely change how developers approach software design. Future enhancements to AnyLanguageModel may include improved support for multimodal interactions, in which models process both text and images, broadening the range of possible applications. As AI technology matures, demand for simpler integration frameworks is likely to grow, which could make AnyLanguageModel an important part of the developer ecosystem for AI on Apple platforms.
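The idea of a unified API over several model providers can be illustrated with a small, language-neutral sketch. The Python below is not the AnyLanguageModel API (which is a Swift package); the names LanguageModel, EchoLocalModel, UppercaseCloudModel, and Session are hypothetical, and the two "models" are stand-ins that perform no real inference or network calls. It only shows how application code can stay unchanged while the provider behind a shared interface is swapped.

```python
from dataclasses import dataclass
from typing import Protocol


class LanguageModel(Protocol):
    """Hypothetical shared interface that every provider adapter satisfies."""

    def respond(self, prompt: str) -> str: ...


@dataclass
class EchoLocalModel:
    """Stand-in for a local, on-device model (no real inference happens)."""

    name: str = "local-echo"

    def respond(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


@dataclass
class UppercaseCloudModel:
    """Stand-in for a hosted provider (no network call is made)."""

    name: str = "cloud-upper"

    def respond(self, prompt: str) -> str:
        return f"[{self.name}] {prompt.upper()}"


@dataclass
class Session:
    """Application code depends only on the shared interface."""

    model: LanguageModel

    def ask(self, prompt: str) -> str:
        return self.model.respond(prompt)


# Swapping providers changes one constructor argument, not the calling code.
local_reply = Session(EchoLocalModel()).ask("hello")
cloud_reply = Session(UppercaseCloudModel()).ask("hello")
print(local_reply)
print(cloud_reply)
```

The design choice worth noting is that Session never inspects which provider it holds, which is what keeps the switching cost low.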

Context
The political landscape in the United States continues to evolve, particularly in light of recent events surrounding former President Donald Trump. The attention drawn by his indictments is offered here as a parallel to sports analytics, where data and performance analysis drive decision-making. In sports, the adoption of Artificial Intelligence (AI) is transforming how data is processed and interpreted, giving coaches, analysts, and enthusiasts far more information to work with. This blog post explores the implications of AI in sports analytics and its relevance to sports data enthusiasts, drawing parallels with the shifts in support observed in Trump’s GOP primary campaign.
Main Goal and Its Achievement
The primary objective of analyzing the fluctuations in Trump’s support is to understand how significant events, such as indictments, can influence public perception and voter behavior. Similarly, AI in sports analytics seeks to improve the accuracy of performance predictions and game strategies, leading to better outcomes for teams and athletes. Achieving this requires a robust framework that applies data algorithms and machine learning techniques to large volumes of sports data.
Advantages of AI in Sports Analytics
Enhanced Data Processing: AI systems can analyze extensive datasets rapidly, identifying patterns and trends that human analysts might overlook. This allows teams to make data-driven decisions based on real-time performance metrics.
Predictive Analytics: Machine learning algorithms can forecast future performance from historical data. These forecasts can guide training regimens and game strategies.
Injury Prevention: AI can analyze player movements and biometrics to detect potential injury risks. By monitoring these indicators, organizations can take preventive measures to reduce injury rates among athletes.
Fan Engagement: AI-driven analytics can enhance fan experiences by providing insights into player performances and game statistics, fostering deeper connections between fans and their teams.
Better Recruitment: AI tools can assist in scouting talent by evaluating player performance metrics across various leagues, helping teams make informed recruitment decisions.
Caveats and Limitations
Implementing AI in sports analytics comes with caveats. Data quality is paramount: inaccurate or incomplete data can lead to erroneous conclusions. Furthermore, reliance on AI may reduce the human element in coaching and management, leading to oversights in strategy that require a nuanced understanding beyond the numbers.
Future Implications
The future of AI in sports analytics appears promising. As machine learning algorithms become more sophisticated, AI will likely deliver more precise predictions and insights. As more sports organizations adopt AI, the competitive landscape will shift, and teams will need not only to adopt these technologies but also to keep innovating to maintain their edge. This evolution will affect team dynamics and performance and will also influence how fans engage with their favorite sports and athletes.
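The predictive analytics point above (forecasting future performance from historical data) can be made concrete with the simplest possible model. The sketch below fits an ordinary least-squares line to a player's past results and extrapolates one game ahead. The data and the names fit_line, forecast, games, and points are invented for illustration; real systems would use richer features and models than a straight-line trend.

```python
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("need at least two distinct x values")
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept


def forecast(xs, ys, next_x):
    """Extrapolate the fitted trend line to a future x value."""
    slope, intercept = fit_line(xs, ys)
    return slope * next_x + intercept


# Illustrative (made-up) history: points scored in games 1 through 5.
games = [1, 2, 3, 4, 5]
points = [10.0, 12.0, 14.0, 16.0, 18.0]

predicted = forecast(games, points, 6)
print(f"Forecast for game 6: {predicted:.1f} points")
```

A straight-line trend also shows why the data-quality caveat matters: a single mistyped score shifts both the slope and every forecast derived from it.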

OpenCV.js, a JavaScript/WebAssembly port of the OpenCV library, is changing how computer vision applications are built and deployed, particularly real-time webcam filters. By leveraging WebAssembly, OpenCV.js performs visual processing directly in the browser, with no complex installation or native dependencies. This supports a wide range of visual effects, from face blurring to artistic transformations, across many kinds of devices. The following sections cover the significance of OpenCV.js in computer vision and image processing, along with its applications and implications for vision scientists.
1. Understanding OpenCV.js
OpenCV.js serves as a bridge between traditional computer vision techniques and modern web technologies. Because the OpenCV library is compiled to WebAssembly, operations such as image filtering, matrix manipulation, and video capture can run in the browser. This has the potential to make sophisticated computer vision applications available to a much broader audience.
2. The Importance of Real-Time Processing
Before OpenCV.js, many computer vision tasks were confined to backend environments, typically written in languages like Python or C++. Sending frames to a server introduced latency and made real-time interaction difficult. OpenCV.js instead processes images and video directly within the browser. This is particularly beneficial in fields such as teleconferencing, gaming, and online education, where real-time feedback is essential.
3. Key Advantages of OpenCV.js
Cross-Platform Compatibility: OpenCV.js runs in all modern browsers that support WebAssembly, regardless of the underlying operating system.
Real-Time Performance: WebAssembly enables near-native execution speeds, allowing complex visual transformations to run smoothly at high frame rates.
User-Friendly Deployment: Because it runs entirely in the browser, OpenCV.js requires no installation, which simplifies deployment for end-users and developers alike.
Enhanced Interactivity: The library integrates with HTML and Canvas elements, supporting interactive user interfaces that respond dynamically to user input.
However, there are limitations. Performance can vary significantly depending on the device and browser in use. Some advanced features available in native OpenCV may be absent from the JavaScript version, and WebAssembly may struggle on lower-end hardware.
4. Future Implications of AI Developments
The combination of OpenCV.js with emerging AI technologies opens new possibilities for computer vision applications. As AI evolves, integrating deep learning models into web-based platforms will extend what real-time image processing can do. For instance, neural networks for object detection and recognition will enable more sophisticated filtering effects and user interactions. Advances in AI will also likely lead to more optimized algorithms, improving the performance and responsiveness of real-time applications.
5. Conclusion
OpenCV.js offers powerful tools for real-time image processing directly within web browsers. By making advanced visual effects accessible without setup or installation, it opens the way for innovation in a range of industries. As developments in AI continue to shape this landscape, the potential for more sophisticated applications will expand, creating opportunities for vision scientists and developers alike.
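To make "image filtering" concrete, the sketch below implements a 3x3 box blur, the kind of neighbourhood averaging that underlies blur-style webcam filters. It is plain Python over a nested list of grayscale values and does not use the OpenCV.js API; in practice the library's built-in filtering functions would do this work on image matrices. The function name box_blur, the border handling (averaging only the neighbours that exist), and the tiny frame are assumptions chosen for illustration.

```python
def box_blur(image):
    """Apply a 3x3 mean filter to a grayscale image given as a list of rows.

    Border pixels are averaged over the neighbours that actually exist,
    so the output has the same dimensions as the input.
    """
    height, width = len(image), len(image[0])
    blurred = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            total, count = 0, 0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    ny, nx = y + dy, x + dx
                    if 0 <= ny < height and 0 <= nx < width:
                        total += image[ny][nx]
                        count += 1
            blurred[y][x] = total / count
    return blurred


# A single bright pixel on a dark background.
frame = [
    [0, 0, 0],
    [0, 90, 0],
    [0, 0, 0],
]

result = box_blur(frame)
for row in result:
    print(row)
```

Running one such pass per video frame is what makes execution speed matter: the inner loops touch every pixel nine times, which is the sort of work that benefits from near-native WebAssembly performance.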

Context of Production-Ready Data Applications
Building production-ready data applications poses significant challenges, particularly due to the complexity of managing multiple tools involved in hosting the application, managing the database, and facilitating data movement across various systems. Each of these components introduces additional overhead in terms of setup, maintenance, and deployment. Databricks addresses these challenges by providing a unified platform that integrates these functionalities. This consolidation is achieved through the Databricks Data Intelligence Platform, which encompasses Databricks Apps for running web applications on serverless compute, Lakebase for managed PostgreSQL database solutions, and the capability to use Databricks Asset Bundles (DABs) for streamlined deployment processes. Together, these components allow data applications to be built and deployed that sync data from Unity Catalog to Lakebase, enabling efficient and rapid access to governed data.
Main Goals and Achievements
The primary goal articulated in the original blog post is to simplify the process of building and deploying data applications. This is accomplished through the integration of Databricks Apps, Lakebase, and DABs, which collectively reduce the complexities associated with separate toolsets. By consolidating these functionalities, organizations can achieve a streamlined development process that facilitates rapid iteration and deployment without the overhead typically involved in managing disparate systems.
Advantages of Using Databricks for Data Applications
1. **Unified Platform**: The integration of hosting, database management, and data movement into a single platform minimizes the complications usually associated with deploying data applications. This reduces the need for multiple tools and the resultant complexity.
2. **Serverless Compute**: Databricks Apps enable the deployment of web applications without the need to manage the underlying infrastructure, allowing developers to focus on application development rather than operational concerns.
3. **Managed Database Solutions**: Lakebase offers a fully managed PostgreSQL database that syncs with Unity Catalog, ensuring that applications have rapid access to up-to-date and governed data.
4. **Streamlined Deployment with DABs**: The use of Databricks Asset Bundles allows for the packaging of application code, infrastructure, and data pipelines, which can be deployed with a single command. This reduces deployment times and enhances consistency across development, staging, and production environments.
5. **Real-Time Data Synchronization**: The automatic syncing of tables between Unity Catalog and Lakebase ensures that applications can access live data without the need for custom Extract, Transform, Load (ETL) processes, thereby enhancing data freshness and accessibility.
6. **Version Control**: DABs facilitate version-controlled deployments, allowing teams to manage changes effectively and reduce the risk of errors during deployment.
Considerations and Limitations
While the advantages are compelling, certain considerations must be taken into account:
- **Cost Management**: Utilizing serverless architecture and a managed database may incur costs that require careful monitoring to avoid overspending, particularly in high-demand scenarios.
- **Complexity of Migration**: Transitioning existing applications to the Databricks platform may involve significant effort, particularly for legacy systems that require re-engineering.
- **Training Requirements**: Teams may need to undergo training to effectively leverage the Databricks ecosystem, which could introduce initial delays.
Future Implications and AI Developments
As artificial intelligence (AI) continues to evolve, its integration within data applications is poised to enhance the capabilities of platforms like Databricks. Future advancements in AI may lead to:
- **Automated Data Management**: AI-driven tools could automate the monitoring and optimization of data flows, further reducing the need for manual intervention and enhancing operational efficiency.
- **Predictive Analytics**: Enhanced analytics capabilities could enable organizations to derive insights and predictions from data in real-time, fostering more informed decision-making.
- **Natural Language Processing (NLP)**: AI advancements in NLP could allow non-technical users to interact with data through conversational interfaces, democratizing data access and usability.
In conclusion, the landscape of data application development is rapidly evolving, with platforms like Databricks reducing complexity and improving productivity. As the integration of AI progresses, the potential to further streamline processes and elevate the capabilities of data applications will be significant, positioning organizations to leverage their data assets more effectively.