Context In a recent presentation during the XR edition of The Android Show, Google unveiled a series of updates and new features for its mixed reality operating system, Android XR. While the primary focus of these announcements was on developers, the implications of these advancements extend to various hardware platforms, such as Samsung’s Galaxy XR headset and Xreal’s Project Aura smart glasses. Through demonstrations of these devices, significant enhancements in the ecosystem of head-mounted displays were showcased, highlighting the potential future of mixed reality technology. Main Goal and Achievement The primary objective of Google’s efforts in advancing Android XR is to create a robust and flexible framework that supports the development of mixed reality applications. This can be achieved by simplifying the development process for existing applications, ensuring compatibility with a diverse range of hardware, and integrating advanced features that enhance user experience. By focusing on creating a seamless transition between Bluetooth and Wi-Fi connectivity, as well as leveraging existing Android notification systems for UI design, Google aims to foster an environment where developers can efficiently build and adapt their applications for next-generation smart devices. Structured Advantages of Android XR Enhanced Developer Flexibility: Google’s commitment to supporting diverse hardware designs allows developers to create applications that work across a wide range of devices, from lightweight smart glasses to full-fledged VR headsets. This adaptability is crucial for fostering innovation within the mixed reality space. Interoperability with Existing Applications: By utilizing existing Android code for notifications and creating a minimalist UI for smart glasses, developers can port their applications without significant modifications. This reduces barriers to entry for developers and encourages the growth of the application ecosystem. Seamless Connectivity: The ability of Android XR devices to switch effortlessly between Bluetooth and Wi-Fi connections ensures that users experience minimal disruptions during their interactions, thereby enhancing usability and engagement. Advanced AI Integration: The integration of AI, particularly through features like Gemini, allows for innovative functionalities such as real-time context recognition and enhanced user interaction, opening new possibilities for application development and user engagement. Caveats and Limitations While the advancements brought forth by Android XR are promising, there are inherent limitations. The reliance on existing Android infrastructure may lead to performance constraints in certain applications, particularly those requiring high computational power. Additionally, as the mixed reality landscape evolves, there may be challenges in maintaining uniform standards across disparate devices, which could hinder the seamless user experience that Google aims to provide. Future Implications of AI Developments As AI technologies continue to advance, their integration into mixed reality systems will likely redefine user interaction paradigms. The ability of devices like smart glasses to understand human gestures and context will enhance user engagement and make interactions feel more organic and intuitive. Furthermore, the emergence of realistic avatars, such as Google’s Likeness, promises to transform virtual collaboration by providing users with lifelike representations, thereby fostering a greater sense of presence in virtual environments. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

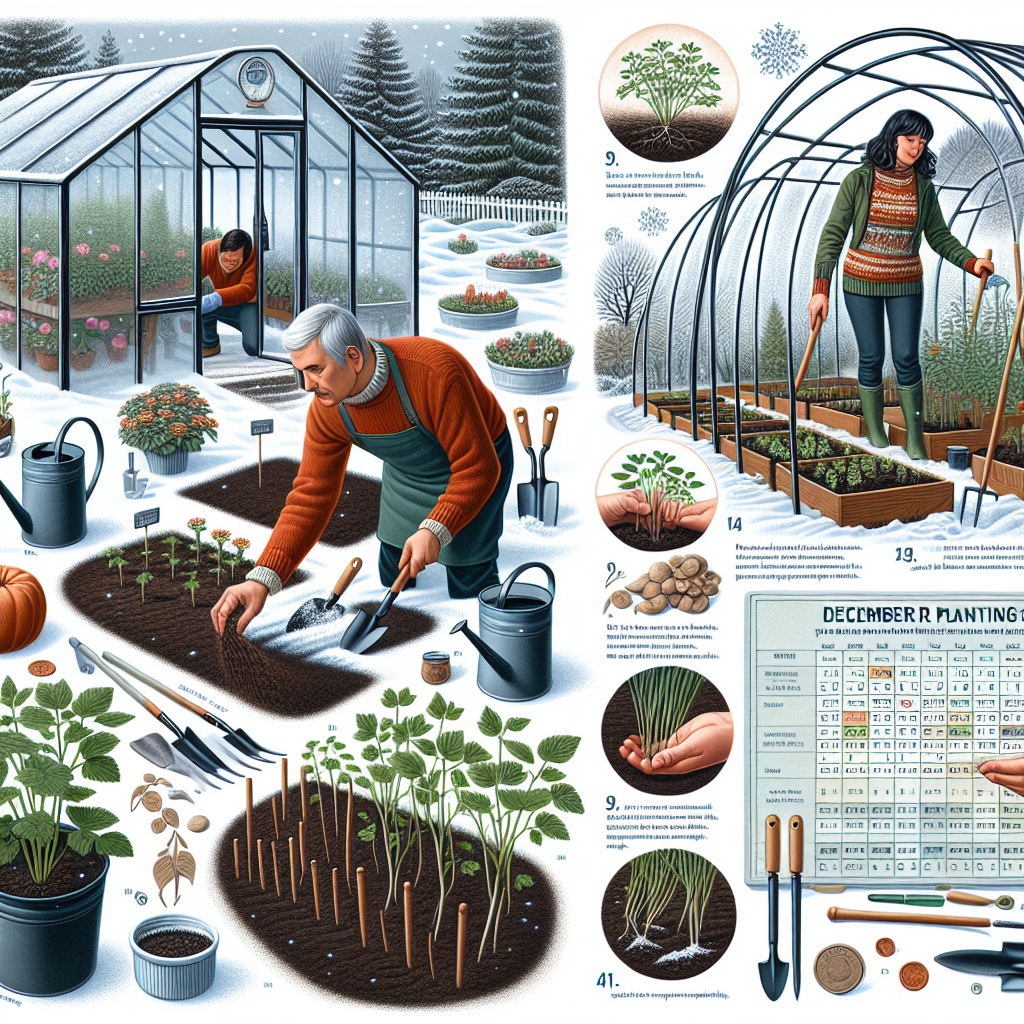

Introduction Winter presents significant challenges for herbaceous plants, particularly in regions experiencing extreme cold. Traditional cultivation methods often lead to diminished yields or complete crop failure during the winter months. However, innovative solutions such as cold frames, hoop houses, and covered rows can mitigate these challenges, enabling agricultural practitioners to cultivate crops even in December. This approach not only extends the growing season but also allows for the cultivation of cold-tolerant species, thus enhancing food security and sustainability within the AgriTech sector. Key Objective and Implementation The primary goal of utilizing cold frames and hoop houses in December is to create a conducive microclimate for growing cold-hardy crops. This can be achieved by ensuring that the structure is appropriately designed for the local climate and by selecting crops that can withstand low temperatures. Proper setup will allow for significant temperature increases inside the structures, often reaching 50°F (10°C) above the external environment. Farmers can thus plan their planting schedules to capitalize on these favorable conditions. Advantages of Utilizing Cold Frames and Hoop Houses Extended Growing Season: Cold frames and hoop houses allow for the cultivation of crops beyond the traditional growing season, which can lead to increased yield and profitability. The ability to harvest crops such as carrots and beets as early as March or April demonstrates this potential. Efficient Resource Use: These structures can be constructed from readily available and repurposed materials, reducing costs associated with agricultural infrastructure. This is particularly advantageous for small-scale farmers and startups in the AgriTech domain. Improved Crop Quality: Crops grown in these protected environments often exhibit higher quality due to reduced exposure to harsh weather conditions. For instance, crops like spinach and kale can develop enhanced flavors and nutrients when grown under cover. Market Diversification: The ability to grow specialty crops during winter months opens new avenues for farmers to diversify their product offerings, catering to local markets and restaurants seeking fresh produce year-round. Considerations and Limitations While there are numerous advantages, certain caveats must be considered. The effectiveness of cold frames and hoop houses is contingent upon proper temperature management and ventilation. In regions with extreme cold, it is essential to ensure that the structures are well-sealed to retain heat. Additionally, the initial setup may require an investment of time and resources, which could be a barrier for some farmers. Regular monitoring and adjustment are necessary to prevent overheating during sunnier days, which can be detrimental to crops. Future Implications: The Role of AI in AgriTech The integration of artificial intelligence (AI) in agriculture is poised to revolutionize practices such as those involving cold frames and hoop houses. AI technologies can enhance environmental monitoring, allowing for real-time adjustments to temperature and humidity levels, optimizing growing conditions for various crops. Furthermore, predictive analytics can assist farmers in making data-driven decisions regarding planting schedules and crop varieties, thereby maximizing yield and minimizing waste. As AI continues to evolve, we may witness advancements in automated systems for managing cold frames and hoop houses, reducing labor costs while enhancing precision in agricultural practices. The future of winter crop cultivation appears promising, as these innovations will enable farmers to adapt more readily to climate variability and consumer demand for fresh produce. Conclusion In summary, employing cold frames and hoop houses during December presents a viable strategy for overcoming the challenges posed by winter conditions in agriculture. By focusing on the cultivation of cold-tolerant crops and leveraging modern technology, agricultural innovators can not only improve their productivity but also contribute to a more sustainable food system. The growing integration of AI in agriculture further enhances this potential, promising a future where winter crop cultivation is both efficient and profitable. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Context and Importance of AI Tools in Applied Machine Learning The advent of Artificial Intelligence (AI) has significantly transformed various industries, particularly in the realm of content creation. As we approach 2025, the integration of AI tools has become imperative for professionals aiming to enhance their content generation capabilities. The applied machine learning (ML) landscape is experiencing a paradigm shift where AI tools can facilitate efficient content creation, thereby streamlining workflows and enhancing creative outputs. The demand for innovative content solutions necessitates the utilization of AI technologies, which serve as essential enablers for content creators and marketers alike. Main Goals of Utilizing AI Tools The primary objective of leveraging AI tools in the content creation process is to augment productivity while maintaining high-quality output. By employing advanced machine learning algorithms, these tools can generate ideas, optimize content for search engines, and ensure adherence to brand guidelines. Consequently, practitioners can focus on their core creative processes, resulting in enhanced efficiency and effectiveness. The integration of AI tools facilitates a comprehensive approach to content creation, enabling users to keep pace with the growing demands of digital marketing and audience engagement. Structured Advantages of AI Tools Increased Efficiency: AI tools automate repetitive tasks, such as content formatting and optimization, allowing creators to allocate more time to strategic decision-making and creative processes. Enhanced Creativity: By providing data-driven insights and suggestions, AI tools can inspire new content ideas, encouraging innovation in content strategy. Improved Quality: Advanced algorithms can analyze vast datasets to inform best practices in content creation, ensuring that outputs are not only relevant but also resonate with target audiences. Scalability: AI technologies enable practitioners to produce content at scale without compromising quality, essential for meeting the demands of various marketing channels. Cost-Effectiveness: By streamlining workflows and reducing the time required for content production, organizations can achieve significant cost savings, allowing for reinvestment in other strategic initiatives. Caveats and Limitations: Although AI tools offer numerous advantages, it is crucial to acknowledge their limitations. The reliance on AI for content creation may result in a loss of personal touch and nuanced understanding that human creators bring. Additionally, the effectiveness of AI tools is contingent upon the quality of input data; poor data quality can lead to suboptimal outputs. Future Implications of AI Developments in Content Creation The trajectory of AI advancements suggests a future where machine learning will continue to refine content creation processes. As algorithms become more sophisticated, we can anticipate personalized content experiences tailored to individual user preferences. This evolution will not only enhance audience engagement but also redefine the parameters of successful content marketing strategies. Moreover, as natural language processing (NLP) technologies improve, AI tools will increasingly enable seamless content generation that closely mimics human writing styles, thereby blurring the lines between human and machine-generated content. In conclusion, the integration of AI tools into content creation processes holds significant promise for practitioners in the applied machine learning field. By embracing these technologies, content creators can enhance their productivity and creativity while preparing for the future landscape of digital marketing. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Contextual Evolution of Embeddings The evolution of embeddings has marked a significant milestone in the field of Natural Language Processing (NLP) and understanding. From the foundational count-based methods such as Term Frequency-Inverse Document Frequency (TF-IDF) and Word2Vec to the sophisticated context-aware models like BERT and ELMo, the journey reflects an ongoing effort to capture the nuanced semantics of language. Modern embeddings are not merely representations of word occurrences; they encapsulate the intricate relationships between words, enabling machines to comprehend human language more effectively. Such advancements empower various applications, including search engines and recommendation systems, enhancing their ability to interpret user intent and preferences. Main Goals and Achievements The primary goal of this evolution is to develop embeddings that not only provide numerical representations of words but also enrich the contextual understanding of language. Achieving this involves leveraging advanced models that analyze entire sentences or even paragraphs, capturing semantic meaning that traditional methods fail to recognize. The integration of embeddings into machine learning workflows enables a range of applications, from improving search accuracy to enhancing the performance of AI-driven chatbots. Structured Advantages of Modern Embedding Techniques Contextual Understanding: Advanced models like BERT and ELMo offer bidirectional context analysis, allowing for more accurate interpretations of words based on their surrounding terms. Versatility: Techniques such as FastText and Doc2Vec extend embeddings beyond single words to phrases and entire documents, enhancing their application scope in various NLP tasks. Performance Optimization: Leaderboards like the Massive Text Embedding Benchmark (MTEB) facilitate the identification of the best-performing models for specific tasks, streamlining the selection process for practitioners. Open-source Accessibility: Platforms like Hugging Face provide developers with access to cutting-edge embeddings and models, democratizing the use of advanced NLP technologies. Important Caveats and Limitations Computational Demands: Many state-of-the-art embedding models require significant computational resources for both training and inference, which may limit their accessibility for smaller organizations or individual researchers. Data Dependency: The quality and performance of embeddings are often contingent upon the quality of the training data; poorly curated datasets can lead to suboptimal outcomes. Static Nature of Certain Models: While models like Word2Vec and GloVe provide effective embeddings, they do not account for context, leading to potential ambiguities in understanding polysemous words. Future Implications Looking ahead, the advancements in AI and machine learning are poised to further enhance the capabilities of embeddings in Natural Language Understanding. As models become more sophisticated, the integration of multimodal data—combining text with visual and auditory information—will likely become commonplace. This shift will enable richer semantic representations and deeper insights into human communication patterns. Moreover, ongoing research is expected to focus on reducing the computational burden of advanced models, making them more accessible to a wider audience. The implications for NLP professionals are profound, as these developments will not only expand the horizons of what can be achieved with embeddings but also foster innovative applications across various domains. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

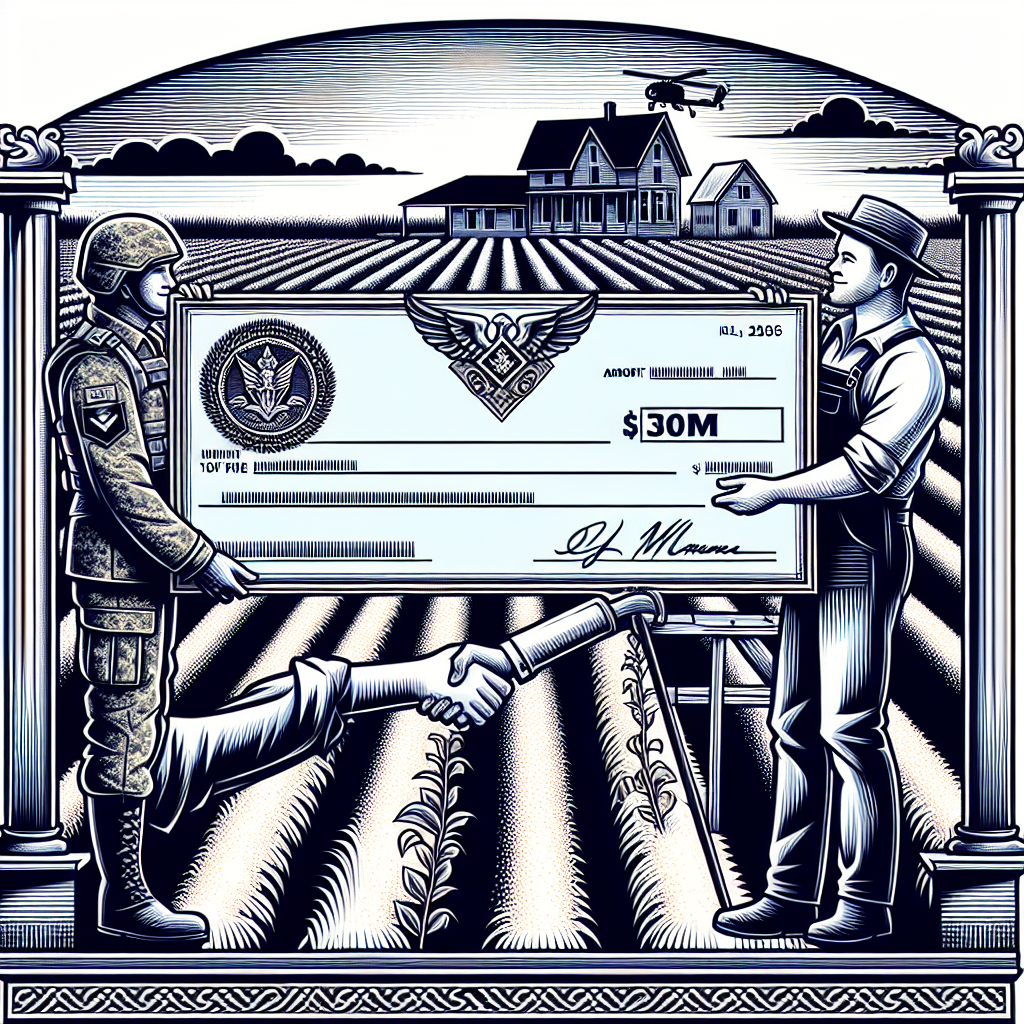

Context In recent years, the intersection of charitable organizations and for-profit enterprises has sparked considerable discussion regarding transparency and ethical practices. A notable case is the operational model of Wreaths Across America (WAA), which has generated over $30 million annually while procuring its wreaths exclusively from the Worcester Wreath Company, owned by the charity’s founders. This association raises critical questions about the implications of such business relationships within the non-profit sector, particularly in terms of accountability and donor trust. As organizations increasingly leverage data analytics to enhance operational efficiency and transparency, a closer examination of these dynamics is essential for data engineers operating in this landscape. Main Goals and Achievements The primary goal of Wreaths Across America is to honor and remember military personnel and their families while educating the public about their contributions. This objective is primarily achieved through the annual distribution of wreaths at cemeteries across the United States, a mission that has expanded significantly since its inception. The charity’s model demonstrates the power of leveraging community volunteerism and corporate partnerships to fulfill its objectives, despite the potential conflicts of interest arising from its close ties to a for-profit supplier. Structured Advantages Community Engagement: The WAA mobilizes nearly 3 million volunteers annually, fostering a deep sense of community and shared purpose while honoring veterans. This level of engagement exemplifies how data-driven insights can optimize volunteer management and event logistics. Financial Contributions to Local Charities: Over the past 15 years, WAA has raised $22 million for local civic and youth organizations through its wreath sales, highlighting the ripple effect of charitable initiatives on local economies. Awareness and Education: The organization’s outreach and educational events throughout the year serve to enhance public knowledge about military history and veterans’ issues, thus fulfilling its educational mission. Transparency in Operations: WAA has publicly disclosed its financial dealings with Worcester Wreath, a practice that, while scrutinized, demonstrates a commitment to transparency and compliance with regulatory standards. Potential for Growth: The operational model of WAA suggests that similar organizations could replicate its success by leveraging partnerships and volunteer engagement, leading to expanded outreach and funding opportunities. Future Implications The trajectory of organizations like WAA indicates that developments in artificial intelligence (AI) will significantly impact data analytics in the charitable sector. As AI technologies continue to evolve, they will provide data engineers with advanced tools for predictive analytics, enabling organizations to forecast volunteer turnout, optimize resource allocation, and refine marketing strategies. Furthermore, AI can enhance transparency and accountability by automating reporting processes, thus addressing potential conflicts of interest more effectively. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Context The recent introduction of the swift-huggingface Swift package represents a significant advancement in the accessibility and usability of the Hugging Face Hub. This new client aims to optimize the development experience for users working with Generative AI models and applications. By addressing prevalent issues associated with previous implementations, swift-huggingface enhances the efficiency and reliability of model management for developers, especially for those involved in the dynamic loading of large model files. Main Goals and Achievements The primary objective of the swift-huggingface package is to facilitate a seamless interaction with the Hugging Face Hub, improving how developers access and utilize machine learning models. This goal is achieved through several key enhancements: **Complete coverage of the Hub API**: This enables developers to interact with various resources, including models, datasets, and discussions, in a unified manner. **Robust file handling**: The package offers features like progress tracking and resume support for downloads, addressing the common frustration of interrupted downloads. **Shared cache compatibility**: By enabling a cache structure compatible with the Python ecosystem, swift-huggingface ensures that previously downloaded models can be reused without redundancy. **Flexible authentication mechanisms**: The introduction of the TokenProvider pattern simplifies how authentication tokens are managed, catering to diverse use cases. Advantages The swift-huggingface package provides numerous advantages, particularly for Generative AI scientists and developers: **Improved Download Reliability**: By incorporating robust error handling and download resumption capabilities, users can efficiently manage large model files without the risk of data loss. **Enhanced Developer Experience**: The new authentication framework and comprehensive API coverage streamline the integration process, allowing developers to focus on building applications rather than managing backend complexities. **Cross-Platform Model Sharing**: The compatibility with Python caches reduces redundancy and encourages collaboration across different programming environments, thus fostering a more integrated development ecosystem. **Future-Proof Architecture**: The ongoing development, including the integration of advanced storage backends like Xet, promises enhanced performance and scalability for future applications. Future Implications The swift-huggingface package not only addresses current challenges but also sets the stage for future advancements in AI development. As the field of Generative AI continues to evolve, the package’s architecture is designed to adapt, supporting the integration of cutting-edge technologies and methodologies. This adaptability will empower AI scientists to explore novel applications, enhance model performance, and ultimately drive innovation across various domains, from natural language processing to computer vision. Conclusion In summary, the swift-huggingface package represents a significant leap forward in the Swift ecosystem for AI development. By enhancing the client experience with improved reliability, shared compatibility, and robust authentication, it lays a solid foundation for future innovations in Generative AI models and applications. As researchers and developers increasingly rely on sophisticated machine learning tools, initiatives like swift-huggingface will be critical in shaping the landscape of AI technology. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Contextualizing Data Security in AI Strategy The integration of data and artificial intelligence (AI) has transformed numerous sectors, enhancing decision-making processes and operational efficiencies. However, as organizations increasingly adopt generative AI solutions, the necessity for a robust security framework becomes paramount. Nithin Ramachandran, the Global Vice President for Data and AI at 3M, underscores the evolving landscape of security considerations, emphasizing that the assessment of security posture should precede functionality in the deployment of AI tools. This shift in perspective highlights the complexities faced by organizations as they strive to balance innovation with risk management. Main Goal and Achieving Security in AI Integration The principal aim articulated in discussions surrounding the intersection of data management and AI strategy is the establishment of a secure operational framework that fosters innovation while mitigating risks. This can be achieved through a multi-faceted approach that includes: comprehensive security assessments, the implementation of advanced security protocols, and continuous monitoring of AI systems. Organizations must prioritize security measures that are adaptable to the fast-evolving AI landscape, ensuring that both data integrity and privacy are preserved. Advantages of Implementing a Secure AI Strategy Enhanced Data Integrity: Prioritizing security from the outset ensures that data remains accurate and trustworthy, which is critical for effective AI model training. Regulatory Compliance: Adhering to security protocols helps organizations meet legal and regulatory requirements, reducing the risk of penalties associated with data breaches. Increased Stakeholder Confidence: A solid security posture fosters trust among stakeholders, including customers and investors, who are increasingly concerned about data privacy. Risk Mitigation: By integrating security into the AI development lifecycle, organizations can proactively identify vulnerabilities and implement corrective measures before breaches occur. However, it is crucial to recognize limitations, such as the potential for increased operational costs and the need for continuous training of personnel to keep pace with rapidly evolving security technologies. Future Implications of AI Developments on Security The future of AI integration in organizational strategies will undoubtedly be shaped by advancements in both technology and security measures. As AI continues to evolve, the sophistication of potential threats will also increase, necessitating a corresponding enhancement in security frameworks. Organizations will need to adopt a proactive stance, leveraging emerging technologies such as AI-driven security protocols to anticipate and mitigate risks. Furthermore, ongoing research in AI ethics and governance will play a crucial role in defining security standards that align with societal expectations and legal requirements. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Context of Emerging Cybersecurity Threats Recent advancements in artificial intelligence (AI) have catalyzed a new wave of cybersecurity threats, particularly through mechanisms that exploit the capabilities of agentic browsers. A notable instance is the zero-click agentic browser attack that targets the Perplexity Comet browser, as identified by researchers from Straiker STAR Labs. This attack exemplifies how seemingly benign communications, such as crafted emails, can lead to catastrophic outcomes, including the complete deletion of a user’s Google Drive contents. The attack operates by leveraging the integration of browsers with services like Gmail and Google Drive, enabling automated actions that can inadvertently compromise user data. Main Goal of the Attack and Mitigation Strategies The primary objective of this attack is to manipulate AI-driven browser agents into executing harmful commands without explicit user consent or awareness. This manipulation is facilitated by natural language instructions embedded within emails, which the browser interprets as legitimate requests for routine housekeeping tasks. To mitigate such risks, it is crucial to implement robust security measures that encompass not only the AI models themselves but also the agents, their integrations, and the natural language processing components that interpret user commands. Organizations must adopt a proactive stance in fortifying their systems against these zero-click data-wiper threats. Advantages of Understanding AI-Driven Cyber Threats Enhanced Awareness: Understanding the mechanics of these attacks allows cybersecurity experts to identify vulnerabilities in AI systems and develop tailored defense mechanisms. Improved Incident Response: By recognizing the potential for zero-click attacks, organizations can streamline their incident response protocols to address threats more effectively. Strategic Resource Allocation: Awareness of such threats enables organizations to allocate resources more efficiently towards securing high-risk areas, such as email communications and AI integrations. Advanced Training Opportunities: Insights gained from analyzing these attacks can inform training programs for cybersecurity personnel, enhancing their capability to respond to emerging threats. Limitations and Caveats Despite the advantages, there are inherent limitations in addressing these threats. The dynamic nature of AI and machine learning technologies means that new vulnerabilities can emerge rapidly, potentially outpacing existing defense strategies. Furthermore, the reliance on user compliance and awareness can lead to gaps in security if users do not recognize the risks associated with seemingly benign actions. Future Implications of AI Developments in Cybersecurity The continuous evolution of AI technologies will likely exacerbate the complexities surrounding cybersecurity. As AI becomes more integrated into everyday applications, the potential for exploitation through sophisticated attacks will increase. It is imperative for cybersecurity experts to stay abreast of these developments, adapting their strategies to counteract emerging threats effectively. Additionally, the integration of AI in cybersecurity may lead to the creation of smarter defense mechanisms capable of predicting and neutralizing threats before they manifest. However, this progression also necessitates a vigilant approach to ensure that AI systems themselves do not become conduits for malicious activities. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Introduction Artificial Intelligence (AI) is increasingly embedded in the healthcare landscape, facilitating improved patient outcomes and operational efficiencies. Central to this advancement are large language models (LLMs) that underpin numerous AI applications in health and medicine. However, the implementation of LLMs involves significant computational demands, particularly in terms of memory and processing power. This blog post highlights how optimizing mixed-input matrix multiplication can enhance the efficiency of LLMs in healthcare applications, thus benefiting HealthTech professionals. Main Goal and Implementation The primary objective of optimizing mixed-input matrix multiplication performance is to enable efficient utilization of memory and computational resources when deploying LLMs. This optimization can be achieved by utilizing specialized hardware accelerators, such as NVIDIA’s Ampere architecture, which support advanced matrix operations. By implementing software techniques that facilitate data type conversion and layout conformance, mixed-input matrix multiplication can be effectively executed on these hardware platforms, thereby improving the overall performance of AI applications in healthcare. Advantages of Mixed-Input Matrix Multiplication Optimization Reduced Memory Footprint: Utilizing narrower data types (e.g., 8-bit integers) significantly decreases the memory requirements for storing model weights, resulting in a fourfold reduction compared to single-precision floating-point formats. Enhanced Computational Efficiency: By leveraging mixed-input operations, models can achieve acceptable accuracy levels while utilizing lower precision for weights, thus improving overall computational efficiency. Improved Hardware Utilization: Optimized implementations allow for more effective mapping of matrix multiplication to specialized hardware, ensuring that the full capabilities of accelerators like NVIDIA GPUs are utilized. Scalability: The techniques discussed enable scalable implementations of AI models, making them more accessible for deployment in various healthcare settings, from research institutions to clinical environments. Open-Source Contributions: The methods and techniques developed are shared through open-source platforms, facilitating widespread adoption and further innovation within the HealthTech community. Limitations and Caveats While the advantages of optimizing mixed-input matrix multiplication are substantial, there are limitations to consider. The complexity of implementing these techniques requires a strong understanding of both software and hardware architectures, which may pose challenges for some organizations. Additionally, while mixed-input operations allow for reduced precision, this may introduce trade-offs regarding the accuracy of outcomes, necessitating thorough validation in clinical applications. Future Implications for AI in HealthTech The continued advancement of AI technologies, particularly in the context of LLMs and matrix multiplication optimizations, is poised to reshape the healthcare landscape significantly. As these technologies mature, we can expect: Increased Integration: AI systems will become more integrated into clinical workflows, providing real-time analytics and decision support to healthcare professionals. Broader Accessibility: As optimization techniques reduce computational costs, smaller healthcare providers will have better access to sophisticated AI tools, democratizing the benefits of advanced technologies. Enhanced Personalization: The ability to process vast amounts of patient data efficiently will lead to more personalized treatment plans and improved patient engagement. Research Advancements: Optimized AI models can expedite research processes, leading to faster discoveries in medical science and more rapid response to emerging health challenges. Conclusion In summary, the optimization of mixed-input matrix multiplication presents a significant opportunity to enhance the performance of AI applications in health and medicine. By addressing memory and computational challenges through innovative software techniques, HealthTech professionals can leverage AI to improve patient outcomes and operational efficiencies. As AI continues to evolve, the implications for healthcare will be profound, offering new possibilities for innovation and improved care. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here

Contextual Overview: The Intersection of Sports Analytics and the 2026 FIFA World Cup The upcoming 2026 FIFA World Cup marks a significant milestone in the realm of global sports, particularly with the expansion of the tournament to include 48 teams. This increase necessitates adjustments in various operational aspects, including the draw process for team groups. As the draw approaches, it is imperative to consider how advancements in artificial intelligence (AI) and sports analytics can enhance the understanding and preparation of stakeholders, including teams, analysts, and fans alike. By leveraging data-driven insights, enthusiasts can better navigate the complexities of the tournament and improve their predictive capabilities regarding outcomes. Main Goal and Its Achievements The primary goal of the original post is to elucidate the mechanics of the World Cup draw and outline potential scenarios for the United States Men’s National Team (USMNT). Achieving this entails a thorough breakdown of the draw process, including the categorization of teams into pots based on FIFA rankings and the implications of these rankings on matchups. By analyzing historical data and current performance metrics, stakeholders can gain insights into the likelihood of favorable or unfavorable group placements for the USMNT, thereby enhancing strategic planning and resource allocation. Advantages of AI in Sports Analytics Enhanced Predictive Analytics: AI algorithms can analyze vast datasets to identify patterns in team performance, which can inform predictions about group outcomes. For instance, understanding the historical performance of teams in similar draw scenarios can lead to more accurate forecasts. Real-Time Data Processing: The ability to process data in real-time allows for immediate adjustments in strategies, contributing to improved decision-making during the tournament. This capability can be crucial during group stages where match outcomes influence progression. Comprehensive Profiling: AI tools can provide detailed profiles of teams, including player statistics, injury reports, and tactical formations. Such profiles enable analysts to assess strengths and weaknesses effectively, shaping game strategies. Fan Engagement: Advanced analytics can enhance the viewing experience for fans by delivering personalized content and predictions, thus increasing audience engagement and interest in the tournament. Limitations and Caveats Despite the numerous advantages, there are inherent limitations to relying solely on AI in sports analytics. Predictive models are only as good as the data fed into them; thus, inaccurate or incomplete data can lead to misleading conclusions. Additionally, the unpredictable nature of sports, influenced by human factors such as player psychology and unforeseen events (e.g., injuries), may not be fully accounted for by AI models. Future Implications of AI Developments in Sports As technology continues to evolve, the integration of AI in sports analytics is expected to deepen, leading to more sophisticated predictive tools and methodologies. Future developments may include enhanced machine learning algorithms that can adapt to new data inputs more effectively and provide more nuanced insights into team dynamics and match outcomes. Additionally, the use of AI in real-time decision-making during matches could revolutionize coaching strategies and player substitutions, ultimately influencing the trajectory of upcoming tournaments like the World Cup. Conclusion In summary, the 2026 FIFA World Cup presents a unique opportunity to explore the intersection of sports analytics and AI. By understanding the intricacies of the draw process and leveraging data-driven insights, stakeholders can enhance their strategic approaches and engage more meaningfully with the event. As AI technologies continue to evolve, their application in sports analytics will likely yield profound implications for both teams and fans alike, shaping the future landscape of competitive sports. Disclaimer The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly. Source link : Click Here