Introduction

The ongoing legal proceedings surrounding the Musk v. Altman case have illuminated critical issues within the artificial intelligence (AI) sector, particularly concerning the ethical and operational frameworks of organizations like OpenAI. As AI technologies continue to permeate various industries, including finance and fintech, the implications of this trial extend beyond the courtroom into the practices and responsibilities of financial professionals. This blog post aims to contextualize the significance of the Musk v. Altman case, analyze its implications for the AI landscape in finance, and elucidate how financial professionals can navigate these emerging challenges.

Context and Significance of the Musk v. Altman Case

The Musk v. Altman trial underscores a pivotal moment in the evolution of AI organizations, particularly those transitioning from nonprofit to for-profit models. Elon Musk’s allegations against OpenAI, including breaches of fiduciary duty and failure to adhere to nonprofit principles, raise fundamental questions about the governance and accountability of AI entities. As the jury prepares to deliberate, the outcome will serve as a benchmark for the legal expectations placed on AI organizations, especially those involved in financial applications.

Main Goals and Achievements

The primary goal of the Musk v. Altman case is to establish a legal precedent regarding the responsibilities of AI companies in maintaining ethical practices while pursuing profitability. Achieving this goal entails determining whether OpenAI’s transition to a for-profit model constituted a breach of trust with its stakeholders, particularly its early supporters like Musk. The case, therefore, aims not only to address Musk’s claims but also to set a standard for future AI ventures, particularly in how they balance innovation with ethical obligations.

Advantages for Financial Professionals

Enhanced Accountability: The legal outcomes of the Musk v. Altman case could lead to more stringent regulations governing AI in finance, enhancing accountability and ethical standards within the industry. This development could foster greater trust among clients and investors.

Improved Compliance Strategies: Financial professionals may benefit from clearer compliance frameworks that emerge from the case, enabling them to align their practices with evolving legal standards in AI application.

Guidance on Ethical AI Use: The trial highlights the need for ethical considerations in AI deployment, guiding financial professionals on how to responsibly integrate AI technologies into their strategies and operations.

Informed Decision-Making: Understanding the implications of the trial can empower financial professionals to make informed decisions about partnerships with AI firms, ensuring alignment with ethical practices and regulatory compliance.

Caveats and Limitations

While the potential advantages are significant, it is essential to recognize the limitations inherent in the legal outcomes of the Musk v. Altman case. The advisory nature of the jury’s verdict means that ultimate decisions may still vary based on judicial interpretations. Additionally, the rapidly evolving nature of AI technologies may outpace the regulatory frameworks developed in response to this case, necessitating ongoing adaptation by financial professionals.

Future Implications of AI in Finance

The implications of ongoing AI developments in finance are profound. As AI technologies continue to advance, financial institutions will increasingly rely on AI for data analysis, risk assessment, and compliance monitoring. However, the Musk v. Altman case serves as a cautionary tale, emphasizing the need for ethical governance and transparent operational practices. Future developments may lead to more robust regulatory frameworks that ensure AI technologies are deployed responsibly, fostering innovation while safeguarding stakeholder interests.

Conclusion

The Musk v. Altman trial exemplifies a critical intersection between legal accountability and technological innovation within the AI sector. As the jury deliberates and the implications unfold, financial professionals must remain vigilant and adaptive to the evolving landscape shaped by these proceedings. By understanding the lessons from this case, they can better navigate the challenges and opportunities presented by AI technologies in finance.

Disclaimer

The content on this site is generated using AI technology that analyzes publicly available blog posts to extract and present key takeaways. We do not own, endorse, or claim intellectual property rights to the original blog content. Full credit is given to original authors and sources where applicable. Our summaries are intended solely for informational and educational purposes, offering AI-generated insights in a condensed format. They are not meant to substitute or replicate the full context of the original material. If you are a content owner and wish to request changes or removal, please contact us directly.

Introduction

The rapid advancements in artificial intelligence (AI) and machine learning (ML) technologies are reshaping various industries, including Computer Vision and Image Processing. The recent workshop titled “Building with AI 2026” highlighted the potential of utilizing tools like Gemini CLI in conjunction with Model Context Protocol (MCP) to streamline development processes. This blog post explores the implications of these technologies for Vision Scientists, detailing the goals, advantages, and future trends in the field.

Main Goal and Achievement

The primary goal discussed in the workshop is to enhance the efficiency of deploying AI models in practical applications, specifically in creating bots and applications that can interact with users effectively. This can be achieved by leveraging Gemini CLI, which allows developers to articulate their needs in natural language, thus simplifying the deployment process. By employing MCPs, developers can access Google’s extensive resources without grappling with complex command-line interfaces, enabling a more intuitive experience.

Advantages of Using Gemini CLI and MCPs

Streamlined Development Process: The integration of Gemini CLI with official MCPs allows for a more cohesive development workflow. Developers can directly access Google’s APIs and resources, reducing the time spent on setup and configuration.

Enhanced Accessibility: The natural language interface of Gemini CLI lowers the barrier to entry for new developers. This democratizes access to advanced AI tools, allowing scientists and engineers from various backgrounds to contribute to projects without needing extensive command-line expertise.

Official Documentation Access: The Google Developer Knowledge MCP provides verified information directly from official documentation. This minimizes the risks associated with outdated or misleading information encountered during web searches, ensuring that developers are working with the most current data.

Reducing Errors: By facilitating a direct line of communication between developers and the tools they use, Gemini CLI enables the identification and resolution of errors in real-time. This iterative feedback loop is crucial for refining AI models and ensuring robust performance.

Reproducibility of Results: The structured workflows established by deploying models through Gemini CLI ensure that results can be consistently reproduced. This is particularly important in scientific research, where reproducibility is a cornerstone of valid experimentation.

Caveats and Limitations

While the advancements presented are promising, it is essential to recognize certain limitations. The reliance on cloud-based services necessitates a stable internet connection and may incur costs associated with API usage and data storage. Additionally, as the tools evolve, ongoing updates and changes in APIs may require developers to adapt their workflows continuously.

Future Implications

The integration of AI into Computer Vision and Image Processing is expected to accelerate in the coming years. With continuous improvements in tools like Gemini CLI and the growing adoption of MCPs, Vision Scientists will be empowered to create more sophisticated applications that leverage real-time data processing and user interaction. Future iterations of these technologies may further simplify the development process, enabling scientists to focus more on innovative research rather than the complexities of deployment.

Conclusion

In conclusion, the workshop on Gemini CLI and MCPs illustrates the transformative potential of AI technologies in the realm of Computer Vision and Image Processing. By simplifying development workflows, enhancing accessibility, and ensuring the use of reliable resources, these tools present significant advantages for Vision Scientists. As the technology continues to evolve, it holds the promise of fostering greater innovation and efficiency in the field.

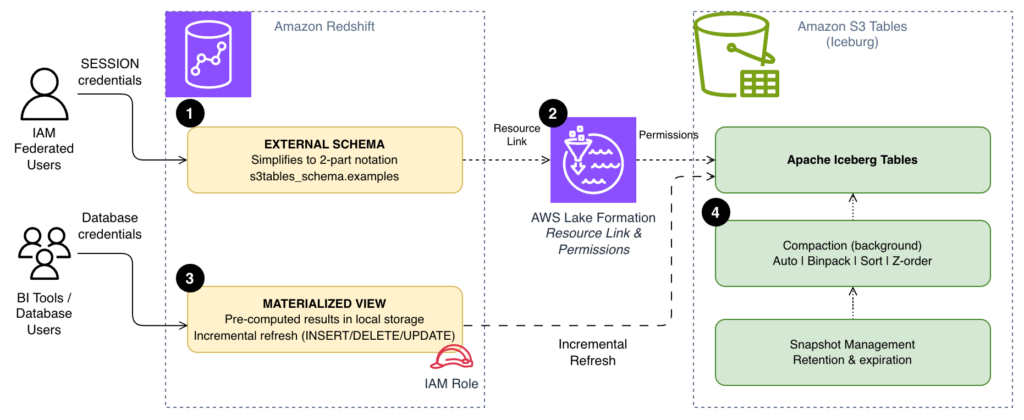

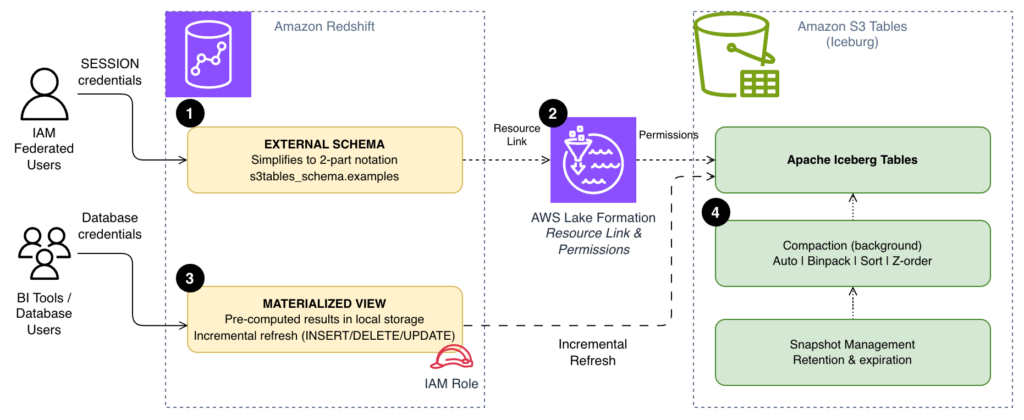

Contextual Overview

The integration of Amazon S3 Tables and Amazon Redshift presents a robust framework for executing analytical workloads, particularly when utilizing Apache Iceberg tables. As query volumes surge, inefficiencies are magnified, most visibly with recurrent queries. These include frequent dashboard refreshes or repetitive data joins executed by analysts throughout the day, each of which scans data directly from Amazon Simple Storage Service (Amazon S3). Furthermore, reliance on fully qualified three-part table references can introduce unnecessary complexity for business intelligence (BI) tools and end users accustomed to more straightforward SQL syntax. Additionally, without appropriate data file organization in S3 Tables, queries may read more files than necessary. By addressing these critical areas, one can significantly enhance the speed, simplicity, and cost-effectiveness of S3 Tables queries in Amazon Redshift, supporting both recurring dashboards and large-scale ad hoc analyses.

Main Goal and Achievable Outcomes

The primary objective of optimizing S3 Tables queries with Amazon Redshift is to enhance performance and usability through three key strategies: simplifying query syntax with external schemas, utilizing materialized views for storing pre-computed results, and implementing compaction strategies that align data file organization with query patterns. Achieving this optimization can lead to improved query execution times, increased accessibility for end users, and reduced operational costs. By employing these methodologies, organizations can ensure that data retrieval processes are streamlined and more efficient, thus fostering an environment conducive to data-driven decision-making.
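The idea behind storing pre-computed results can be sketched in a few lines of Python. This is a minimal illustration, not Redshift syntax: SQLite (from the standard library) stands in for the warehouse, a plain summary table emulates a materialized view, and the `sales` table, the `sales_by_region` name, and the sample rows are all hypothetical.

```python
import sqlite3

# The recurring "dashboard" aggregate that would otherwise rescan the base data.
DASHBOARD_QUERY = (
    "SELECT region, SUM(amount) AS total FROM sales GROUP BY region ORDER BY region"
)


def build_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with a small, hypothetical sales table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE sales (region TEXT, amount INTEGER)")
    conn.executemany(
        "INSERT INTO sales VALUES (?, ?)",
        [("east", 100), ("east", 50), ("west", 70), ("west", 30), ("north", 20)],
    )
    return conn


def refresh_summary(conn: sqlite3.Connection) -> None:
    """Emulate a full refresh: recompute the aggregate and store the result."""
    conn.execute("DROP TABLE IF EXISTS sales_by_region")
    conn.execute("CREATE TABLE sales_by_region AS " + DASHBOARD_QUERY)


def read_summary(conn: sqlite3.Connection) -> list:
    """Serve the dashboard from the stored result instead of the base table."""
    return conn.execute(
        "SELECT region, total FROM sales_by_region ORDER BY region"
    ).fetchall()


if __name__ == "__main__":
    conn = build_demo_db()
    refresh_summary(conn)
    print(read_summary(conn))  # served from the pre-computed table

    # New data arrives: the stored result is stale until the next refresh.
    conn.execute("INSERT INTO sales VALUES ('north', 5)")
    print(read_summary(conn) == conn.execute(DASHBOARD_QUERY).fetchall())
    refresh_summary(conn)
    print(read_summary(conn) == conn.execute(DASHBOARD_QUERY).fetchall())
```

The sketch also shows the trade-off behind the speedup: reads are cheap because they skip the base data, but the stored result is only as fresh as its last refresh.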
Advantages of Optimization

Simplified Query Syntax: By transitioning from three-part to two-part notation via external schemas, query execution becomes less cumbersome for users, particularly in BI tools and application code.

Enhanced Performance with Materialized Views: Materialized views allow for the local storage of pre-computed results, significantly reducing the frequency and volume of data scans against S3, which can lead to substantial performance gains.

Cost-Efficiency Through Compaction: Configuring compaction strategies to fit specific query patterns minimizes the number of files read during queries, thereby optimizing resource utilization and potentially lowering associated costs.

Incremental Refresh Capabilities: Redshift’s support for incremental refresh of materialized views enables handling of large datasets efficiently, as only changed rows are processed, reducing both time and computational expenses.

Limitations and Caveats

Despite the numerous advantages, there are important considerations to note. For instance, while materialized views provide speed advantages, they also require thoughtful management to avoid excessive storage costs. It is essential to balance the frequency of materialized view refreshes with the costs associated with storing pre-computed data. Additionally, the effectiveness of compaction strategies may vary based on the specific patterns of query access, necessitating careful monitoring and potential adjustments over time.

Future Implications

The landscape of data engineering is poised for significant transformation, particularly with the rise of artificial intelligence (AI). As AI technologies continue to evolve, they will likely enhance the capabilities of data processing and analytics platforms, making them more intelligent and adaptive. For instance, AI-driven optimization algorithms could automate the selection of compaction strategies based on real-time query patterns, further enhancing performance without manual intervention. Additionally, machine learning could be employed to predict query loads and optimize resource allocation dynamically, ensuring that data retrieval processes are not only efficient but also scalable. As these technologies develop, they will undoubtedly shape the future of data engineering, offering new avenues for efficiency and insight extraction from vast datasets.
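Why file organization affects how many files a query reads can be shown with a toy model. The sketch below assumes each data file records the minimum and maximum of one key column (Apache Iceberg keeps per-file column bounds of this kind) and that a range query must read every file whose bounds overlap the filter. The function names, row counts, and query range are hypothetical, and the model ignores everything else a real engine does.

```python
import random


def write_files(keys, rows_per_file):
    """Split keys into files in the given order; keep each file's (min, max)."""
    files = []
    for start in range(0, len(keys), rows_per_file):
        chunk = keys[start:start + rows_per_file]
        files.append((min(chunk), max(chunk)))
    return files


def files_scanned(files, lo, hi):
    """Count files whose [min, max] bounds overlap the query range [lo, hi]."""
    return sum(1 for fmin, fmax in files if fmax >= lo and fmin <= hi)


def compare_layouts(n_rows=10_000, rows_per_file=500, lo=1_000, hi=1_499, seed=0):
    """Return (files read with arbitrary layout, files read with sorted layout)."""
    keys = list(range(n_rows))
    random.Random(seed).shuffle(keys)  # rows land in files in arrival order
    unsorted_files = write_files(keys, rows_per_file)
    sorted_files = write_files(sorted(keys), rows_per_file)  # sort-style compaction
    return (
        files_scanned(unsorted_files, lo, hi),
        files_scanned(sorted_files, lo, hi),
    )


if __name__ == "__main__":
    unsorted_count, sorted_count = compare_layouts()
    print(f"files read, arbitrary layout: {unsorted_count}")
    print(f"files read, sorted layout:    {sorted_count}")
```

With the key column sorted across files, the example range falls inside a single file's bounds, while the arbitrary layout leaves almost every file overlapping the filter. The benefit depends on queries actually filtering on the sorted column, which is why the choice of compaction strategy has to follow the observed query patterns.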

Context of Generative Engine Optimization

The landscape of brand discovery is undergoing a rapid transformation, influenced significantly by advancements in artificial intelligence (AI). Marketers are increasingly recognizing that consumer behavior is shifting towards channels where brands either gain visibility through AI-generated responses or risk becoming invisible. This phenomenon is encapsulated in the concept of Generative Engine Optimization (GEO), which emphasizes the need for marketers to structure their content and digital presence so that AI platforms, such as ChatGPT, Google AI, and others, can effectively understand, cite, and recommend their brands. Unlike traditional Search Engine Optimization (SEO), which primarily relies on link-based rankings, GEO prioritizes structured data and machine-readable content, enhancing the efficacy of existing SEO strategies rather than replacing them.

Main Goals of Generative Engine Optimization

The principal aim of GEO is to secure a tangible and measurable advantage in brand visibility within AI-generated content. This can be achieved by adopting strategies that enhance the machine-readability of digital assets, thereby ensuring that brands are cited in responses generated by AI platforms. Marketers are encouraged to embrace GEO by integrating structured data, utilizing schema markup, and focusing on content formats that align with user queries. By doing so, they can effectively navigate the complexities of AI visibility and leverage it to drive higher-quality traffic to their websites.

Advantages of Generative Engine Optimization

Enhanced Visibility in AI Responses: GEO allows brands to appear directly within AI-generated answers, significantly increasing the chances of engagement at a moment of high purchase intent.

Higher-Quality Leads: Traffic driven by AI referrals tends to exhibit stronger purchase intent, resulting in improved conversion rates compared to traditional organic search. Studies have shown that leads from AI interactions convert at rates up to 4.4 times higher.

Increased Brand Authority: Being cited by AI as a trusted source enhances a brand’s perceived authority, which can lead to greater consumer trust and loyalty.

Compound Authority Across Platforms: Successful citations in one AI platform can boost visibility across multiple platforms, creating a cycle of increased authority and visibility.

Measurable Performance Metrics: GEO introduces new key performance indicators (KPIs) that allow marketers to assess their AI visibility effectively, such as citation frequency and share of voice in AI-generated content.

Optimized Content ROI: Existing content can be repurposed and optimized for GEO, allowing marketers to unlock additional visibility without significant new investment.

Caveats and Limitations

While the benefits of GEO are compelling, several challenges must be navigated. The complexity of tracking AI visibility can pose a significant barrier, as traditional metrics may not accurately reflect performance in this new landscape. Additionally, there are risks associated with AI hallucinations, where the AI may generate inaccurate or misleading information about the brand. Furthermore, implementing structured data and schema markup can require technical expertise that some marketing teams may lack.

Future Implications of AI Developments on Marketing

The ongoing evolution of AI will undoubtedly shape the future of marketing strategies. As AI technologies become more sophisticated, the demand for structured and machine-readable content will increase. Marketers who proactively adopt GEO strategies will not only enhance their brand visibility but also position themselves to adapt to future trends in consumer behavior and technology. The integration of AI into the marketing ecosystem will likely lead to more personalized and contextually relevant advertising, thereby fostering deeper connections between brands and consumers.
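For teams unsure what structured data and schema markup look like in practice, the sketch below builds a schema.org `FAQPage` object as JSON-LD and wraps it in a script tag. It also computes a simple share-of-voice figure. The question and answer text and the brand names are hypothetical, and the share-of-voice definition used here (a brand's mentions divided by all brand mentions in a sample of AI answers) is an assumption, since the post does not define the metric.

```python
import json


def faq_jsonld(questions):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in questions
        ],
    }


def to_script_tag(data):
    """Serialize JSON-LD for embedding in an HTML page."""
    # Escape "</" so answer text cannot close the script element early;
    # "\/" is a valid JSON escape for "/".
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + payload + "\n</script>"


def share_of_voice(mentions, brand):
    """Fraction of sampled brand mentions that belong to `brand` (assumed definition)."""
    if not mentions:
        return 0.0
    return mentions.count(brand) / len(mentions)


if __name__ == "__main__":
    markup = faq_jsonld(
        [("What does the product do?", "It schedules deliveries for small shops.")]
    )
    print(to_script_tag(markup))
    # Hypothetical sample: which brand each sampled AI answer cited.
    print(share_of_voice(["acme", "other", "acme", "rival"], "acme"))
```

Generating the markup from the same source as the visible page content keeps the two in sync, which matters because markup that contradicts the page undermines the goal of being cited accurately.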

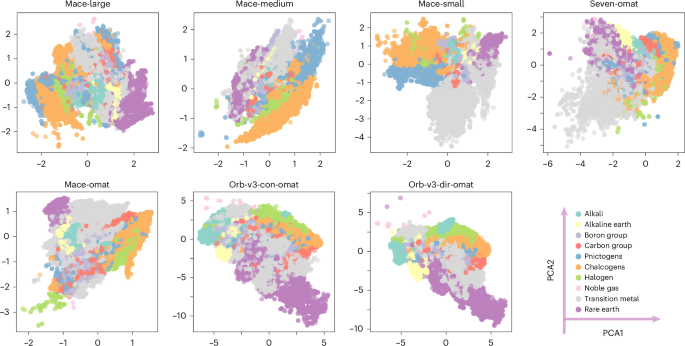

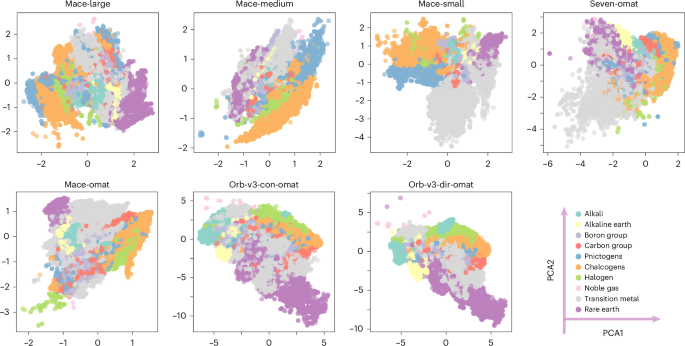

Contextualizing Machine Learning in Smart Manufacturing and Robotics

The integration of machine learning interatomic potentials (MLIPs) into smart manufacturing and robotics is revolutionizing how industrial technologists approach materials design and production processes. By leveraging advanced ML models, such as those represented in the study of Platonic representation, various architectures can be employed to enhance energy efficiency and promote sustainability in manufacturing operations. These models utilize diverse datasets to yield insights into material properties, thereby facilitating the design of more effective and innovative manufacturing processes.

In the context of smart manufacturing, the challenge lies in aligning incompatible representations derived from different ML approaches. The analysis of seven foundational MLIPs, each embodying unique architectures and datasets for energy conservation, illustrates the need for a unified representation that enables meaningful comparisons across models. This alignment is crucial for harnessing the full potential of machine learning in developing new materials and optimizing manufacturing workflows.

Main Goal and Achievement Strategies

The primary goal of the Platonic representation framework is to establish a cohesive and standardized method for comparing different machine learning models in terms of their interatomic potentials. This can be achieved through the construction of a unified latent space that accommodates model agnosticism, geometric faithfulness, sufficient diversity, and robustness. To attain this goal, four key strategies are implemented:

1. **Model Agnosticism**: The approach must function independently of the internal workings of individual models.
2. **Geometric Faithfulness**: The representation should preserve the relative distances and neighborhood relationships among atomic environments.
3. **Diversity**: The framework must encompass a wide chemical space to effectively distinguish between chemically diverse environments.
4. **Robustness**: The representation must be invariant to random seed choices and anchor permutations, ensuring that structural insights remain consistent across model variations.

Advantages of Unified Representation

1. **Improved Comparability**: By creating a common coordinate system, models can be directly compared, allowing technologists to select the most effective MLIP for specific applications. This is supported by evidence that even minimal anchor sets can align embeddings across different architectures.
2. **Enhanced Predictive Power**: A unified representation captures essential chemical trends and relationships, thereby improving the models’ predictive capabilities. The study highlights how utilizing various sampling strategies, such as DIRECT sampling, can lead to better coverage and representation of chemical landscapes.
3. **Facilitation of Model Interoperability**: The framework allows for seamless integration and compatibility between different ML models. This can lead to more versatile applications in manufacturing, as models can be combined to leverage their strengths.
4. **Robustness Against Variability**: The proposed representation framework ensures that variations in training setup do not compromise the integrity of the results. It offers a method to detect deviations and assess the reliability of different models.
5. **Ground-Truth-Free Assessment**: By utilizing manifold geometry, the framework provides a means to evaluate structural typicality without relying on pre-defined labels, which can be particularly beneficial in exploratory research scenarios.

Future Implications of AI Developments

The evolution of artificial intelligence and machine learning is poised to significantly impact the field of smart manufacturing and robotics. As AI technologies continue to advance, the capabilities of MLIPs are expected to improve, leading to more accurate and efficient predictive models. This will enable industrial technologists to innovate at an unprecedented pace, ultimately enhancing productivity and sustainability in manufacturing processes.

Furthermore, the ongoing development of AI will facilitate more sophisticated algorithms capable of analyzing complex data sets, thereby unlocking new insights into material properties and behaviors. This could lead to the discovery of novel materials with superior characteristics, further pushing the boundaries of what is achievable in smart manufacturing.

In conclusion, the integration of Platonic representation in MLIPs offers a pathway to enhance the comparability, predictive power, and robustness of machine learning models in the manufacturing sector. As AI continues to evolve, its influence on material science and manufacturing will undoubtedly expand, fostering a new era of innovation and efficiency in industrial processes.
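The post does not say how the common coordinate system is built, so the following is only a sketch of one known approach, anchor-based relative representations, and not necessarily the study's method. Each embedding is described by its cosine similarity to a shared set of anchor samples. If two models' latent spaces differ by a rotation, those similarities are unchanged, so the re-expressed embeddings become directly comparable. The 2D points, the rotation angle, and the anchor indices are hypothetical, and real models differ by more than a rotation, so exact agreement should not be expected in practice.

```python
import math


def rotate(points, theta):
    """Rotate 2D points by theta radians (stand-in for a second model's latent space)."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y, s * x + c * y) for x, y in points]


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.hypot(*u) * math.hypot(*v))


def relative_representation(embeddings, anchor_ids):
    """Describe each embedding by its cosine similarity to the anchor embeddings."""
    anchors = [embeddings[i] for i in anchor_ids]
    return [[cosine(e, a) for a in anchors] for e in embeddings]


def max_difference(rep_a, rep_b):
    """Largest element-wise gap between two relative representations."""
    return max(
        abs(x - y) for row_a, row_b in zip(rep_a, rep_b) for x, y in zip(row_a, row_b)
    )


if __name__ == "__main__":
    # Hypothetical embeddings of the same four atomic environments from "model A".
    model_a = [(1.0, 0.0), (0.5, 0.5), (-0.3, 0.8), (0.2, -0.9)]
    # "Model B" encodes the same geometry in a rotated coordinate system.
    model_b = rotate(model_a, 1.1)

    anchor_ids = [0, 2]  # the same samples serve as anchors for both models
    rel_a = relative_representation(model_a, anchor_ids)
    rel_b = relative_representation(model_b, anchor_ids)
    print("raw coordinates equal:", model_a == model_b)
    print("max gap in relative coordinates:", max_difference(rel_a, rel_b))
```

In this construction the raw coordinates of the two models disagree while the anchor-relative coordinates match up to floating-point error, which is the sense in which a small anchor set can place different architectures in a shared frame.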

Contextual Overview

The landscape of biotechnology is undergoing a transformative evolution, driven by advancements in cell therapy and the emerging role of artificial intelligence (AI) in health and medicine. Notably, companies like CREATE Medicines are pioneering innovative therapies, exemplified by their recent fundraising of $122 million aimed at developing in vivo CAR-T therapies for autoimmune diseases. Such endeavors highlight a significant shift in the focus of cell therapies beyond oncology, signaling an expansion of treatment modalities that can potentially benefit a wider range of patients.

In parallel to these scientific advancements, the political environment surrounding regulatory agencies, such as the U.S. Food and Drug Administration (FDA), is also in flux. The ongoing search for a new FDA commissioner under the Trump administration indicates potential shifts in regulatory policy that could directly affect the approval processes for new biotechnological therapies. Together, these elements underscore the dynamic interplay between scientific innovation and regulatory frameworks, which HealthTech professionals must navigate carefully.

Main Goal and Achieving It

The primary goal articulated in the recent developments within the biotechnology sector is to enhance the therapeutic landscape through innovative cell therapies while ensuring that regulatory bodies adapt to these advancements. Achieving this goal requires a multifaceted approach that includes robust scientific research, strategic funding, and active engagement with regulatory agencies. HealthTech professionals play a crucial role in this process, serving as intermediaries who facilitate communication between researchers, investors, and regulatory authorities to streamline the path from laboratory to clinic.

Advantages of Current Developments

Expanded Therapeutic Applications: The diversification of cell therapies beyond cancer treatment promises to address unmet medical needs in autoimmune diseases and other conditions, potentially improving patient outcomes.

Increased Investment: Significant funding, as evidenced by CREATE Medicines’ $122 million raise, catalyzes research and development, enabling more rapid advancements in therapeutic options.

AI Integration: The incorporation of AI technologies can enhance the efficiency of drug discovery and development processes, allowing for more personalized medicine approaches and better patient stratification.

Regulatory Adaptation: As regulatory bodies begin to evolve, there is an opportunity for HealthTech professionals to influence policy frameworks that can accommodate innovative therapies while ensuring patient safety.

However, it is important to acknowledge that challenges remain, including the complexities of regulatory approval and the need for extensive clinical validation for new therapies. Additionally, the ethical implications of AI in health care must be carefully considered to avoid biases in treatment recommendations.

Future Implications of AI in Biotech

As AI technologies continue to evolve, their integration into the biotechnology sector is likely to reshape the landscape of health and medicine significantly. Future developments may include enhanced predictive analytics for patient outcomes, more sophisticated biomarker identification, and streamlined clinical trial processes. Furthermore, AI is poised to facilitate real-time data analysis, enabling more adaptive and responsive therapeutic strategies.

For HealthTech professionals, staying abreast of these advancements will be critical. Embracing AI not only enhances operational efficiencies but also positions professionals to leverage new insights that can lead to innovative therapeutic solutions. The ongoing collaboration between biotech companies and AI developers will be paramount in navigating this complex ecosystem, ultimately driving a new era of health care that is more personalized, efficient, and effective.